Amazon Redshift helps querying knowledge saved utilizing Apache Iceberg tables, an open desk format that simplifies administration of tabular knowledge residing in knowledge lakes on Amazon Easy Storage Service (Amazon S3). Amazon S3 Tables delivers the primary cloud object retailer with built-in Iceberg assist and streamlines storing tabular knowledge at scale, together with continuous desk optimizations that assist enhance question efficiency. Amazon SageMaker Lakehouse unifies your knowledge throughout S3 knowledge lakes, together with S3 Tables, and Amazon Redshift knowledge warehouses, helps you construct highly effective analytics and synthetic intelligence and machine studying (AI/ML) functions on a single copy of knowledge, querying knowledge saved in S3 Tables with out the necessity for complicated extract, rework, and cargo (ETL) or knowledge motion processes. You’ll be able to benefit from the scalability of S3 Tables to retailer and handle giant volumes of knowledge, optimize prices by avoiding extra knowledge motion steps, and simplify knowledge administration by means of centralized fine-grained entry management from SageMaker Lakehouse.

On this publish, we display the right way to get began with S3 Tables and Amazon Redshift Serverless for querying knowledge in Iceberg tables. We present the right way to arrange S3 Tables, load knowledge, register them within the unified knowledge lake catalog, arrange fundamental entry controls in SageMaker Lakehouse by means of AWS Lake Formation, and question the info utilizing Amazon Redshift.

Notice – Amazon Redshift is only one possibility for querying knowledge saved in S3 Tables. You’ll be able to study extra about S3 Tables and extra methods to question and analyze knowledge on the S3 Tables product web page.

Resolution overview

On this resolution, we present the right way to question Iceberg tables managed in S3 Tables utilizing Amazon Redshift. Particularly, we load a dataset into S3 Tables, hyperlink the info in S3 Tables to a Redshift Serverless workgroup with acceptable permissions, and eventually run queries to investigate our dataset for traits and insights. The next diagram illustrates this workflow.

On this publish, we are going to stroll by means of the next steps:

- Create a desk bucket in S3 Tables and combine with different AWS analytics companies.

- Arrange permissions and create Iceberg tables with SageMaker Lakehouse utilizing Lake Formation.

- Load knowledge with Amazon Athena. There are alternative ways to ingest knowledge into S3 Tables, however for this publish, we present how we are able to rapidly get began with Athena.

- Use Amazon Redshift to question your Iceberg tables saved in S3 Tables by means of the auto mounted catalog.

Stipulations

The examples on this publish require you to make use of the next AWS companies and options:

Create a desk bucket in S3 Tables

Earlier than you should use Amazon Redshift to question the info in S3 Tables, you have to first create a desk bucket. Full the next steps:

- Within the Amazon S3 console, select Desk buckets on the left navigation pane.

- Within the Integration with AWS analytics companies part, select Allow integration in case you haven’t beforehand set this up.

This units up the mixing with AWS analytics companies, together with Amazon Redshift, Amazon EMR, and Athena.

After a couple of seconds, the standing will change to Enabled.

- Select Create desk bucket.

- Enter a bucket title. For this instance, we use the bucket title

redshifticeberg. - Select Create desk bucket.

After the S3 desk bucket is created, you can be redirected to the desk buckets record.

Now that your desk bucket is created, the subsequent step is to configure the unified catalog in SageMaker Lakehouse by means of the Lake Formation console. This may make the desk bucket in S3 Tables out there to Amazon Redshift for querying Iceberg tables.

Publishing Iceberg tables in S3 Tables to SageMaker Lakehouse

Earlier than you may question Iceberg tables in S3 Tables with Amazon Redshift, you have to first make the desk bucket out there within the unified catalog in SageMaker Lakehouse. You are able to do this by means of the Lake Formation console, which helps you to publish catalogs and handle tables by means of the catalogs characteristic, and assign permissions to customers. The next steps present you the right way to arrange Lake Formation so you should use Amazon Redshift to question Iceberg tables in your desk bucket:

- In case you’ve by no means visited the Lake Formation console earlier than, you have to first accomplish that as an AWS consumer with admin permissions to activate Lake Formation.

You can be redirected to the Catalogs web page on the Lake Formation console. You will note that one of many catalogs out there is the s3tablescatalog, which maintains a catalog of the desk buckets you’ve created. The next steps will configure Lake Formation to make knowledge within the s3tablescatalog catalog out there to Amazon Redshift.

Subsequent, it is advisable to create a database in Lake Formation. The Lake Formation database maps to a Redshift schema.

- Select Databases beneath Information Catalog within the navigation pane.

- On the Create menu, select Database.

- Enter a reputation for this database. This instance makes use of

icebergsons3. - For Catalog, select the desk bucket that you just created. On this instance, the title could have the format

:s3tablescatalog/redshifticeberg - Select Create database.

You can be redirected on the Lake Formation console to a web page with extra details about your new database. Now you may create an Iceberg desk in S3 Tables.

- On the database particulars web page, on the View menu, select Tables.

This may open up a brand new browser window with the desk editor for this database.

- After the desk view masses, select Create desk to start out creating the desk.

- Within the editor, enter the title of the desk. We name this desk

examples. - Select the catalog (

:s3tablescatalog/redshifticeberg icebergsons3).

Subsequent, add columns to your desk.

- Within the Schema part, select Add column, and add a column that represents an ID.

- Repeat this step and add columns for added knowledge:

category_id(lengthy)insert_date(date)knowledge(string)

The ultimate schema appears like the next screenshot.

- Select Submit to create the desk.

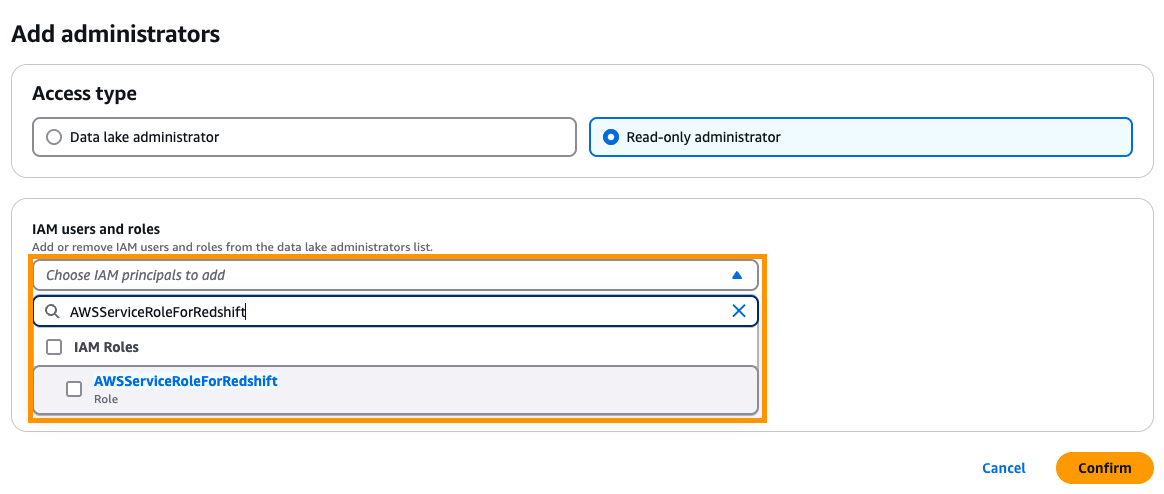

Subsequent, it is advisable to arrange a read-only permission so you may question Iceberg knowledge in S3 Tables utilizing the Amazon Redshift Question Editor v2. For extra data, see Stipulations for managing Amazon Redshift namespaces within the AWS Glue Information Catalog.

- Beneath Administration within the navigation pane, select Administrative roles and duties.

- Within the Information lake directors part, select Add.

- For Entry kind, choose Learn-only administrator.

- For IAM customers and roles, enter

AWSServiceRoleForRedshift.

AWSServiceRoleForRedshift is a service-linked position that’s managed by AWS.

- Select Verify.

You may have now configured SageMaker Lakehouse utilizing Lake Formation to permit Amazon Redshift to question Iceberg tables in S3 Tables. Subsequent, you populate some knowledge into the Iceberg desk, and question it with Amazon Redshift.

Use SQL to question Iceberg knowledge with Amazon Redshift

For this instance, we use Athena to load knowledge into our Iceberg desk. That is one possibility for ingesting knowledge into an Iceberg desk; see Utilizing Amazon S3 Tables with AWS analytics companies for different choices, together with Amazon EMR with Spark, Amazon Information Firehose, and AWS Glue ETL.

- On the Athena console, navigate to the question editor.

- If that is your first time utilizing Athena, you have to first specify a question end result location earlier than executing your first question.

- Within the question editor, beneath Information, select your knowledge supply (

AwsDataCatalog). - For Catalog, select the desk bucket you created (

s3tablescatalog/redshifticeberg). - For Database, select the database you created (

icebergsons3).

- Let’s execute a question to generate knowledge for the examples desk. The next question generates over 1.5 million rows akin to 30 days of knowledge. Enter the question and select Run.

The next screenshot exhibits our question.

The question takes about 10 seconds to execute.

Now you should use Redshift Serverless to question the info.

- On the Redshift Serverless console, provision a Redshift Serverless workgroup in case you haven’t already performed so. For directions, see Get began with Amazon Redshift Serverless knowledge warehouses information. On this instance, we use a Redshift Serverless workgroup known as

iceberg. - Be sure that your Amazon Redshift patch model is patch 188 or increased.

- Select Question knowledge to open the Amazon Redshift Question Editor v2.

- Within the question editor, select the workgroup you wish to use.

A pop-up window will seem, prompting what consumer to make use of.

- Choose Federated consumer, which is able to use your present account, and select Create connection.

It is going to take a couple of seconds to start out the connection. Whenever you’re linked, you will note an inventory of obtainable databases.

- Select Exterior databases.

You will note the desk bucket from S3 Tables within the view (on this instance, that is redshifticeberg@s3tablescatalog).

- In case you proceed clicking by means of the tree, you will note the

examplesdesk, which is the Iceberg desk you beforehand created that’s saved within the desk bucket.

Now you can use Amazon Redshift to question the Iceberg desk in S3 Tables.

Earlier than you execute the question, overview the Amazon Redshift syntax for querying catalogs registered in SageMaker Lakehouse. Amazon Redshift makes use of the next syntax to reference a desk: database@namespace.schema.desk or database@namespace".schema.desk.

On this instance, we use the next syntax to question the examples desk within the desk bucket: redshifticeberg@s3tablescatalog.icebergsons3.examples.

Be taught extra about this mapping in Utilizing Amazon S3 Tables with AWS analytics companies.

Let’s run some queries. First, let’s see what number of rows are within the examples desk.

- Run the next question within the question editor:

The question will take a couple of seconds to execute. You will note the next end result.

Let’s attempt a barely extra sophisticated question. On this case, we wish to discover all the times that had instance knowledge beginning with 0.2 and a category_id between 50–75 with not less than 130 rows. We are going to order the outcomes from most to least.

- Run the next question:

You would possibly see completely different outcomes than the next screenshot due the randomly generated supply knowledge.

Congratulations, you have got arrange and queried Iceberg knowledge in S3 Tables from Amazon Redshift!

Clear up

In case you carried out the instance and wish to take away the assets, full the next steps:

- In case you now not want your Redshift Serverless workgroup, delete the workgroup.

- In case you don’t must entry your SageMaker Lakehouse knowledge from the Amazon Redshift Question Editor v2, take away the info lake administrator:

- On the Lake Formation console, select Administrative roles and duties within the navigation pane.

- Take away the read-only knowledge lake administrator that has the

AWSServiceRoleForRedshiftprivilege.

- If you wish to completely delete the info from this publish, delete the database:

- On the Lake Formation console, select Databases within the navigation pane.

- Delete the

icebergsaheaddatabase.

- In case you now not want the desk bucket, delete the desk bucket.

- In you wish to deactivate the mixing between S3 Tables and AWS analytics companies, see Migrating to the up to date integration course of.

Conclusion

On this publish, we confirmed the right way to get began with Amazon Redshift to question Iceberg tables saved in S3 Tables. That is just the start for a way you should use Amazon Redshift to investigate your Iceberg knowledge that’s saved in S3 Tables—you may mix this with different Amazon Redshift options, together with writing queries that be a part of knowledge from Iceberg tables saved in S3 Tables and Redshift Managed Storage (RMS), or implement knowledge entry controls that provide you with fine-granted entry management guidelines for various customers throughout the S3 Tables. Moreover, you should use options like Redshift Serverless to robotically choose the quantity of compute for analyzing your Iceberg tables, and use AI to intelligently scale on demand and optimize question efficiency traits to your analytical workload.

We invite you to go away suggestions within the feedback.

Concerning the Authors

Jonathan Katz is a Principal Product Supervisor – Technical on the Amazon Redshift staff and relies in New York. He’s a Core Workforce member of the open supply PostgreSQL mission and an lively open supply contributor, together with PostgreSQL and the pgvector mission.

Jonathan Katz is a Principal Product Supervisor – Technical on the Amazon Redshift staff and relies in New York. He’s a Core Workforce member of the open supply PostgreSQL mission and an lively open supply contributor, together with PostgreSQL and the pgvector mission.

Satesh Sonti is a Sr. Analytics Specialist Options Architect based mostly out of Atlanta, specialised in constructing enterprise knowledge platforms, knowledge warehousing, and analytics options. He has over 19 years of expertise in constructing knowledge property and main complicated knowledge platform applications for banking and insurance coverage purchasers throughout the globe.

Satesh Sonti is a Sr. Analytics Specialist Options Architect based mostly out of Atlanta, specialised in constructing enterprise knowledge platforms, knowledge warehousing, and analytics options. He has over 19 years of expertise in constructing knowledge property and main complicated knowledge platform applications for banking and insurance coverage purchasers throughout the globe.