In a recent project, we were tasked with designing how we would replace a Mainframe system with a cloud-native application, building a roadmap and a business case to secure funding for the multi-year modernisation effort required. We were wary of the risks and potential pitfalls of a Big Design Up Front, so we advised our client to work on a ‘just enough, and just in time’ upfront design, with engineering during the first phase. Our client liked our approach and selected us as their partner.

The system was built for a UK-based client’s data platform and customer-facing products. This was a very complex and challenging task given the size of the Mainframe, which had been built over 40 years, with a variety of technologies that have changed significantly since they were first released.

Our approach is based on incrementally moving capabilities from the mainframe to the cloud, allowing a gradual legacy displacement rather than a “Big Bang” cutover. To do this, we needed to identify places in the mainframe design where we could create seams: places where we can insert new behaviour with the smallest possible changes to the mainframe’s code. We can then use these seams to create duplicate capabilities on the cloud, dual run them with the mainframe to verify their behaviour, and then retire the mainframe capability.

ThoughtWorks were involved for the first year of the programme, after which we handed over our work to our client to take it forward. In that timeframe we did not put our work into production; nevertheless, we trialled multiple approaches that can help you get started more quickly and ease your own Mainframe modernisation journey. This article provides an overview of the context in which we worked, and outlines the approach we followed for incrementally moving capabilities off the Mainframe.

Contextual Background

The Mainframe hosted a diverse range of services crucial to the client’s business operations. Our programme focused specifically on the data platform designed for insights on Consumers in UK&I (United Kingdom & Ireland). This particular subsystem on the Mainframe comprised approximately 7 million lines of code, developed over a span of 40 years. It provided roughly 50% of the capabilities of the UK&I estate, but accounted for approximately 80% of MIPS (million instructions per second) from a runtime perspective. The system was significantly complex, and that complexity was further exacerbated by domain responsibilities and concerns spread across multiple layers of the legacy environment.

Several reasons drove the client’s decision to transition away from the Mainframe environment:

- Changes to the system were slow and expensive. The business therefore had difficulty keeping pace with a rapidly evolving market, which held back innovation.
- Operational costs associated with running the Mainframe system were high. The client faced a commercial risk from an imminent price increase by a core software vendor.
- Although our client had the skill sets needed to run the Mainframe, it had proven hard to find new professionals with expertise in this tech stack, as the pool of skilled engineers in this domain is limited. Furthermore, the job market does not offer as many opportunities for Mainframe work, so people are not incentivised to learn how to develop and operate them.

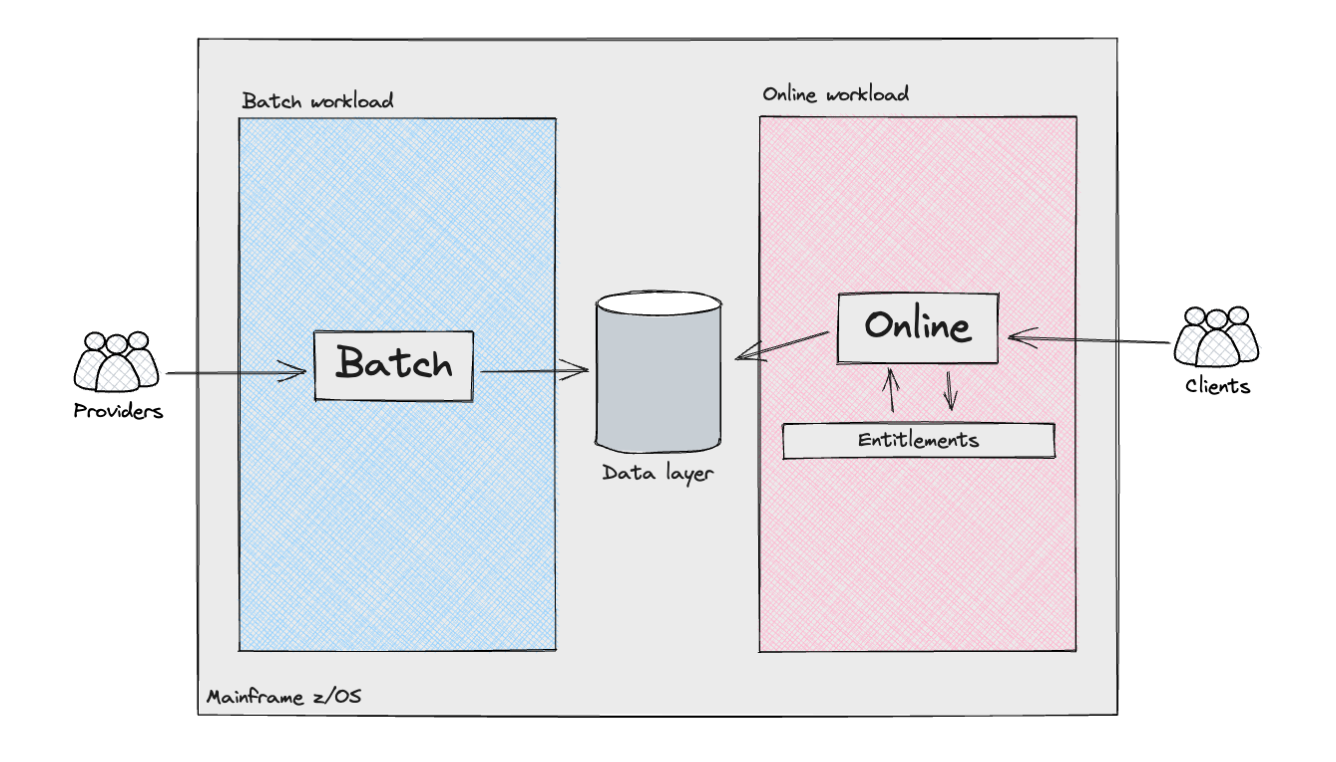

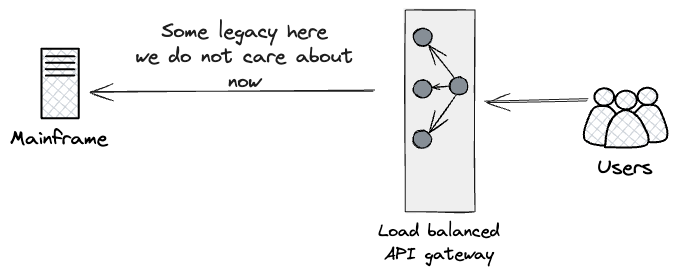

High-level view of Consumer Subsystem

The following diagram shows, from a high-level perspective, the various components and actors in the Consumer Subsystem.

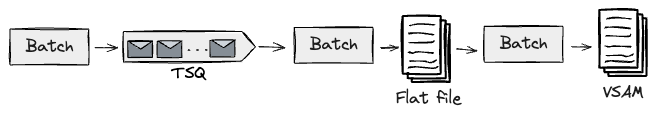

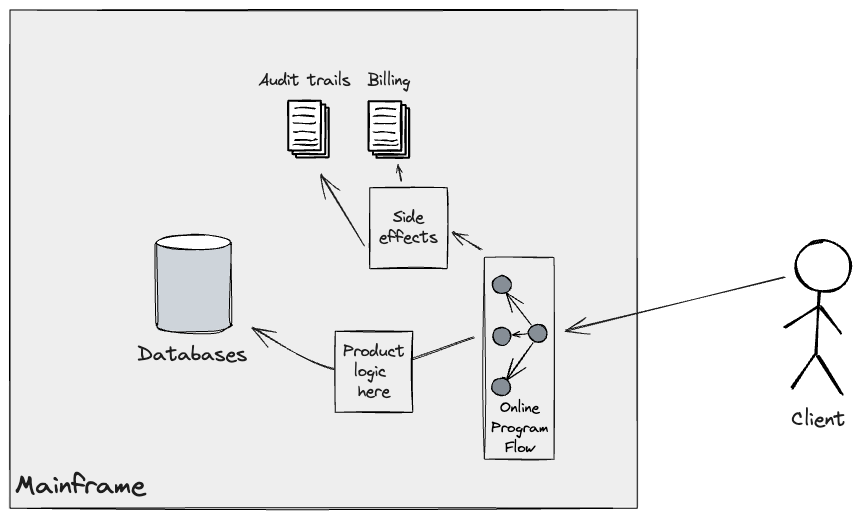

The Mainframe supported two distinct types of workloads: batch processing and, for the product API layers, online transactions. The batch workloads resembled what is commonly referred to as a data pipeline. They involved the ingestion of semi-structured data from external providers or sources, or from other internal Mainframe systems, followed by data cleansing and modelling to align with the requirements of the Consumer Subsystem. These pipelines incorporated various complexities, including the implementation of the identity-searching logic: in the United Kingdom, unlike the United States with its social security number, there is no universally unique identifier for residents. Consequently, companies operating in UK&I must employ customised algorithms to accurately determine the individual identities associated with that data.

The online workload also presented significant complexities. The orchestration of API requests was managed by multiple internally developed frameworks, which determined the program execution flow through lookups in datastores, alongside handling conditional branches by analysing the output of the code. We should not overlook the level of customisation this framework applied for each customer. For example, some flows were orchestrated with ad-hoc configuration, catering for implementation details or specific needs of the systems interacting with our client’s online products. These configurations were exceptional at first, but they likely became the norm over time as our client augmented its online offerings.

This was implemented through an Entitlements engine which operated across layers to ensure that customers accessing products and underlying data were authenticated and authorised to retrieve either raw or aggregated data, which would then be exposed to them through an API response.

Incremental Legacy Displacement: Principles, Benefits, and Considerations

Considering the scope, risks, and complexity of the Consumer Subsystem, we believed the following principles would be tightly linked with our succeeding with the programme:

- Early Risk Reduction: With engineering starting from the beginning, the implementation of a “Fail-Fast” approach would help us identify potential pitfalls and uncertainties early, thus preventing delays from a programme delivery standpoint. These were:
  - Outcome Parity: The client emphasised the importance of upholding outcome parity between the existing legacy system and the new system (note that this concept differs from feature parity). In the client’s legacy system, various attributes were generated for each consumer, and given the strict industry regulations, maintaining continuity was essential to ensure contractual compliance. We needed to proactively identify discrepancies in data early on, promptly address or explain them, and establish trust and confidence with both our client and their respective customers at an early stage.
  - Cross-functional requirements: The Mainframe is a highly performant machine, and there were uncertainties about whether a solution on the Cloud would satisfy the cross-functional requirements.
- Deliver Value Early: Collaboration with the client would ensure we could identify a subset of the most critical business capabilities that we could deliver early, ensuring we could break the system apart into smaller increments. These represented thin slices of the overall system. Our goal was to build upon these slices iteratively and frequently, helping us accelerate our overall learning in the domain. Furthermore, working through a thin slice helps reduce the cognitive load required from the team, thus preventing analysis paralysis and ensuring value would be consistently delivered. To achieve this, a platform built around the Mainframe that provides better control over clients’ migration strategies plays a vital role. Using patterns such as dark launching would place us in the driver’s seat for a smooth transition to the Cloud. Our goal was to achieve a silent migration process, where customers would transition between systems without any noticeable impact. This could only be possible through comprehensive comparison testing and continuous monitoring of outputs from both systems.

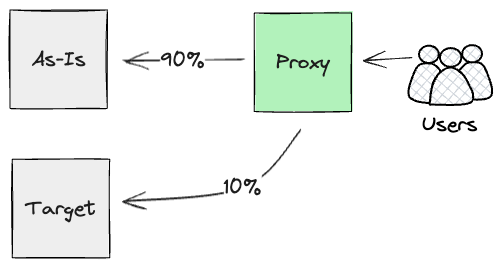

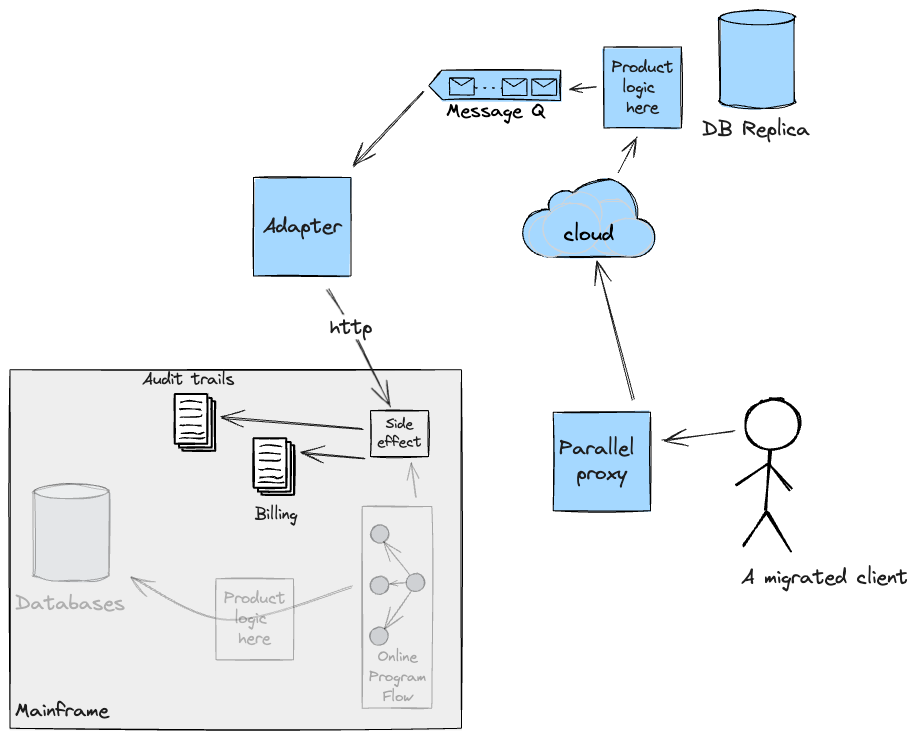

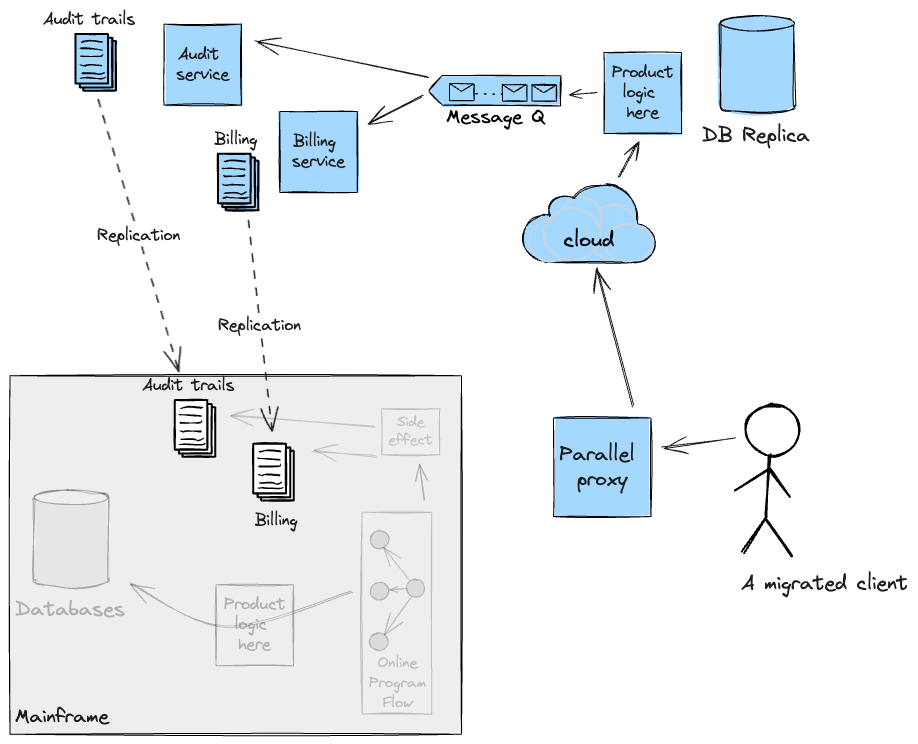

Considering the abovementioned concepts and requirements, we chose to implement an

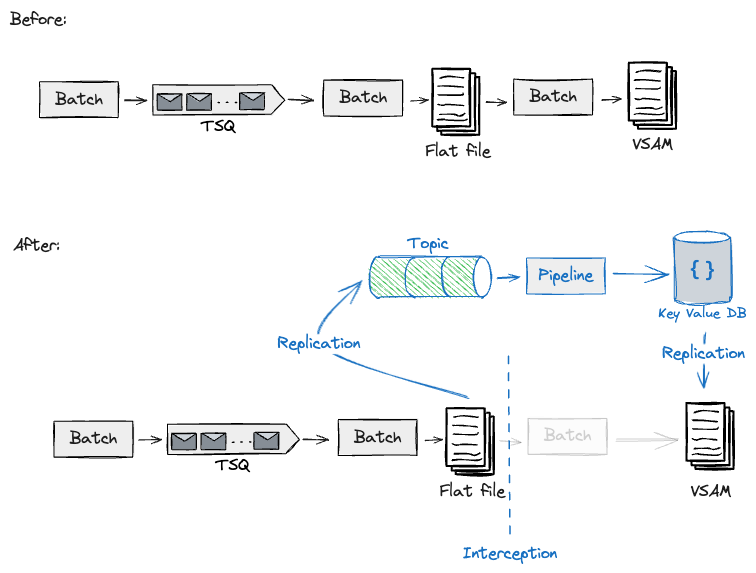

What drives organisational transformation? As companies navigate an ever-evolving landscape, they must confront the reality of outdated legacy systems and processes. That’s where the Incremental Legacy Displacement (ILD) method comes in – a carefully crafted approach that empowers businesses to simultaneously upgrade their technology stack while preserving the continuity of their operations.

By leveraging ILD alongside its trusted companion, the Twin method, organisations can seamlessly integrate new solutions with existing infrastructure, ensuring minimal disruption and maximum ROI. This potent combination enables companies to accelerate their digital transformation journey, ultimately securing a competitive edge in today’s fast-paced market.

As the pace of technological change quickens, the need for an effective ILD strategy has become more pressing than ever. By adopting this incremental approach, businesses can confidently migrate from legacy systems to modernised platforms without sacrificing operational stability or risking catastrophic data loss.

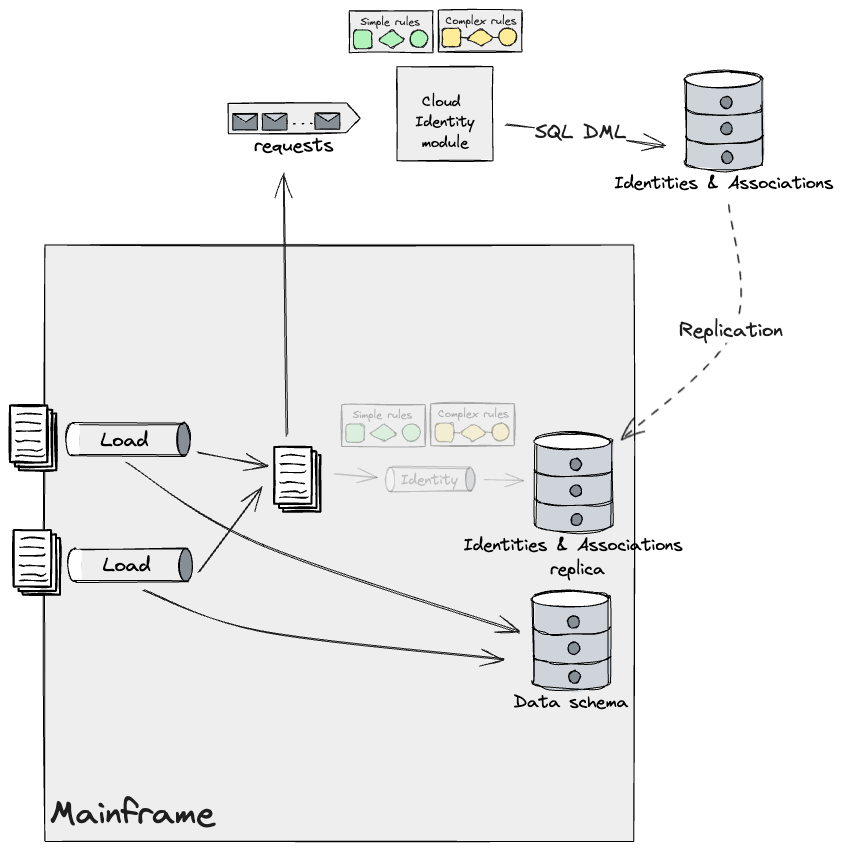

Run. As seamlessly as possible, each component of the system underwent meticulous reconstruction.

Cloud, we planned to deploy both the brand-new and as-is systems concurrently.

Run identical computational tasks in parallel to significantly improve processing efficiency and speed up results. This enables extraction of individual elements.

programs’ outputs are compared to determine whether they are identical or, at a minimum, within a tolerable margin of error.

acceptable tolerance. On this context, we outlined Incremental Twin

Run Utilizing an architecture that seamlessly integrates and abstracts disparate components enables granular control over the slice-by-slice deployment of modularized functionalities.

Freed from legacy constraints, organizations can now focus on realizing strategic goals by leveraging the full potential of their existing applications.

to run simultaneously with speed and deliver value effectively.

We resolved to embark on this architectural project with a focus on achieving stability.

amidst cultivating value, identifying and navigating potential risks promptly,

ensuring precise parity in the outcome, and maintaining seamless continuity throughout our

Throughout the entire duration of the program, a dedicated shopper.
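The comparison step of a parallel run (same inputs to both systems, outputs checked for equality or an acceptable tolerance) can be sketched as follows. This is only an illustration under stated assumptions: the record layout (`{record_key: {field: value}}`), the `Mismatch` type and the use of a relative tolerance for numeric fields are all hypothetical and not taken from the programme.

```python
from dataclasses import dataclass
from math import isclose


@dataclass(frozen=True)
class Mismatch:
    key: str
    field: str
    legacy: object
    target: object


def compare_outputs(legacy, target, rel_tol=0.0):
    """Compare two {record_key: {field: value}} outputs from a parallel run.

    Numeric fields may differ by up to rel_tol (relative); everything else
    must match exactly. Missing records or fields are reported as mismatches.
    """
    mismatches = []
    for key in sorted(set(legacy) | set(target)):
        old, new = legacy.get(key, {}), target.get(key, {})
        for field in sorted(set(old) | set(new)):
            a, b = old.get(field), new.get(field)
            both_numeric = all(
                isinstance(v, (int, float)) and not isinstance(v, bool)
                for v in (a, b)
            )
            same = isclose(a, b, rel_tol=rel_tol) if both_numeric else a == b
            if not same:
                mismatches.append(Mismatch(key, field, a, b))
    return mismatches


# Hypothetical outputs from the as-is and the rebuilt system.
legacy_out = {"c1": {"score": 100.0, "band": "A"}, "c2": {"score": 50.0, "band": "B"}}
target_out = {"c1": {"score": 100.4, "band": "A"}, "c2": {"score": 50.0, "band": "C"}}
diffs = compare_outputs(legacy_out, target_out, rel_tol=0.01)
```

With a 1% tolerance only the `band` difference on `c2` is reported; with the default tolerance of zero the `score` difference on `c1` is reported as well. Which fields may tolerate a difference at all is a business decision, so in practice the tolerance would more likely be set per field.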

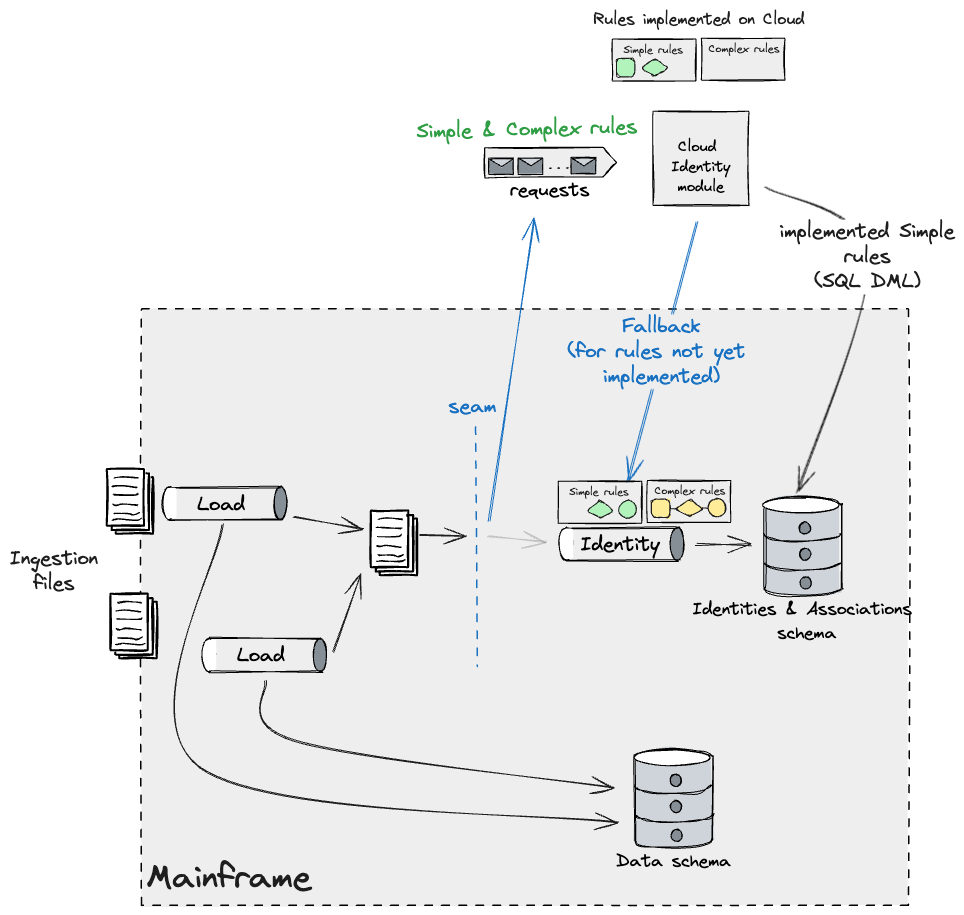

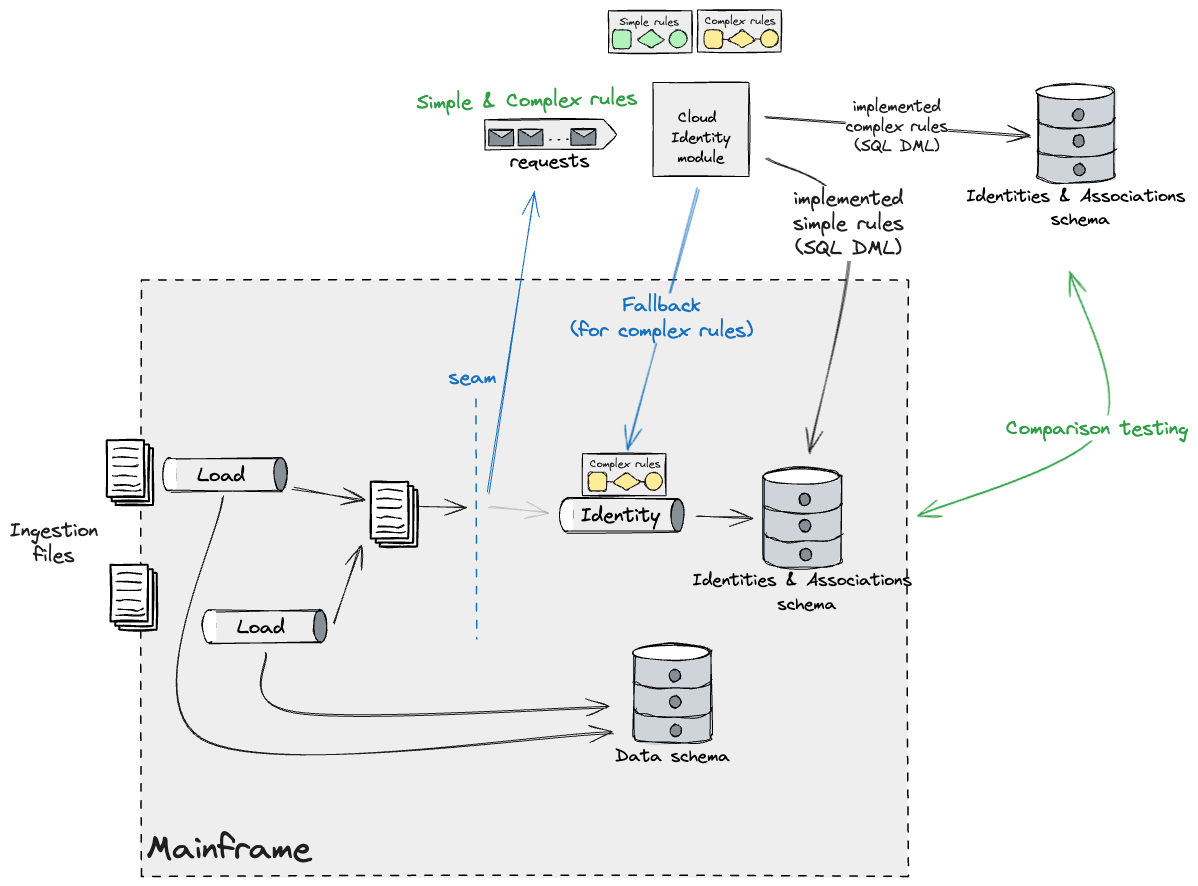

Incremental Legacy Displacement method

To accomplish the offloading of capabilities to our target architecture, the team worked closely with Mainframe SMEs (Subject Matter Experts) and our client’s engineers. This collaboration facilitated a just-enough understanding of the current as-is landscape, in terms of both technical and business capabilities; it helped us design a transitional architecture to connect the existing Mainframe to the Cloud-based system, the latter being developed by other delivery workstreams in the programme.

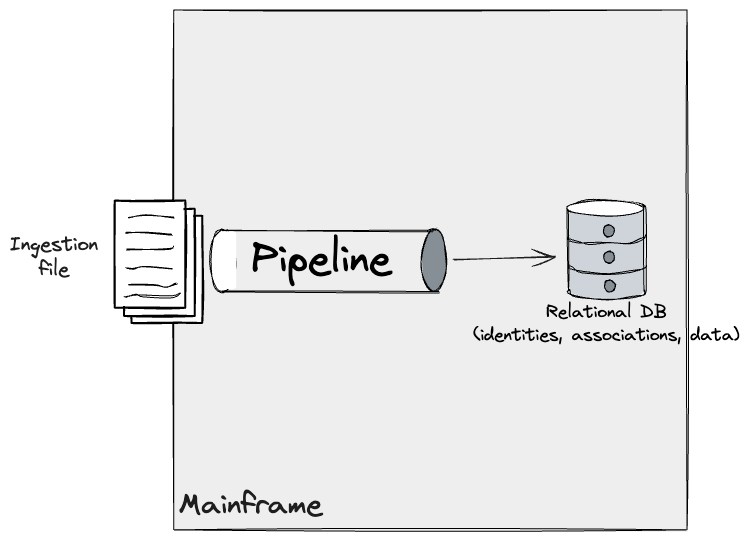

Our approach began with the decomposition of the Consumer Subsystem into specific business and technical domains, including data load, data retrieval & aggregation, and the product layer accessible through external-facing APIs.

Because of our client’s business purpose, we recognised early that we could exploit a major technical boundary to organise our programme. The client’s workload was largely analytical, processing mostly external data to produce insight that was sold on directly to clients. We therefore saw an opportunity to split our transformation programme into two parts, one around data curation, the other around data serving and product use cases, using data interactions as a seam. This was the first high-level seam identified.

Following that, we needed to break the programme down further into smaller increments.

On the data curation side, we identified that the data sets were managed largely independently of each other; that is, while there were upstream and downstream dependencies, there was no entanglement of the datasets during curation, i.e. ingested data sets had a one-to-one mapping to their input files.

We then collaborated closely with SMEs to identify the seams within the technical implementation (laid out below) and to plan how we could deliver a cloud migration for any given data set, eventually to the level where they could be delivered in any order. As long as upstream and downstream dependencies could exchange data with the new cloud system, these workloads could be modernised independently of each other.

On the serving and product side, we found that any given product used 80% of the capabilities and data sets that our client had created. We needed to find a different approach. After investigating the way access was sold to customers, we found that we could take a “customer segment” approach to deliver the work incrementally. This entailed finding an initial subset of customers who had purchased a smaller percentage of the capabilities and data, reducing the scope and time needed to deliver the first increment. Subsequent increments would build on top of prior work, enabling further customer segments to be cut over from the as-is to the target architecture. This required using a different set of seams and transitional architecture, which we discuss below.
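One way to picture this phased, segment-by-segment delivery is a simple greedy ordering: start with the segment that needs the fewest capabilities, then repeatedly pick the segment that needs the fewest additional ones. The sketch below is a hypothetical planning aid, not the method used on the programme; the segment names, the capability names and the greedy rule itself are assumptions made for illustration.

```python
def plan_increments(purchases):
    """Order segments so each increment adds as few new capabilities as possible.

    purchases maps a segment id to the set of capabilities/data sets it bought.
    Returns a list of (segment, newly_required_capabilities) in delivery order.
    """
    built = set()
    remaining = dict(purchases)
    plan = []
    while remaining:
        # Pick the segment needing the fewest not-yet-built capabilities;
        # ties are broken by segment id so the result is deterministic.
        segment = min(remaining, key=lambda s: (len(remaining[s] - built), s))
        new_caps = remaining.pop(segment) - built
        plan.append((segment, sorted(new_caps)))
        built |= new_caps
    return plan


# Hypothetical segments and the capabilities each one has purchased.
purchases = {
    "segment-a": {"search", "score", "alerts"},
    "segment-b": {"search"},
    "segment-c": {"search", "score"},
}
plan = plan_increments(purchases)
```

Here `segment-b` comes first because it needs only `search`, and each later segment adds one further capability on top of the work already delivered. A real plan would also weigh business value and risk, which this ordering ignores.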

Effectively, we ran a thorough analysis of the components that, from a business perspective, functioned as a cohesive whole but were built as distinct elements that could be migrated independently to the Cloud, and laid this out as a programme of sequenced increments.

Seams

Our transitional architecture was mostly influenced by the legacy seams we could uncover within the Mainframe. You can think of them as the junction points where code, programs, or modules meet. In a legacy system, they may have been intentionally designed at strategic places for better modularity, extensibility, and maintainability. If this is the case, they will likely stand out throughout the code, although when a system has been under development for a number of decades, these seams tend to hide themselves amongst the complexity of the code. Seams are particularly valuable because they can be employed strategically to alter the behaviour of applications, for example to intercept data flows within the Mainframe, allowing capabilities to be offloaded to a new system.

Determining technical seams and valuable delivery increments was a symbiotic process; possibilities in the technical area fed the options that we could use to plan increments, which in turn drove the transitional architecture needed to support the programme. Here, we step a level lower in technical detail to discuss the solutions we planned and designed to enable Incremental Legacy Displacement for our client. It is important to note that these were continuously refined throughout our engagement as we acquired more knowledge; some went as far as being deployed to test environments, whilst others were spikes. As we adopt this approach on other large-scale Mainframe modernisation programmes, these approaches will be further refined with our most up-to-date hands-on experience.

External interfaces

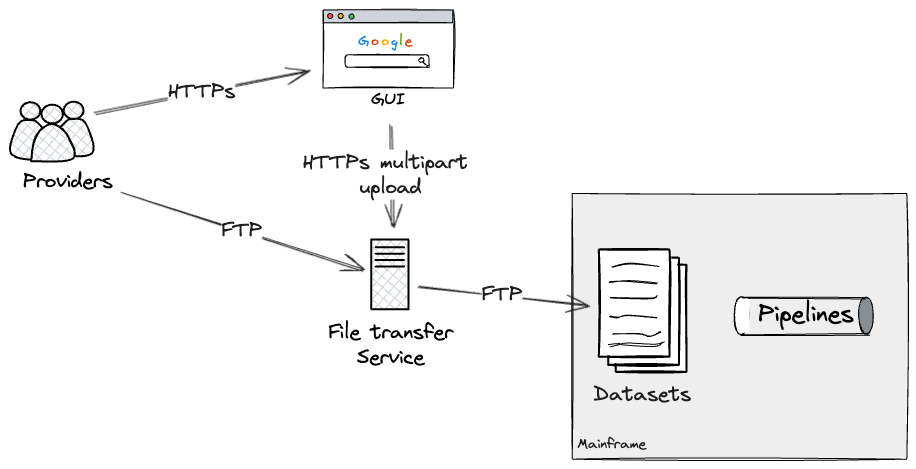

We examined the external interfaces exposed by the Mainframe to data Providers and our client’s Customers. We could intercept traffic at these integration points to allow the transition of external-facing workloads to the Cloud, so that the migration would be silent from their perspective. There were two types of interfaces into the Mainframe: a file-based transfer for Providers to supply data to our client, and a web-based set of APIs for Customers to interact with the product layer.

Batch input as seam

The first external seam that we found was the file transfer service.

Providers could transfer files containing data in a semi-structured format via two routes: a web-based GUI (Graphical User Interface) for file uploads, which interacted with the underlying file transfer service, or a file transfer made to the service directly for programmatic access.

The file transfer service determined, on a per-provider and per-file basis, which datasets on the Mainframe should be updated. These would in turn execute the relevant pipelines through dataset triggers, which were configured on the batch job scheduler.

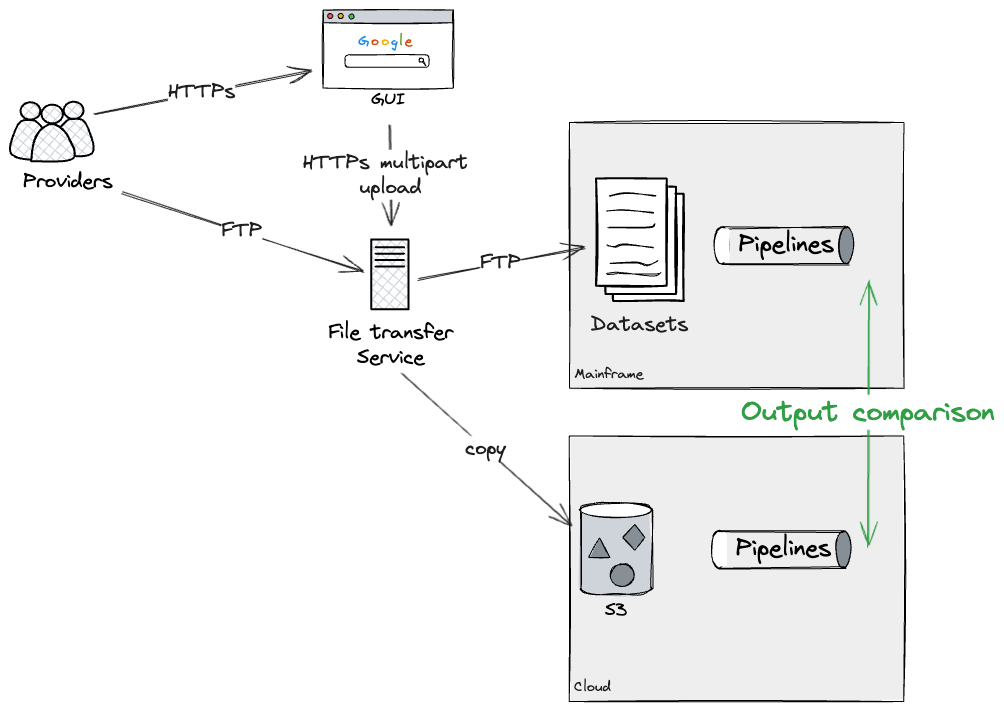

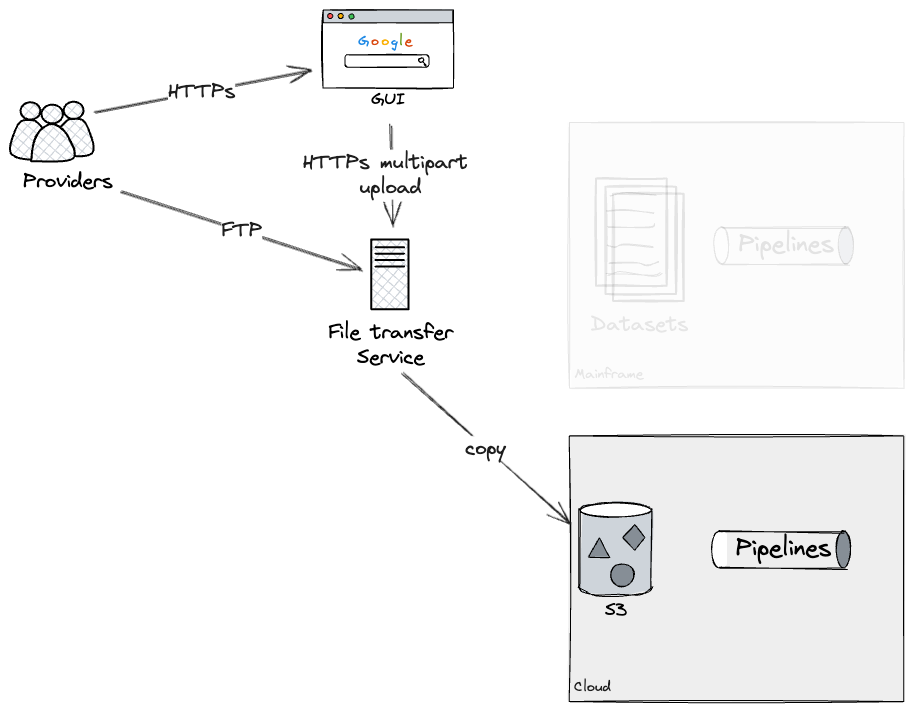

Assuming we could rebuild each pipeline as a whole on the Cloud (later we will look at breaking larger pipelines down into workable chunks), our approach was to build an individual pipeline on the Cloud and dual run it with the Mainframe to verify that both were producing the same outputs. In our case, this was possible by applying additional configuration to the file transfer service, which forked uploads to both the Mainframe and the Cloud. We were able to test this approach using a production-like file transfer service, but with dummy data, running on test environments.

This would allow us to dual run each pipeline on both the Cloud and the Mainframe, for as long as required, to gain confidence that there were no discrepancies. Eventually, our approach would have been to apply an additional configuration to the file transfer service that prevented further updates to the Mainframe datasets, thereby leaving the as-is pipelines deprecated. We did not get to test this last step ourselves, as we did not complete the rebuild of a pipeline end to end, but our technical SMEs were familiar with the configurations required on the file transfer service to effectively deprecate a Mainframe pipeline.
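The three stages a pipeline passes through at this seam (Mainframe only, uploads forked to both sides, Cloud only) can be modelled as a small routing table keyed by provider and file type. The sketch below is illustrative: the real file transfer service is configured through its own mechanism, which the article does not describe, so the `Mode` names, the `(provider, file_type)` key and the destination labels are all assumptions.

```python
from enum import Enum


class Mode(Enum):
    MAINFRAME_ONLY = "mainframe_only"  # as-is behaviour
    FORK = "fork"                      # parallel run: deliver to both sides
    CLOUD_ONLY = "cloud_only"          # Mainframe pipeline deprecated


DESTINATIONS = {
    Mode.MAINFRAME_ONLY: ("mainframe",),
    Mode.FORK: ("mainframe", "cloud"),
    Mode.CLOUD_ONLY: ("cloud",),
}


def destinations_for(routes, provider, file_type):
    """Return where an upload goes; unknown routes keep the as-is behaviour."""
    mode = routes.get((provider, file_type), Mode.MAINFRAME_ONLY)
    return DESTINATIONS[mode]


# Hypothetical configuration: one pipeline in parallel run, one fully cut over.
routes = {
    ("provider-a", "accounts"): Mode.FORK,
    ("provider-b", "accounts"): Mode.CLOUD_ONLY,
}
forked = destinations_for(routes, "provider-a", "accounts")
```

Defaulting unknown routes to the Mainframe keeps every pipeline that has not been explicitly migrated on its existing path, so each pipeline can move through the stages independently of the others.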

API Access as Seam

Furthermore, we adopted a similar strategy for the external-facing APIs, identifying a seam around the pre-existing API Gateway exposed to Customers, which represented their entry point to the Consumer Subsystem.

Drawing on Dual Run, the approach we designed was to put a proxy high up the chain of HTTPS calls, as close to users as possible. We were looking for something that could run both streams of calls in parallel (the as-is Mainframe and the newly built APIs on the Cloud) and report back on their outcomes.
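The core behaviour of such a proxy can be sketched in a few lines: send each request to both back ends, report whether the responses agree, and always answer the caller from the as-is system. This is a minimal sketch under assumptions, not the proxy described above (which was not built in the first year); `call_legacy`, `call_target` and `report` are hypothetical callables standing in for the real HTTPS calls and monitoring.

```python
from concurrent.futures import ThreadPoolExecutor


def parallel_run(request, call_legacy, call_target, report):
    """Send one request to both back ends, report the comparison,
    and always answer the caller with the legacy response."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        legacy_future = pool.submit(call_legacy, request)
        target_future = pool.submit(call_target, request)
        legacy_response = legacy_future.result()
        try:
            target_response = target_future.result()
            error = None
        except Exception as exc:  # the new system must never break callers
            target_response, error = None, repr(exc)
    report({
        "request": request,
        "match": error is None and legacy_response == target_response,
        "legacy": legacy_response,
        "target": target_response,
        "target_error": error,
    })
    return legacy_response
```

Because the caller only ever sees the legacy response, a failure or discrepancy in the new system shows up in the report rather than in front of customers. The sketch deliberately ignores side effects: requests that trigger actions such as billing would need to be suppressed on one side before both back ends could safely receive them.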

Effectively, we were planning to dark launch the new Product layer, to gain early confidence in the artefact through extensive and continuous monitoring of its outputs. We did not prioritise building this proxy in the first year; to exploit its value, we needed to have the majority of functionality rebuilt at the product level. However, our intention was to build it as soon as any meaningful comparison tests could be run at the API layer, as this component would play a key role in orchestrating dark launch comparison tests. Additionally, our analysis highlighted that we needed to watch out for any side effects generated by the Product layer. In our case, the Mainframe produced side effects, such as billing events. As a result, we would have needed to make intrusive Mainframe code changes to prevent duplication and ensure that customers would not get billed twice.

As with the Batch input seam, we could run these requests in parallel for as long as required. Ultimately though, we would use the proxy layer to cut over to the Cloud customer by customer, thereby incrementally reducing the workload executed on the Mainframe.
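The routing decision behind a customer-by-customer cutover is small enough to sketch directly. The following is a hypothetical illustration in the spirit of a canary-style release; the back-end labels and the idea of holding migrated customers in a set are assumptions, not details from the programme.

```python
def choose_backend(customer_id, migrated_customers):
    """Route a request to the Cloud only for customers already cut over."""
    return "cloud" if customer_id in migrated_customers else "mainframe"


def mainframe_share(customers, migrated_customers):
    """Fraction of known customers whose traffic still reaches the Mainframe."""
    if not customers:
        return 0.0
    still_legacy = [c for c in customers if c not in migrated_customers]
    return len(still_legacy) / len(customers)


# Hypothetical customer base with one customer cut over so far.
customers = ["cust-1", "cust-2", "cust-3", "cust-4"]
migrated = {"cust-1"}
share = mainframe_share(customers, migrated)
```

Keeping the set of migrated customers as configuration means a cutover can be reversed for a single customer by removing one entry, without touching anyone else's traffic.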

Internal interfaces

Following that, we conducted an analysis of the internal components within the Mainframe to pinpoint the specific seams we could leverage to migrate more granular capabilities to the Cloud.

Coarse Seam: Data interactions as a Seam

One of the primary areas of focus was the pervasive database access across programs. We started our analysis by identifying the programs that were writing to, reading from, or doing both with the database. Treating the database itself as a seam allowed us to break apart flows that relied on it being the connection between programs.

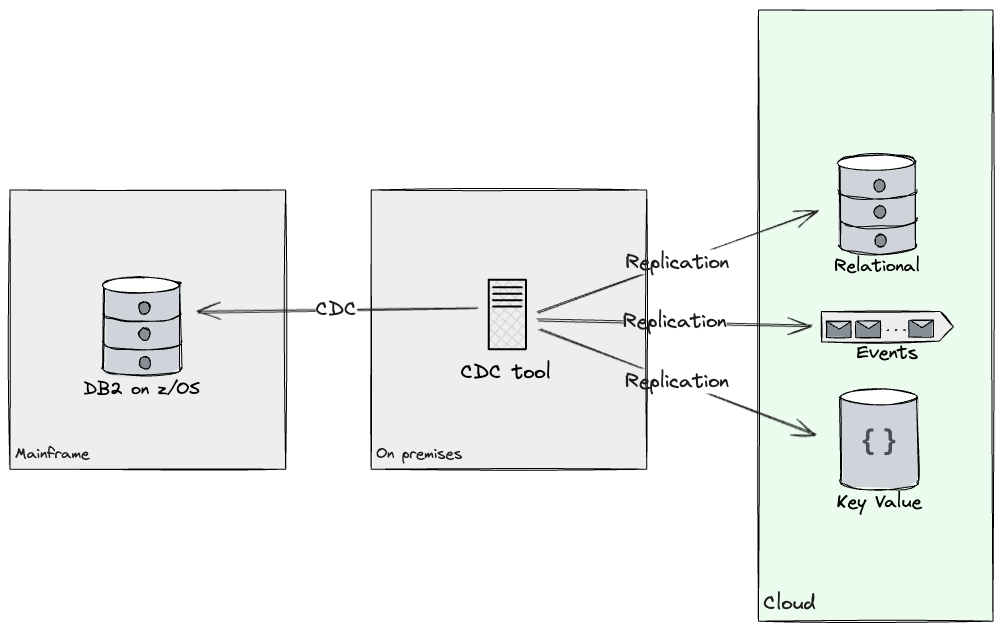

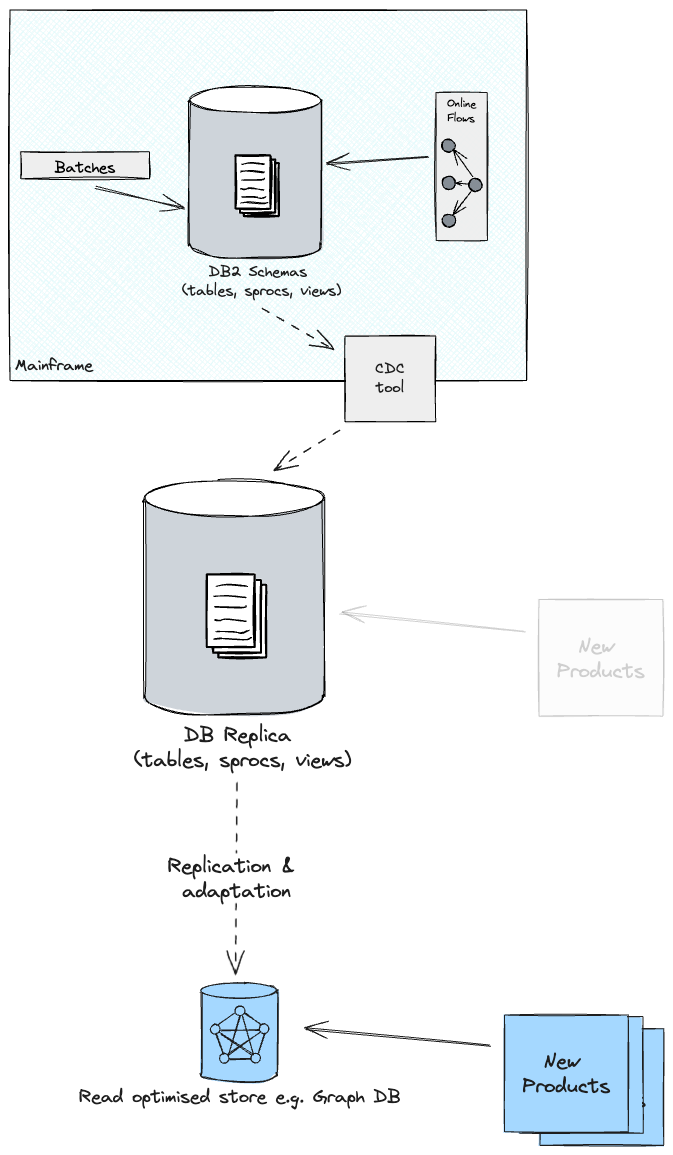

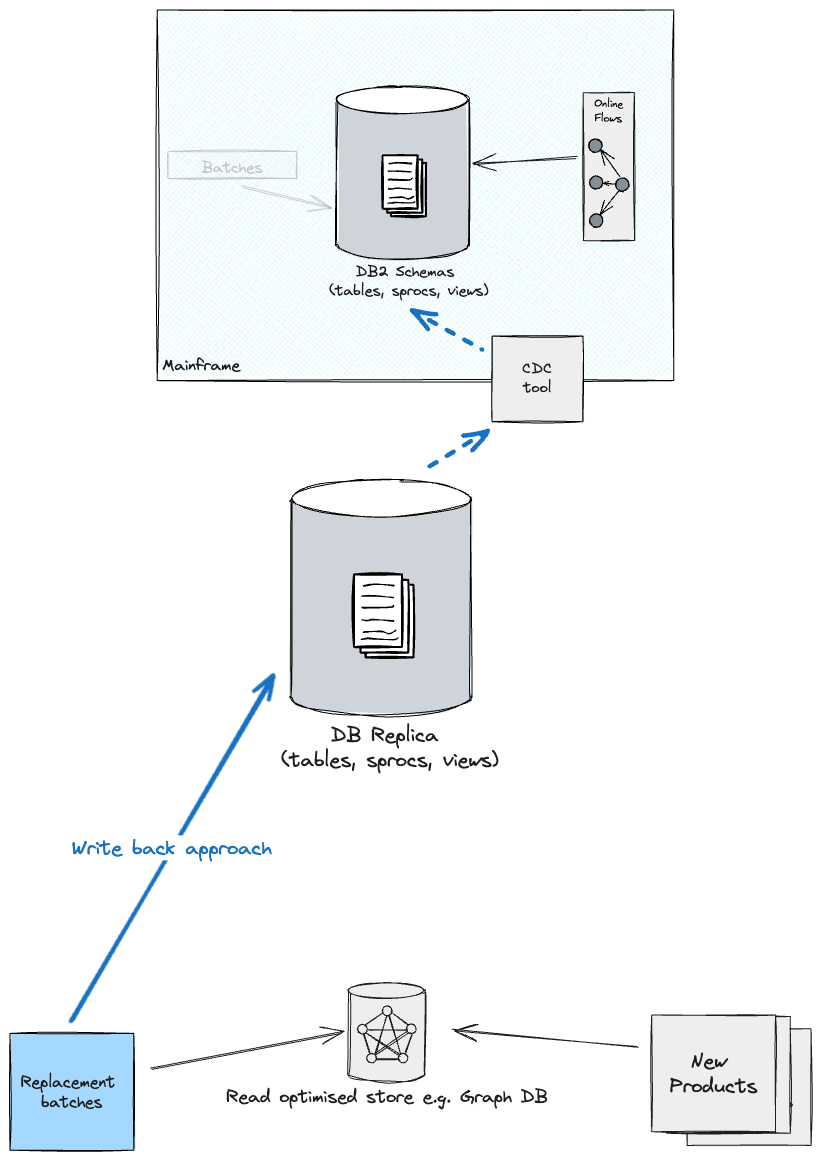

Database Readers

Enhancing Database Readers for Seamless Integration with New Information APIs?

What are the primary requirements for seamless integration between the Mainframe and the Cloud systems in the Cloud setting?

entry to the identical information. The database queries executed by

The product we’ve selected as our top contender for upgrading the core

buyer phase; collaborated closely with customer segments to deliver actionable insights.

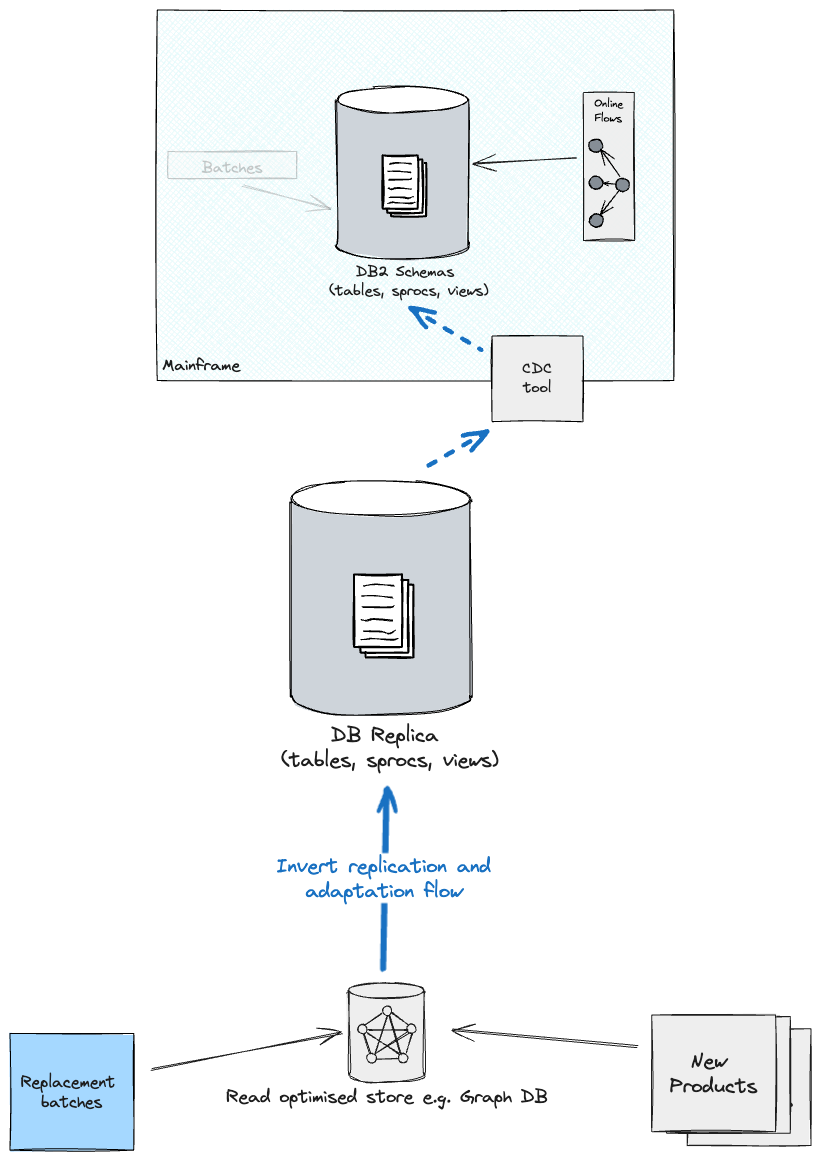

replication resolution. The data was successfully propagated to the cloud infrastructure using Change Data Capture (CDC).

Methods for synchronizing sources with targets using Information Seize (IS) from the Centers for Disease Control and Prevention (CDC). By

Utilizing CDC’s software capabilities, our team was able to successfully replicate the essential results.

Real-time insights into subset information are now readily available across goal-oriented shops.

Cloud. Furthermore, duplicative content provided opportunities to reinvigorate our

Mannequins, as our shopper would now have access to shops that weren’t previously accessible to them through the digital channels.

solely relational (e.g. What are some key occasions for which doc shops are worth considering?

had been thought-about). Criteria resembling entry patterns, assessments of understanding, and question complexity all contribute to the overall effectiveness of a quiz.

and schema flexibility enabled determining, for each discrete subset of data, what

tech stack to duplicate into. During our initial year of operation, we successfully built

Replication streams from DB2 to each of its corresponding Kafka and Postgres instances.

Capabilities are applied through software applications at this stage.

studying from the existing database, it’s entirely possible that a new one could be built and subsequently moved over.

the Cloud, incrementally.
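To make the replication idea concrete, the sketch below applies a stream of change events to a target store. It is a simplified illustration, not the behaviour of any particular CDC tool: the event shape (`op`, `key`, `row`), the assumption that updates carry the full row, and the in-memory dictionary standing in for a target store are all hypothetical.

```python
def apply_change(store, event):
    """Apply one change event to an in-memory replica keyed by primary key.

    event is {"op": "insert" | "update" | "delete", "key": ..., "row": {...}}.
    Applying the same event twice leaves the replica unchanged (idempotent),
    which matters when a stream redelivers messages.
    """
    op, key = event["op"], event["key"]
    if op in ("insert", "update"):
        store[key] = dict(event["row"])  # upsert: last write wins
    elif op == "delete":
        store.pop(key, None)             # deleting a missing row is a no-op
    else:
        raise ValueError(f"unknown operation: {op!r}")
    return store


# Hypothetical change stream captured from a source table.
events = [
    {"op": "insert", "key": 1, "row": {"name": "Ada", "status": "new"}},
    {"op": "insert", "key": 2, "row": {"name": "Bob", "status": "new"}},
    {"op": "update", "key": 1, "row": {"name": "Ada", "status": "active"}},
    {"op": "delete", "key": 2},
]
replica = {}
for change in events:
    apply_change(replica, change)
```

After the four events the replica holds only the updated row for key `1`. Treating inserts and updates as upserts keeps the target convergent even if events are redelivered, provided they arrive in order for any given key.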

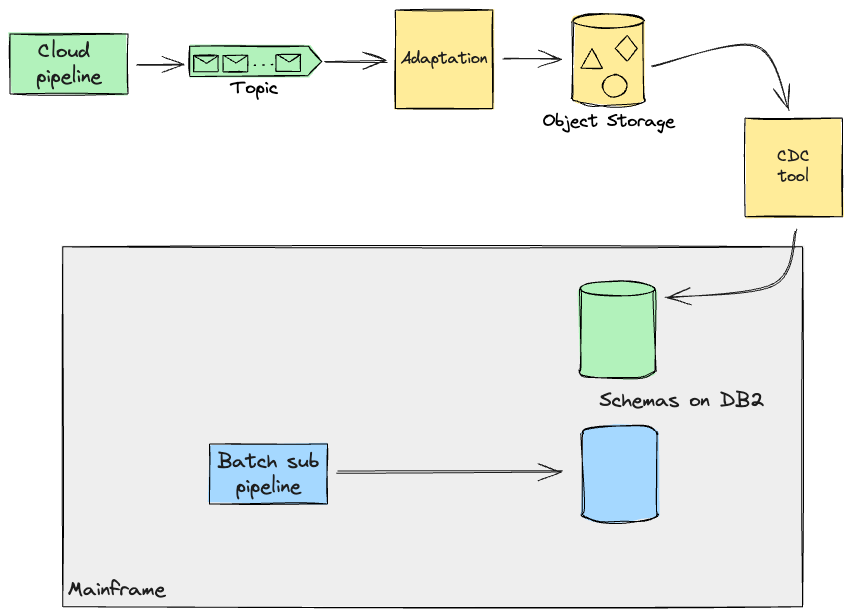

Database Writers

With regard to database writers, which were mostly made up of batch workloads running on the Mainframe, after careful analysis of the data flowing through and out of them, we were able to identify separate domains that could execute independently of each other (running as part of the same flow was just an implementation detail we could change).

Working with such atomic units, and around their respective seams, allowed other workstreams to start rebuilding some of these pipelines on the Cloud and comparing the outputs with the Mainframe.

In addition to building the transitional architecture, our team was responsible for providing a range of services that were used by other workstreams to engineer their data pipelines and products. In this specific case, we built batch jobs on the Mainframe, executed programmatically by dropping a file in the file transfer service, that would extract and format the journals that those pipelines were producing on the Mainframe, thus giving our colleagues tight feedback loops on their work through automated comparison testing. After ensuring that results remained the same, our approach for the future would have been to enable other teams to cut over each sub-pipeline one by one.

The artefacts produced by a sub-pipeline may be required on the Mainframe for further processing (e.g. online transactions). Thus, the approach we opted for, once these pipelines were complete and running on the Cloud, was to use Legacy Mimic and replicate data back to the Mainframe for as long as the capabilities dependent on this data had not moved to the Cloud too. To achieve this, we were considering the same CDC tool used for replication to the Cloud. In this scenario, records processed on the Cloud would be stored as events on a stream. Having the Mainframe consume this stream directly seemed complex, both to build and to test for regressions, and it demanded a more invasive approach to the legacy code. To mitigate this risk, we designed an adaptation layer that would transform the data back into the format the Mainframe could work with, as if that data had been produced by the Mainframe itself. These transformation functions, if straightforward, may be supported by your chosen replication tool, but in our case we assumed that custom software would need to be built alongside the replication tool to cater for additional requirements from the Cloud. This is a common scenario, in which businesses take the opportunity that comes from rebuilding existing processing from scratch to improve it (e.g. by making it more efficient).
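To make the adaptation layer idea more concrete, the following Python sketch turns a Cloud event back into a fixed-width record of the kind a batch job might read. The field names and widths are hypothetical; a real layout would be derived from the relevant COBOL copybook, and this is not the software that was designed on the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    width: int
    numeric: bool = False  # numeric fields are right-aligned and zero-padded


# Hypothetical record layout, used for illustration only.
LAYOUT = [
    FieldSpec("account_id", 10),
    FieldSpec("surname", 20),
    FieldSpec("balance_pence", 9, numeric=True),
]


def to_fixed_width(event: dict, layout=LAYOUT) -> str:
    """Render one event as a fixed-width line, failing loudly rather than truncating."""
    parts = []
    for spec in layout:
        raw = str(event[spec.name])
        if spec.numeric and not raw.isdigit():
            raise ValueError(f"{spec.name!r} must be a non-negative integer: {raw!r}")
        if len(raw) > spec.width:
            raise ValueError(f"{spec.name!r} exceeds {spec.width} characters: {raw!r}")
        parts.append(raw.rjust(spec.width, "0") if spec.numeric else raw.ljust(spec.width))
    return "".join(parts)
```

Rejecting oversized or malformed values, instead of silently truncating them, keeps the Mainframe from receiving data that merely looks valid. Character encoding and signed or packed numeric formats are deliberately left out of this sketch.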

In summary, working closely with SMEs from the client side helped us challenge the existing implementation of batch workloads on the Mainframe and work out alternative discrete pipelines with clearer data boundaries. Note that the pipelines we were dealing with did not overlap on the same records, due to the boundaries we had defined with the SMEs. In a later section, we will examine more complex cases that we have had to deal with.

Coarse Seam: Batch Pipeline Step Handoff

Likely, the database won't be the only seam you can work with. In our case, we had data pipelines that, in addition to persisting their outputs on the database, were serving curated data to downstream pipelines for further processing.

For these scenarios, we first identified the handshakes between pipelines. These usually consist of state persisted in flat or VSAM (Virtual Storage Access Method) files, or potentially TSQs (Temporary Storage Queues). The following shows these hand-offs between pipeline steps.

As an example, we were looking at designs for migrating a downstream pipeline that read a curated flat file stored upstream. This downstream pipeline on the Mainframe produced a VSAM file that would be queried by online transactions. As we were planning to build this event-driven pipeline on the Cloud, we chose to use the CDC tool to get this data off the Mainframe, where it would in turn be converted into a stream of events for the Cloud data pipelines to consume. Similarly to what we reported earlier, our transitional architecture needed to use an Adaptation layer (e.g. schema translation) and the CDC tool to copy the artefacts produced on the Cloud back to the Mainframe.

Using the handshakes we had previously identified, we were able to build and test this interception for one exemplary pipeline, and to design further migrations of upstream and downstream pipelines to the Cloud with the same approach, using Legacy Mimic to feed the Mainframe with the data necessary to proceed with downstream processing. Adjacent to these handshakes, we were making non-trivial changes to the Mainframe to allow data to be extracted and fed back. However, we were still minimising risks by reusing the same batch workloads at the core, with different job triggers at the edges.

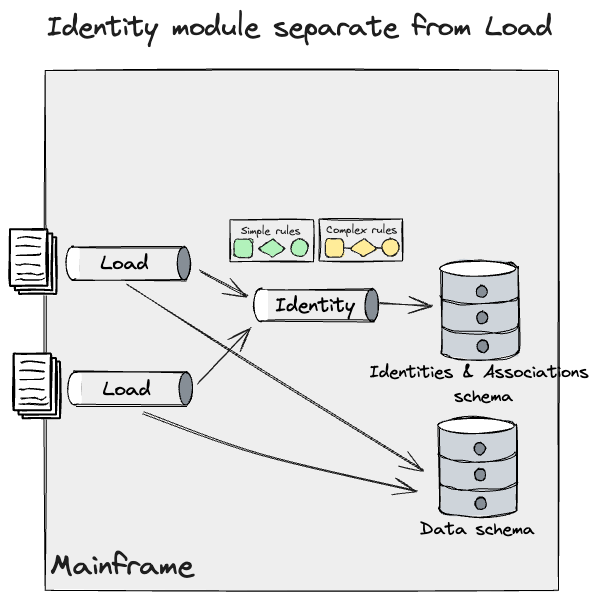

Granular Seam: Data Characteristic

In some cases, the above approaches for finding internal seams and defining transition strategies do not suffice. This happened on our project due to the size of the workload we were looking to cut over, which translated into higher risks for the business. In one of our scenarios, we were working with a discrete module feeding off the data load pipelines: Identity curation.

Consumer Identity curation was a complex space, and in our case it was a differentiator for our client; thus, they could not afford an outcome from the new system that was less accurate than the Mainframe for the UK&I population. To successfully migrate the entire module to the Cloud, we would need to build tens of identity search rules and their required database operations. Therefore, we needed to break this down further to keep changes small and enable frequent delivery to keep risks low.

We worked closely with the SMEs and Engineering teams to identify characteristics in the data and rules, and use them as seams that would allow us to incrementally cut over this module to the Cloud. Upon analysis, we categorised these rules into two distinct groups: Simple and Complex.

Simple rules could run on both systems, provided they consumed different data segments (i.e. separate pipelines upstream); thus they represented an opportunity to further break apart the Identity module space. They represented the majority (circa 70%) of rules triggered during the ingestion of a file. These rules were responsible for establishing an association between an already existing identity and a new data record.

On the other hand, the Complex rules were triggered by cases where a data record indicated the need for an identity change, such as creation, deletion, or update. These rules required careful handling and could not be migrated incrementally. This is because an update to an identity can be triggered by multiple data segments, and operating these rules in both systems in parallel could lead to identity drift and loss of data quality. They required a single system minting identities at any one point in time; thus, we designed for a big bang migration approach.

In our original understanding of the Identity module on the Mainframe, pipelines ingesting data triggered changes on DB2, resulting in an up-to-date view of the identities, data records, and their associations.

Additionally, we identified a discrete Identity module and refined this model to reflect a deeper understanding of the system that we had discovered with the SMEs. This module fed data from multiple data pipelines and applied Simple and Complex rules to DB2.

Now, we could apply the same techniques we wrote about earlier for data pipelines, but we required a more granular and incremental approach for the Identity one.

We planned to tackle the Simple rules that could run on both systems, with the caveat that they operated on different data segments, as we were constrained to having only one system maintaining identity data. We worked on a design that used Batch Pipeline Step Handoff and applied Event Interception to capture and fork the data feeding the Identity pipeline on the Mainframe (temporarily, until we could confirm that no data was lost between system handoffs). This would allow us to take a divide and conquer approach with the files ingested, running a parallel workload on the Cloud that would execute the Simple rules and apply changes to identities on the Mainframe, and to build it incrementally. Many rules fell into the Simple bucket, so we needed a capability in the target Identity module to fall back to the Mainframe in case a rule that was not yet implemented needed to be triggered. This looked like the following:

As new builds of the Cloud Identity module are released, we would see fewer rules belonging to the Simple bucket being applied through the fallback mechanism. Eventually, only the Complex ones would be observable through that leg. As previously mentioned, these needed to be migrated all in one go to minimise the impact of identity drift. We planned to build Complex rules incrementally against a Cloud database replica and validate their outcomes through extensive comparison testing.
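The fallback mechanism described above could be sketched roughly as follows in Python. The `IdentityRuleRouter` class, the rule identifiers and the handler signatures are all hypothetical; the sketch only shows the routing decision, not how a rule is matched to a record or how the Mainframe is actually invoked.

```python
from typing import Callable, Dict, List, Tuple

Record = dict
RuleHandler = Callable[[Record], str]


class IdentityRuleRouter:
    """Run a rule on the Cloud if it has been implemented there, else fall back."""

    def __init__(self, cloud_rules: Dict[str, RuleHandler], mainframe_fallback: RuleHandler):
        self._cloud_rules = cloud_rules
        self._fallback = mainframe_fallback
        # Rules that still go through the fallback leg, kept for observability.
        self.fallback_log: List[str] = []

    def apply(self, rule_id: str, record: Record) -> Tuple[str, str]:
        handler = self._cloud_rules.get(rule_id)
        if handler is None:
            # Rule not yet built on the Cloud: hand the record to the Mainframe.
            self.fallback_log.append(rule_id)
            return "mainframe", self._fallback(record)
        return "cloud", handler(record)
```

Recording which rules still take the fallback leg gives a simple measure of progress: as more Simple rules are implemented on the Cloud, the log should shrink until only the Complex rules remain.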

Once all rules were built, we would release this code and disable the fallback strategy to the Mainframe. Bear in mind that, upon release, the Mainframe Identities and Associations data effectively becomes a replica of the new Primary store managed by the Cloud Identity module. Therefore, replication is needed to keep the Mainframe functioning as is.

As previously mentioned in other sections, our design employed Legacy Mimic and an Anti-Corruption Layer that would translate data from the Mainframe model to the Cloud model and vice versa. This layer consisted of a series of Adapters across the systems, ensuring data would flow out of the Mainframe as a stream for the Cloud to consume using event-driven data pipelines, and back to the Mainframe as flat files to allow existing batch jobs to process them. For simplicity, the diagrams above do not show these Adapters, but they would be implemented each time data flowed across systems, regardless of how granular the seam was. Unfortunately, our work here was mostly analysis and design, and we were not able to take it to the next step and validate our assumptions end to end, apart from running Spikes to ensure that a CDC tool and the file transfer service could be employed to send data in and out of the Mainframe in the required format. The time required to build the scaffolding around the Mainframe, and to reverse engineer the as-is pipelines to gather the requirements, was considerable and beyond the timeline of the first phase of the programme.

Granular Seam: Downstream processing handoff

Similar to the approach employed for upstream pipelines to feed downstream batch workloads, Legacy Mimic Adapters were employed for the migration of the Online flow. In the existing system, a customer API call triggers a series of programs producing side-effects, such as billing and audit trails, which are persisted in appropriate datastores (mostly journals) on the Mainframe.

To successfully transition the online flow to the Cloud incrementally, we needed to ensure these side-effects would either be handled by the new system directly, thus increasing scope on the Cloud, or provide adapters back to the Mainframe to execute and orchestrate the underlying program flows responsible for them. In our case, we opted for the latter, using CICS. The solution we built was tested for functional requirements; cross-functional ones (such as latency and performance) could not be validated, as it proved challenging to obtain production-like Mainframe test environments in the first phase. The following diagram shows what the flow for a migrated customer would look like, according to the logic of our Adapter.

It is worth noting that Adapters were planned to be temporary scaffolding. They would no longer serve a valid purpose once the Cloud was able to handle these side-effects by itself, after which point we planned to replicate the data back to the Mainframe for as long as required for continuity.

Data Replication to enable new product development

Building on the incremental approach above, organisations may have product ideas that are based primarily on analytical or aggregated data derived from the core data held on the Mainframe. These are typically cases where there is less need for up-to-date information, such as reporting use cases or summarising data over trailing periods. In these situations, it is possible to unlock business benefits earlier through the judicious use of data replication.

When done well, this can enable new product development through a relatively smaller investment earlier on, which in turn brings momentum to the modernisation effort.

In our recent project, our client had already embarked on this journey, using a CDC (Change Data Capture) tool to replicate core tables from DB2 to the Cloud.

While this was great in terms of enabling new products to be launched, it wasn't without its downsides.

Unless you take steps to abstract the schema when replicating a database, your new Cloud products will be coupled to the legacy schema as soon as they are built. This will likely hamper any subsequent innovation you may wish to pursue in your target environment, as you now have an additional drag factor on changing the core of the application. This time it is even worse, as you won't want to invest again in changing the new product you have just funded. Therefore, our proposed design consisted of further projections from the replica database into optimised stores and schemas, upon which new products would be built.

This would give us the opportunity to refactor the schema, and at times to move parts of the data model into non-relational stores, which would better handle the query patterns observed with the SMEs.
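As a small illustration of projecting from a replica that mirrors the legacy schema into an optimised schema, consider the Python sketch below. All column names (`CUST_NO`, `FNAME`, `SNAME`, `DOB_YYYYMMDD`, `STAT_CD`) and the target shape are invented for the example; they are assumptions, not the client's actual tables.

```python
from datetime import datetime


def project_customer(replica_row: dict) -> dict:
    """Map one legacy-shaped replica row onto a schema designed for new products.

    New products read only the projected shape, so the legacy column names
    and encodings stay hidden behind this single function.
    """
    name_parts = (replica_row["FNAME"], replica_row["SNAME"])
    return {
        "customer_id": replica_row["CUST_NO"].strip(),
        "full_name": " ".join(p.strip().title() for p in name_parts if p.strip()),
        "date_of_birth": datetime.strptime(replica_row["DOB_YYYYMMDD"], "%Y%m%d").date(),
        "is_active": replica_row["STAT_CD"] == "A",
    }
```

With a projection like this in place, a later change to the legacy schema should only require updating the mapping, rather than every product built on top of the optimised store.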

Upon migration of batch workloads, in order to keep all stores in sync, you may want to consider either a write-back strategy directly to the new Primary (what was previously known as the Replica), which in turn feeds back DB2 on the Mainframe (though there will be higher coupling from the batches to the old schema), or reversing the CDC & Adaptation layer direction, with the Optimised store as the source and the new Primary as the target. In the latter case you will likely need to manage replication separately for each data segment (i.e. one data segment replicates from Replica to Optimised store, another segment the other way around).
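Managing replication separately per data segment can be pictured as a small piece of configuration logic. The Python sketch below is an assumption about how such a plan might be expressed; the segment names and the `Direction` enum are hypothetical and not tied to any particular CDC tool.

```python
from enum import Enum


class Direction(Enum):
    REPLICA_TO_OPTIMISED = "replica -> optimised store"
    OPTIMISED_TO_REPLICA = "optimised store -> replica (new Primary)"


def replication_plan(all_segments, migrated_segments):
    """Choose a replication direction for each data segment.

    Segments whose batch workloads have moved to the Cloud are written in the
    Optimised store first, so they replicate back; the rest keep flowing forward.
    """
    unknown = set(migrated_segments) - set(all_segments)
    if unknown:
        raise ValueError(f"unknown segments: {sorted(unknown)}")
    return {
        segment: (
            Direction.OPTIMISED_TO_REPLICA
            if segment in migrated_segments
            else Direction.REPLICA_TO_OPTIMISED
        )
        for segment in all_segments
    }
```

Keeping the plan explicit per segment makes it harder to end up with the same segment replicating in both directions at once, which would risk the stores overwriting each other.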

Conclusion

There are multiple things to consider when offloading from the Mainframe. Depending on the size of the system that you wish to migrate off the Mainframe, this work can take a considerable amount of time, and Incremental Dual Run costs are non-negligible. How much this will cost depends on various factors, but you cannot expect to save on costs by running two systems in parallel. Thus, the business should look at generating value early to get buy-in from stakeholders and fund a multi-year modernisation programme. We see Incremental Dual Run as an enabler for teams to respond fast to the demands of the business, going hand in hand with Agile and Continuous Delivery practices.

Firstly, you have to understand the overall system landscape and what the entry points to your system are. These interfaces play an essential role, allowing for the migration of external users and applications to the new system you are building. You are free to redesign your external contracts throughout this migration, but doing so will require an adaptation layer between the Mainframe and the Cloud.

Secondly, you have to identify the business capabilities the Mainframe system offers, and identify the seams between the underlying programs implementing them. Being capability-driven helps ensure that you are not building another tangled system, and keeps responsibilities and concerns separate at their appropriate layers. You will find yourself building a series of Adapters that will either expose APIs, consume events, or replicate data back to the Mainframe. This ensures that other systems running on the Mainframe can keep functioning as is. It is best practice to build these Adapters as reusable components, as you can employ them in multiple areas of the system, according to the specific requirements you have.

Thirdly, assuming the capability you are trying to migrate is stateful, you will likely require a replica of the data that the Mainframe has access to. A CDC tool to replicate data can be used here. It is important to understand the cross-functional requirements (CFRs) for data replication: some data may need a fast replication lane to the Cloud, and your selected tool should ideally provide this. There are now many tools and frameworks to consider and investigate for your specific scenario. There is a plethora of CDC tools that can be assessed; for instance, we looked at Qlik Replicate for DB2 tables and Precisely Connect more specifically for VSAM stores.

Cloud Service Providers are also launching new offerings in this area; for instance, Dual Run from Google Cloud recently launched its own proprietary data replication approach.

For a more holistic view on mobilising a team of teams to deliver a programme of work of this scale, please refer to the article by Sophie Holden.

Ultimately, there are other considerations that were only briefly mentioned in this article. Amongst these, the testing strategy will play a role of paramount importance in ensuring you are building the new system right. Automated testing shortens the feedback loop for delivery teams building the target system. Comparison testing ensures both systems exhibit the same behaviour from a technical perspective. These strategies, used in conjunction with synthetic data generation and production data obfuscation techniques, give finer control over the scenarios you intend to trigger and let you validate their outcomes. Last but not least, production comparison testing ensures the system running in Dual Run produces, over time, the same outcome as the legacy one running alone. When needed, results are compared from an external observer's point of view as a minimum, such as a customer interacting with the system. Additionally, we can compare the results from intermediary system components.

We hope that this article brings to life what you would need to consider when embarking on a Mainframe offloading journey. Our involvement was in the very first few months of a multi-year programme, and some of the solutions we have discussed were at a very early stage of inception. Nevertheless, we learnt a great deal from this work and we find these thoughts worth sharing. Breaking down your journey into viable, valuable steps will always require context, but we hope our learnings and approaches can help you get started, so you can take this the extra mile, into production, and enable your own roadmap.