Earlier this summer, my colleague Noelle Walsh published a blog post highlighting our efforts to conserve water in our data center operations, as part of our commitment to achieving carbon negativity, water positivity, zero waste, and biodiversity conservation.

Microsoft designs and builds cloud computing infrastructure that spans the full technology stack, from datacenters to servers to custom silicon. This creates unique opportunities to orchestrate how these elements work together to maximize both performance and efficiency. As part of our commitment to become carbon negative by 2030, we treat optimizing power and energy efficiency as a crucial step, alongside advancing carbon-free electricity and carbon removal.

As AI technology continues to evolve at an unprecedented pace, our focus on sustainability has never been more crucial. We’re committed to harnessing the power of AI for a better future, and that means ensuring its development and deployment have a net positive impact on the environment.

Exploring three areas of opportunity. This post looks at three areas where we are improving efficiency: smarter workload management to make fuller use of hardware, harvesting unused power across our datacenters, and more efficient cooling for IT hardware.

As rapid advancements in AI drive innovation, we now have an opportunity to transform our infrastructure, from datacentres to servers to silicon, prioritizing efficiency and sustainability. To reduce the environmental impact of our services, we’re pioneering sustainable practices throughout every stage of our technology stack, minimizing both the power consumption and carbon footprint of our cloud and AI-based solutions. Long before electrons reach our data centers, our teams focus on optimizing the computational power we can derive from each kilowatt-hour (kWh) of electrical energy consumed.

The sections below highlight how we are advancing the efficiency of our cloud and AI infrastructure. Together they form a holistic approach that uses software, including AI and machine learning, to manage cloud and AI workloads more efficiently.

Can digital transformation unlock a new era of IT efficiency? By streamlining infrastructure and harnessing the power of AI-driven insights, organizations can optimize resource allocation, reduce costs, and accelerate innovation. From datacenter consolidation to server virtualization and chip-level optimization, the possibilities are vast. What if you could predict and prevent outages, automate routine tasks, and gain real-time visibility into your IT operations? The future of IT efficiency is here.

Optimizing hardware resource allocation through efficient workload management strategies.

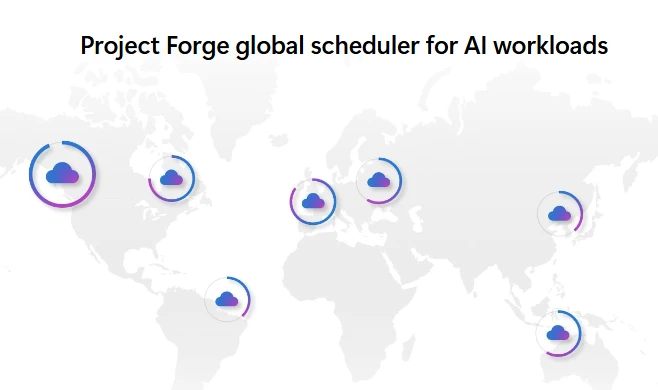

As a software company, we drive efficiency with real-time workload scheduling software that maximizes the utilization of existing hardware while meeting cloud service demand. Demand follows the clock: it rises when people start their workday in one part of the world and falls as evening arrives in another. In many cases, we can align availability with internal resource needs, such as running AI training workloads during off-peak hours on existing hardware that would otherwise sit idle. This also helps us improve power utilization.
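As a rough illustration of the off-peak idea, the sketch below picks regions where a deferrable job could run at a given UTC hour. The region names, UTC offsets, and the 08:00 to 20:00 peak window are hypothetical values chosen for the example, not figures from our scheduling software.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    utc_offset: int       # hours relative to UTC (hypothetical values)
    peak_start: int = 8   # assumed local hour when demand starts to rise
    peak_end: int = 20    # assumed local hour when demand tapers off

    def is_off_peak(self, utc_hour: int) -> bool:
        """Return True when the region's local time falls outside its peak window."""
        local_hour = (utc_hour + self.utc_offset) % 24
        return not (self.peak_start <= local_hour < self.peak_end)


def off_peak_regions(regions, utc_hour):
    """List the regions whose hardware is likely idle at this UTC hour."""
    return [r.name for r in regions if r.is_off_peak(utc_hour)]


# Hypothetical regions used only for this example.
regions = [
    Region("europe", utc_offset=1),
    Region("us-west", utc_offset=-8),
    Region("asia-east", utc_offset=8),
]

noon_candidates = off_peak_regions(regions, utc_hour=12)
midnight_candidates = off_peak_regions(regions, utc_hour=0)
```

A real scheduler would work from measured demand rather than a fixed clock window, but the same principle applies: deferrable work moves to wherever capacity would otherwise sit idle.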

We use software to improve energy efficiency across every layer of the infrastructure stack, from datacenters to servers to silicon.

Historically across the industry, running AI and cloud computing workloads has meant assigning central processing units (CPUs), graphics processing units (GPUs), and other processing resources to each team or workload, which yields a CPU and GPU utilization rate of approximately 50% to 60%. That leaves some CPUs and GPUs with idle capacity that could be put to work on other workloads. To address this and improve workload management, Microsoft has moved its AI training workloads into a single pool managed by a machine learning technology called Project Forge.
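To see why pooling raises utilization, consider a minimal sketch that compares per-team provisioning with a shared pool. The team names and hourly GPU demand figures are made up for illustration and are not measurements from our fleet; the function is a toy model, not how Project Forge computes anything.

```python
def utilization(demand_by_team, pooled):
    """Fraction of provisioned GPU-hours actually used.

    Dedicated: every team is provisioned for its own peak demand.
    Pooled: one shared pool is provisioned for the peak of combined demand.
    """
    series = list(demand_by_team.values())
    hours = len(series[0])
    used = sum(sum(s) for s in series)
    if pooled:
        capacity = max(sum(s[h] for s in series) for h in range(hours))
    else:
        capacity = sum(max(s) for s in series)
    return used / (capacity * hours)


# Hypothetical GPUs needed per time slot; the two teams peak at different times.
demand = {
    "team_a": [8, 6, 2, 2],
    "team_b": [2, 2, 8, 6],
}

dedicated_rate = utilization(demand, pooled=False)  # 36 / 64
pooled_rate = utilization(demand, pooled=True)      # 36 / 40
```

Because the teams peak at different times, the shared pool needs 10 GPUs instead of 16 to serve the same demand, which is where the utilization gain comes from in this toy case.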

Now running in production across Microsoft services, this software uses AI to schedule training and inference workloads, together with checkpointing that saves a snapshot of an application's or model's current state so it can be paused and restarted whenever necessary. Whether running on partner silicon or Microsoft’s custom-designed silicon, Project Forge has consistently raised utilization across Azure to 80% to 90% at scale.
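The checkpointing idea can be sketched with a toy training loop that saves its state after every step and resumes from the last snapshot. The `train` function, the JSON file format, and the `stop_after` parameter are assumptions made for this example; they are not Project Forge interfaces.

```python
import json
import os
import tempfile


def train(total_steps, checkpoint_path, stop_after=None):
    """Toy loop that resumes from a checkpoint and can be paused early."""
    state = {"step": 0, "weight": 0.0}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)  # restore the saved snapshot

    steps_this_run = 0
    while state["step"] < total_steps:
        if stop_after is not None and steps_this_run >= stop_after:
            break  # simulate the scheduler pausing this job
        state["weight"] += 0.5  # stand-in for a real training update
        state["step"] += 1
        steps_this_run += 1

        # Write to a temporary file, then rename, so a crash cannot
        # leave a half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, checkpoint_path)
    return state


with tempfile.TemporaryDirectory() as workdir:
    path = os.path.join(workdir, "checkpoint.json")
    paused = train(10, path, stop_after=4)  # paused after 4 steps
    resumed = train(10, path)               # continues from step 4
```

Because the second call starts from the saved snapshot rather than from step 0, the hardware can be handed to another workload in between without losing work.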

Unlocking untapped power potential across our global datacenter portfolio.

Another way we improve energy efficiency is by placing workloads intelligently across a datacenter so that we can harvest any unused power. Power harvesting refers to practices that let us make the fullest use of the power available to us. For example, if a workload does not draw the full amount of power allocated to it, the excess can be borrowed by or reassigned to other workloads. Since 2019, this work has recovered approximately 800 megawatts (MW) of electricity from existing datacenters, enough to power roughly 2.8 million miles driven by a typical electric vehicle.1

Over the past year, even as customer AI workloads have surged, our rate of power savings has doubled. We continue to apply these best practices across our datacenter fleet to recover and reallocate unused power without compromising performance or reliability.
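A minimal sketch of the power harvesting idea: estimate how much allocated power goes unused, hold back a reserve, and offer the rest to waiting workloads. The field names, the 10% safety margin, and the smallest-first placement rule are all assumptions for illustration, not a description of our production systems.

```python
def harvestable_power(workloads, safety_margin=0.1):
    """Sum the allocated-but-unused kW, keeping a share of each allocation in reserve."""
    total = 0.0
    for w in workloads:
        reserve = w["allocated_kw"] * safety_margin
        unused = w["allocated_kw"] - w["peak_draw_kw"] - reserve
        total += max(0.0, unused)  # a workload near its limit contributes nothing
    return total


def place(pending, available_kw):
    """Greedily place the smallest pending jobs that fit in the harvested power."""
    placed = []
    for job in sorted(pending, key=lambda j: j["needs_kw"]):
        if job["needs_kw"] <= available_kw:
            placed.append(job["name"])
            available_kw -= job["needs_kw"]
    return placed, available_kw


# Hypothetical allocations and measured peaks, in kilowatts.
running = [
    {"name": "a", "allocated_kw": 100, "peak_draw_kw": 60},
    {"name": "b", "allocated_kw": 50, "peak_draw_kw": 48},
    {"name": "c", "allocated_kw": 80, "peak_draw_kw": 40},
]
pending = [
    {"name": "x", "needs_kw": 40},
    {"name": "y", "needs_kw": 25},
    {"name": "z", "needs_kw": 30},
]

harvested = harvestable_power(running)
placed, leftover = place(pending, harvested)
```

The reserve is what keeps harvesting from eating into the headroom a workload needs for its own spikes; in this sketch, workload `b` runs close to its allocation and therefore gives up nothing.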

Driving IT infrastructure efficiency through innovative cooling solutions.

In addition to managing the power that workloads draw, we are focused on reducing the energy and water needed to cool high-performance chips and the servers that house them. Modern AI workloads demand intensive processing, which generates more heat, and liquid-cooled servers require less electricity for thermal management than their air-cooled counterparts. Liquid cooling also lets us get more performance out of our silicon, because chips run more efficiently within an optimal temperature range.

One of the major engineering challenges we faced in rolling out these solutions was retrofitting existing datacenters, which were originally designed for air-cooled servers, to accommodate the latest liquid cooling technology. We are introducing liquid cooling units that sit next to racks of servers and work much like a car radiator. They bring liquid cooling into datacenters built for air cooling, reducing the power needed for cooling while increasing rack density. This in turn increases the computing power we can deliver from each square foot inside our datacenters.

Maximize your cloud and AI capabilities with cutting-edge training and innovative tools.

Stay tuned to learn more on this topic, including how we are working to bring promising efficiency research out of the lab and into commercial operations. You can also read more about how we are advancing sustainability in our Sustainable by Design blog series, starting with [insert links].

For architects, lead developers, and IT decision-makers who want to learn more about cloud and AI efficiency, we recommend exploring the… This documentation set aligns with industry design principles and provides a framework to help customers plan for and meet evolving sustainability requirements and regulations throughout the lifecycle of their IT capabilities, from planning to deployment and ongoing operations.

1 The equivalency takes 800 MW sustained for one hour (800,000 kWh) and assumes an electric vehicle travels approximately 3.5 miles per kilowatt-hour (kWh), which gives about 2.8 million miles.