Think about driving via a tunnel in an autonomous car, however unbeknownst to you, a crash has stopped visitors up forward. Usually, you’d must depend on the automotive in entrance of you to know it is best to begin braking. However what in case your car might see across the automotive forward and apply the brakes even sooner?

Researchers from MIT and Meta have developed a pc imaginative and prescient method that would sometime allow an autonomous car to do exactly that.

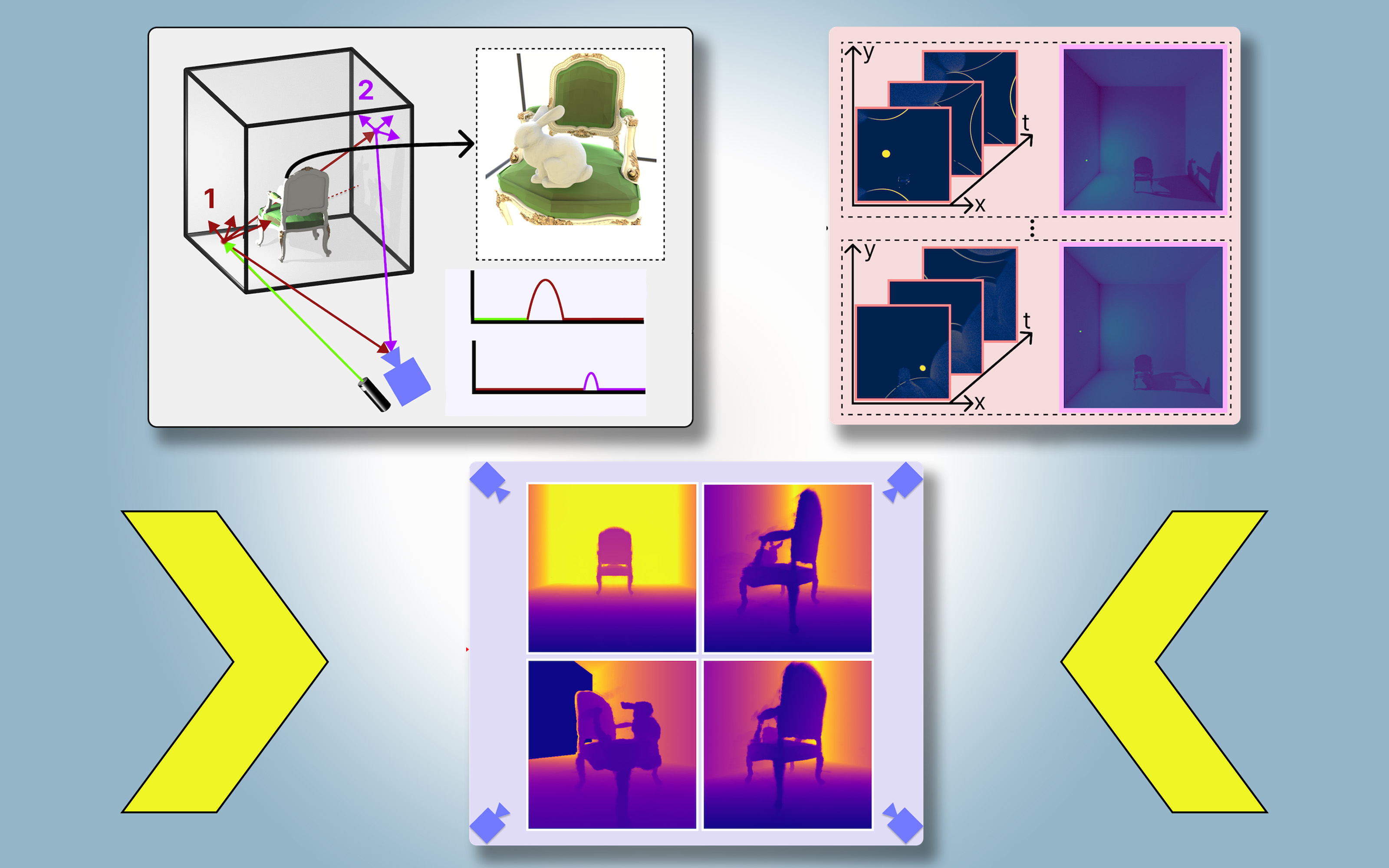

They’ve launched a way that creates bodily correct, 3D fashions of a complete scene, together with areas blocked from view, utilizing pictures from a single digicam place. Their method makes use of shadows to find out what lies in obstructed parts of the scene.

They name their strategy PlatoNeRF, based mostly on Plato’s allegory of the cave, a passage from the Greek thinker’s “Republic” through which prisoners chained in a cave discern the fact of the skin world based mostly on shadows forged on the cave wall.

By combining lidar (gentle detection and ranging) know-how with machine studying, PlatoNeRF can generate extra correct reconstructions of 3D geometry than some current AI strategies. Moreover, PlatoNeRF is best at easily reconstructing scenes the place shadows are arduous to see, resembling these with excessive ambient gentle or darkish backgrounds.

Along with bettering the protection of autonomous autos, PlatoNeRF might make AR/VR headsets extra environment friendly by enabling a consumer to mannequin the geometry of a room with out the necessity to stroll round taking measurements. It might additionally assist warehouse robots discover objects in cluttered environments sooner.

“Our key concept was taking these two issues which were finished in several disciplines earlier than and pulling them collectively — multibounce lidar and machine studying. It seems that if you convey these two collectively, that’s if you discover a whole lot of new alternatives to discover and get the perfect of each worlds,” says Tzofi Klinghoffer, an MIT graduate scholar in media arts and sciences, analysis assistant within the Digicam Tradition Group of the MIT Media Lab, and lead writer of a paper on PlatoNeRF.

Klinghoffer wrote the paper together with his advisor, Ramesh Raskar, affiliate professor of media arts and sciences and chief of the Digicam Tradition Group at MIT; senior writer Rakesh Ranjan, a director of AI analysis at Meta Actuality Labs; in addition to Siddharth Somasundaram, a analysis assistant within the Digicam Tradition Group, and Xiaoyu Xiang, Yuchen Fan, and Christian Richardt at Meta. The analysis shall be introduced on the Convention on Laptop Imaginative and prescient and Sample Recognition.

Shedding gentle on the issue

Reconstructing a full 3D scene from one digicam viewpoint is a fancy downside.

Some machine-learning approaches make use of generative AI fashions that attempt to guess what lies within the occluded areas, however these fashions can hallucinate objects that aren’t actually there. Different approaches try and infer the shapes of hidden objects utilizing shadows in a shade picture, however these strategies can wrestle when shadows are arduous to see.

For PlatoNeRF, the MIT researchers constructed off these approaches utilizing a brand new sensing modality referred to as single-photon lidar. Lidars map a 3D scene by emitting pulses of sunshine and measuring the time it takes that gentle to bounce again to the sensor. As a result of single-photon lidars can detect particular person photons, they supply higher-resolution information.

The researchers use a single-photon lidar to light up a goal level within the scene. Some gentle bounces off that time and returns on to the sensor. Nonetheless, a lot of the gentle scatters and bounces off different objects earlier than returning to the sensor. PlatoNeRF depends on these second bounces of sunshine.

By calculating how lengthy it takes gentle to bounce twice after which return to the lidar sensor, PlatoNeRF captures extra details about the scene, together with depth. The second bounce of sunshine additionally comprises details about shadows.

The system traces the secondary rays of sunshine — people who bounce off the goal level to different factors within the scene — to find out which factors lie in shadow (attributable to an absence of sunshine). Primarily based on the situation of those shadows, PlatoNeRF can infer the geometry of hidden objects.

The lidar sequentially illuminates 16 factors, capturing a number of pictures which are used to reconstruct all the 3D scene.

“Each time we illuminate a degree within the scene, we’re creating new shadows. As a result of now we have all these completely different illumination sources, now we have a whole lot of gentle rays taking pictures round, so we’re carving out the area that’s occluded and lies past the seen eye,” Klinghoffer says.

A successful mixture

Key to PlatoNeRF is the mixture of multibounce lidar with a particular sort of machine-learning mannequin referred to as a neural radiance area (NeRF). A NeRF encodes the geometry of a scene into the weights of a neural community, which provides the mannequin a robust potential to interpolate, or estimate, novel views of a scene.

This potential to interpolate additionally results in extremely correct scene reconstructions when mixed with multibounce lidar, Klinghoffer says.

“The largest problem was determining the way to mix these two issues. We actually had to consider the physics of how gentle is transporting with multibounce lidar and the way to mannequin that with machine studying,” he says.

They in contrast PlatoNeRF to 2 frequent different strategies, one which solely makes use of lidar and the opposite that solely makes use of a NeRF with a shade picture.

They discovered that their technique was in a position to outperform each strategies, particularly when the lidar sensor had decrease decision. This is able to make their strategy extra sensible to deploy in the actual world, the place decrease decision sensors are frequent in industrial gadgets.

“About 15 years in the past, our group invented the primary digicam to ‘see’ round corners, that works by exploiting a number of bounces of sunshine, or ‘echoes of sunshine.’ These strategies used particular lasers and sensors, and used three bounces of sunshine. Since then, lidar know-how has grow to be extra mainstream, that led to our analysis on cameras that may see via fog. This new work makes use of solely two bounces of sunshine, which implies the sign to noise ratio may be very excessive, and 3D reconstruction high quality is spectacular,” Raskar says.

Sooner or later, the researchers wish to attempt monitoring greater than two bounces of sunshine to see how that would enhance scene reconstructions. As well as, they’re involved in making use of extra deep studying strategies and mixing PlatoNeRF with shade picture measurements to seize texture data.

“Whereas digicam pictures of shadows have lengthy been studied as a way to 3D reconstruction, this work revisits the issue within the context of lidar, demonstrating important enhancements within the accuracy of reconstructed hidden geometry. The work exhibits how intelligent algorithms can allow extraordinary capabilities when mixed with extraordinary sensors — together with the lidar methods that many people now carry in our pocket,” says David Lindell, an assistant professor within the Division of Laptop Science on the College of Toronto, who was not concerned with this work.