Filed in . Explore the intricacies of astronomy by delving into the realms of galaxies, black holes, neutron stars, and quasars.

Here are some suggestions I’d like to put on Apple’s radar. With the expanded iPhone customization options in iOS 18, Lock Screen customization has taken a significant leap forward; still, for many users, simply changing the wallpaper remains the main attraction. Since Apple introduced Lock Screen customization, widgets, and controls in iOS, setting a new wallpaper has become increasingly complex.

Things work fine the way they are now. Apple provides a straightforward way for iPhone users to create, edit, and switch between distinct Lock Screens, including ones tied to Focus modes. That’s nice! You can have one Lock Screen tailored to one scenario and another, with a different wallpaper and widgets, for a separate context.

The way it works now could serve as an advanced mode, if Apple had started with a simpler version. The thing is, Apple skipped the easier mode and hasn’t revisited that decision since.

You can customize your Lock Screen clock, widgets, and the default controls. I want my customized setup to be the default starting point whenever I create a new Lock Screen.

As it stands, applying a new wallpaper forces you to recustomize the clock, widgets, and controls each time. That is no big deal if you leave your Lock Screen untouched, but if you have taken the time to personalize it, the repeated setup has a real impact on the overall experience.

I have a preferred Lock Screen configuration, and I always want it applied to whatever wallpaper I choose.

Fortunately, the change is simple enough that it is the first adjustment I make after setting a new wallpaper. With repeated practice, I can do it without looking. Although my car app lacks a proper Lock Screen control, I can still assign it to the slot previously occupied by the camera control.

To customize the Lock Screen: press and hold on the screen, tap Customize, select the Lock Screen, tap the minus icon on the camera control, choose Open App from the Shortcuts controls, open the list of installed apps, scroll down and tap the car app, dismiss the configurator by tapping the Lock Screen, then save.

It bothers me that my car app needs this workaround for a Lock Screen control, but the larger request is simple: it should be possible to change your wallpaper without reconfiguring your entire Lock Screen. Could Apple keep the current, intricate system while reducing the friction? One option would be to let users assign a default configuration for each new Lock Screen.

With a collection of over two dozen mechanical keyboards, I rely daily on my trusty Angry Miao Cyberboard R4. That keyboard isn’t designed for portability, weighing in at 7 pounds, so I keep an assortment of compact Bluetooth keyboards for writing when I’m away from my desk.

These are typically 65% or 75% layouts, and while I generally prefer full-size switches, their bulk can be unwieldy. My curiosity was piqued when Cherry introduced its low-profile mechanical switches; I was thoroughly impressed by the Corsair K100 Air, and Lofree’s Flow remains a top-tier option in this category.

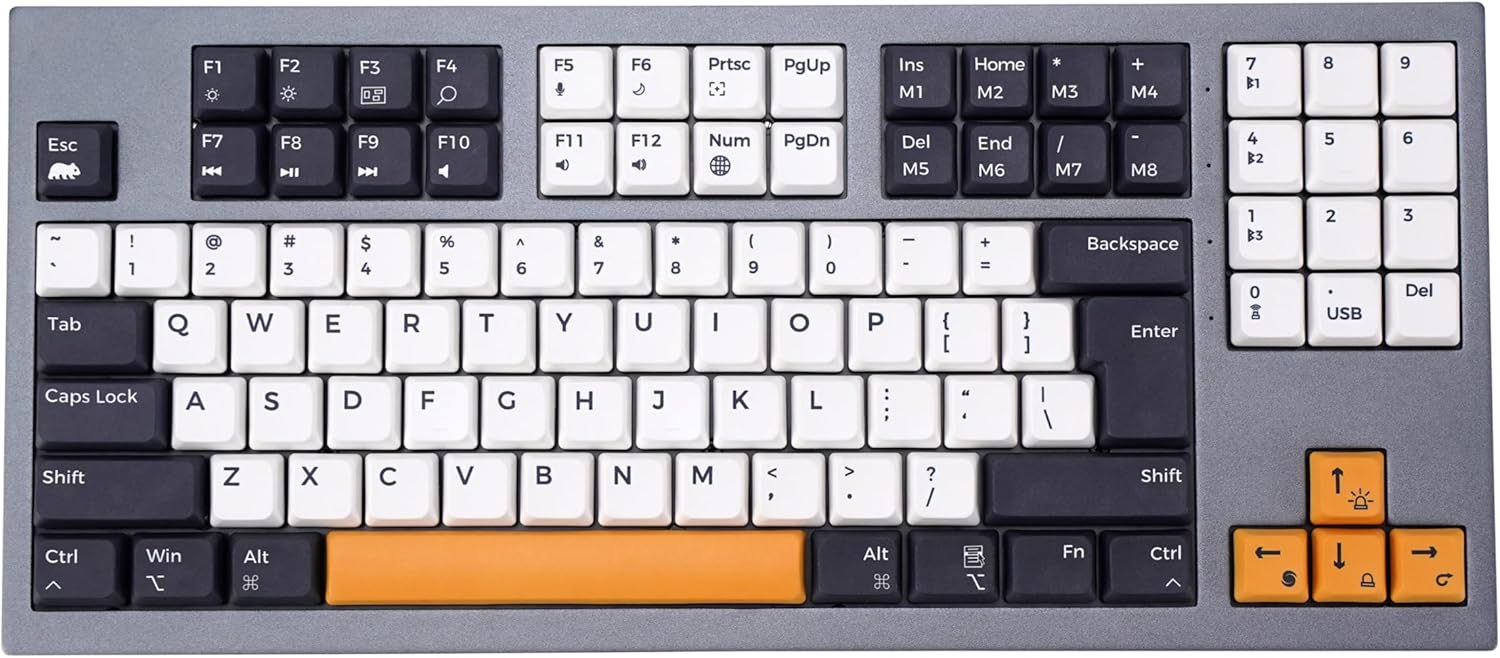

The Willow Professional enters this category with a full-size design that is significantly slimmer than its competitors. Wombat is a sub-brand of KSI Keyboards, and the Willow Professional stands out for its combination of low-profile switches and a tailored full-size layout. The keyboard is also available through other channels.

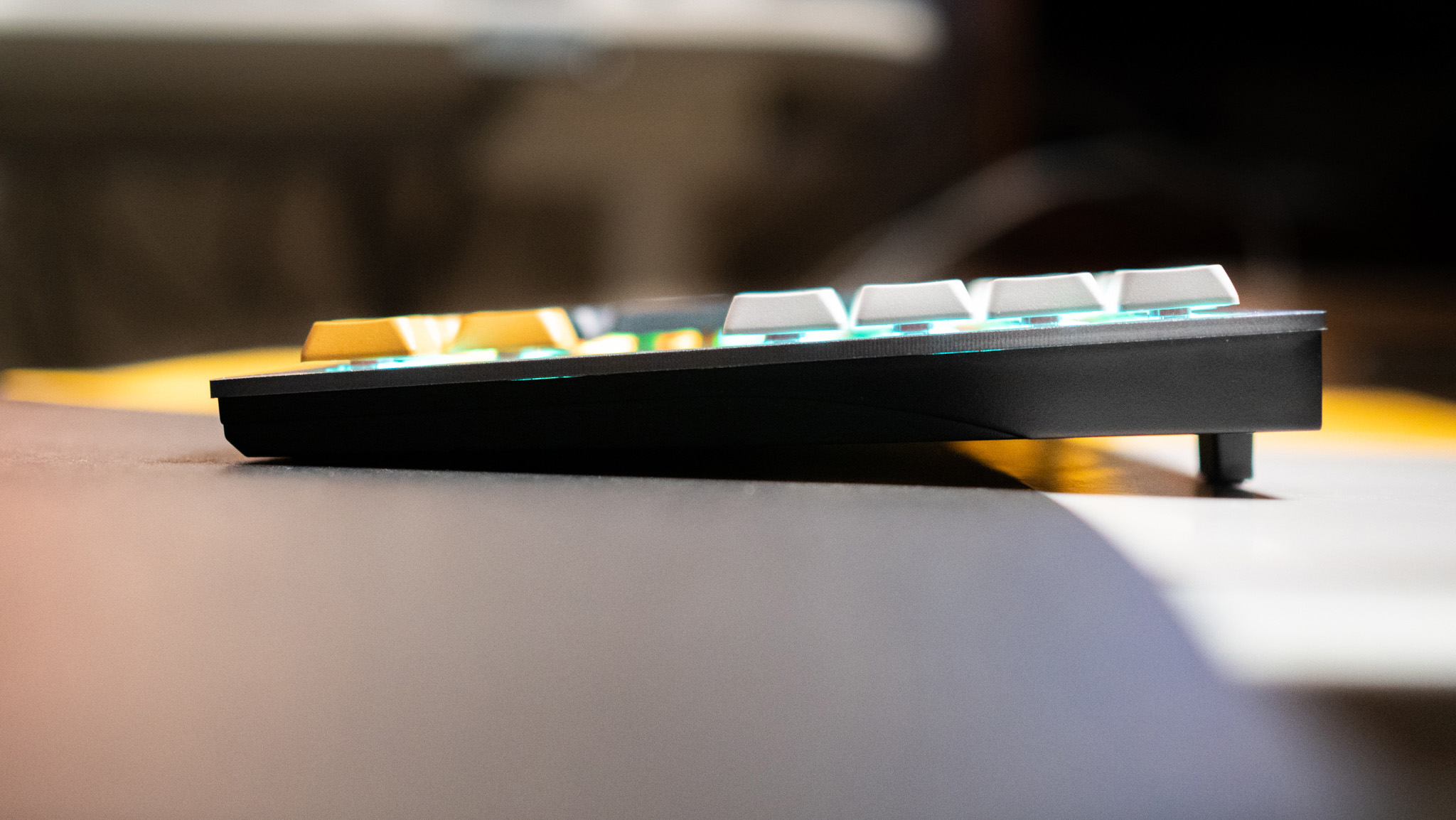

Let’s start with the design. The Willow Professional has a sleek grey finish paired with white and grey keycaps, while pops of bright yellow on the spacebar and arrow keys add visual flair. The keyboard uses an aluminum top plate with a sturdy plastic base, and build quality is excellent throughout. After four months of use, it shows no signs of wear, and the chassis remains rigid.

The key layout deserves a closer look: the Willow Professional places the function and system keys at the top, with a numeric keypad to the right. The result is a spacious layout that doesn’t take up extra desk space; the board is taller than most keyboards but the same width as typical 75% designs.

The placement of the system keys is not ideal. I usually hit Delete with my pinky because it normally sits next to Backspace; here, I land on the numeric keypad instead. The compact layout was jarring at first, but packing the keys into a smaller footprint works surprisingly well.

One feature I appreciate is the magnetic rubber feet at the rear, which make it easy to adjust the typing angle. I generally prefer a 6° angle, but the 4° tilt with the feet attached does make the Willow Professional more comfortable to use.

Having used several low-profile keyboards with mechanical switches, I have ample experience in this area, and the Willow Professional impresses me here. The keyboard uses Gateron’s Red low-profile linear switches, which are among the best currently available. The switch offers good feedback with a 3.0mm travel distance, delivering a typing feel surprisingly close to a standard-height keyboard.

The compact design makes daily use enjoyable, providing the benefits of a mechanical switch without taking up excessive space. The sound profile is good, but a hollow tone creeps in because of the plastic base. Considering the Willow Professional’s price, Wombat should have opted for an all-metal case.

Aside from that, I have few complaints about day-to-day use. The PBT keycaps are durable, with crisp, easily readable legends. The keyboard has vibrant RGB lighting, but the legends are not backlit, so the overall effect is more subdued. Unlike other low-profile keyboards, the switches are soldered.

The Willow Professional works with both Windows and macOS, and an extra set of keycaps is included. Since the keyboard has Bluetooth connectivity, pairing it with an Android phone or tablet is also possible; I mostly paired it with my iPad Pro 13 (2024).

Wombat offers its own software utility, which lets users tailor keys and macros to their needs. VIA integration would offer greater flexibility, but I appreciated Wombat’s software for its smooth operation and reasonable level of customization.

One notable limitation is battery life, which is held back by a modest 1000mAh battery, a smaller-than-usual capacity in today’s market. With RGB lighting enabled, the keyboard does not last a full day of use; with the lighting disabled, I was able to get two days, which is still insufficient.

Some may consider full-size keyboards an anomaly in today’s market, particularly among low-profile designs, so it is refreshing to see the Willow Professional cater to this niche with high-quality design and construction. For anyone who can live with the battery limitations, this is a decent overall option.

Wombat Willow Professional Mechanical Keyboard

The Willow Professional boasts a sleek low-profile design and innovative features. For individuals seeking a compact keyboard with a dedicated numeric keypad and tactile feedback from mechanical switches, this option warrants consideration.

Oil painting by Belgian marine artist François-Etienne Musin depicting HMS Erebus trapped in Arctic ice.

Researchers at the University of Waterloo have identified the remains of a senior officer who perished during Captain Sir John Franklin’s ill-fated 1845 expedition to navigate the Northwest Passage. According to a paper in the Journal of Archaeological Science, DNA analysis confirmed that a tooth recovered from a mandible at one of the associated archaeological sites belonged to Captain James Fitzjames of HMS Erebus. The remains also show clear evidence of cannibalism, corroborating early Inuit accounts of desperate crew members who resorted to eating their dead.

The identification of James Fitzjames as the first identified victim of cannibalism lifts the veil of anonymity that, for 170 years, spared the families of individual Franklin expedition members from the horrific reality of what might have befallen their ancestors’ remains. “Ultimately, the chaos revealed a different truth: personal survival became the dominant principle, supplanting any consideration of rank or social status.”

Franklin’s two ships, HMS Erebus and HMS Terror, became trapped by ice in the Victoria Strait, and all 129 crew members ultimately died. The enduring mystery has captivated people for generations. The novelist Dan Simmons immortalized the expedition in his 2007 horror novel The Terror, which was adapted into a television series for AMC in 2018.

The expedition set sail on May 19, 1845, and was last spotted in July of that year by the captains of two whaling vessels. Scholars have since pieced together a reasonably reliable account of what happened. The crew spent the winter of 1845-1846 on Beechey Island, where the graves of three crew members were later discovered.

When the weather cleared, the expedition sailed into the Victoria Strait before becoming trapped in the ice off King William Island in September 1846. Franklin died on June 11, 1847, according to a surviving document signed by Fitzjames and dated the following April. Fitzjames took command after Franklin’s death, leading the 105 survivors from their ice-trapped ships. It is believed that everyone else died either while encamped for the winter or during the attempted trek back to civilization.

The fate of the expedition remained unclear until 1854, when local Inuit told Scottish explorer John Rae that they had seen approximately 40 people hauling a ship’s boat on a sledge along the southern coast. Several bodies were later found near the mouth of the Back River. In 1859, a subsequent expedition discovered a second site approximately 80 kilometres to the south, at what was named Erebus Bay, along with several more bodies and one of the ships’ boats still mounted on a sledge. In 1861, another site was found just two kilometres away, yielding an even larger number of human remains. When the two sites were rediscovered in the 1990s, archaeologists designated them NgLj-3 and NgLj-2.

The researchers behind this latest study have spent years using DNA analysis to identify remains recovered from these sites, matching genetic profiles against samples from descendants of expedition members. So far, 46 archaeological samples (bone, tooth, and hair) from Franklin expedition sites on King William Island have been genetically profiled and compared with cheek swab samples from 25 descendant donors. Most did not match, but in 2021 one set of remains was identified as chief engineer John Gregory, who served on the Erebus. Since then, the team has added four more descendant donors, including one related to Fitzjames (technically a second cousin five times removed through the captain’s great-grandfather).

In the mid-19th century, Inuit testimony described the desperate measures taken by Franklin expedition members, including cannibalism; Europeans at the time dismissed these accounts as too gruesome to believe. In 1997, bioarchaeologist Anne Keenleyside, then of Trent University, analyzed the skeletal remains at NgLj-2 and found telltale signs of cannibalism: approximately one-quarter of the bones displayed cut marks, suggesting that at least four of the men who died there were eaten by their fellow crew members.

The new study reports the results of DNA testing on 17 tooth and bone samples from the NgLj-2 site, first excavated in 1993. Among the samples was a tooth from a mandible, which became the second to yield a positive identification. “We worked with an exceptional quality sample that enabled us to generate a Y-chromosome profile and were fortunate enough to secure a match,” said a co-author at Lakehead University’s Paleo-DNA lab in Ontario. The authors propose that Fitzjames likely died in May or June 1848.

Fitzjames’s mandible shows multiple cut marks. “Accordingly, it becomes clear that he died prior to a few of the sailors who perished, with social status and hierarchy irrelevant in the face of desperation during the expedition’s final days as individuals fought to survive.”

“Ultimately, the most empathetic interpretation of these findings is to recognize the desperation that drove Franklin sailors to commit an act they would normally abhor, acknowledging the profound suffering that ensued as a result.”

Journal of Archaeological Science, 2024.

In the two decades since the initial mapping of the human genome was completed, the landscape of biological research has been transformed. Genomics has grown exponentially, driving the “omics” revolution forward by integrating data types such as single-cell RNA sequencing, proteomics, and metabolomics.

These technologies are changing our understanding of biological systems at the molecular level, yielding insights into disease pathophysiology, individual variability, and the complex relationships between organisms and their environment, including medications and chemicals. The omics explosion holds immense promise for medicine, diagnostics, and our fundamental understanding of biological processes.

Despite the value of these insights, many life sciences organizations are held back by the limitations of their current infrastructure and technologies. To overcome these challenges, it is crucial to modernize data platforms so that multi-omics approaches can be used effectively in research and development.

In this blog, we explore how technologies like the Databricks Data Intelligence Platform can tackle these challenges, clearing the path for streamlined and efficient multi-omics data management.

Legacy data infrastructure struggles to cope with the intricacies of multi-omics data, particularly in providing a scalable way to integrate and analyze these massive datasets. It also lacks native capabilities for advanced analytics, leaving it ill-equipped to meet the surging demand for AI-driven solutions.

The lack of standardization across isolated omics platforms exacerbates problems with data interoperability, accessibility, and reusability. Organizations must also balance data accessibility with individual privacy and regulatory compliance in an increasingly complex, highly regulated environment.

How are organizations addressing these challenges today? Many use multiple technologies in tandem to navigate the complexities of omics data. Despite its potential benefits, this approach poses several challenges, including:

Omics datasets are enormous and highly complex, and analyzing them effectively requires advanced computational approaches. As data volumes surge, the inherently high-dimensional nature of these datasets introduces significant noise, making it increasingly difficult to extract meaningful and actionable insights. In particular, the high-dimension, low-sample-size (HDLSS) scenario poses a significant challenge in omics analysis, where the risk of overfitting in machine learning models can compromise the generalizability of discoveries. Tackling this challenge demands robust data preprocessing and advanced computational techniques, which existing data architectures may be unable to support.

The lack of consistent standards across various -omics platforms poses significant hurdles to ensuring seamless data interoperability and reusability. Without established guidelines, integrating diverse datasets into a unified structure becomes a daunting task.

Ensuring the accessibility of omics data while maintaining patient privacy and complying with regulations like HIPAA and GDPR is a complex balancing act. The issue is particularly pronounced in a global research setting. As genetic data is increasingly used in diagnostics and disease risk prediction, such as polygenic risk scoring, the ability to track every step of the process, from data acquisition and quality control to model training and explainability, has become increasingly vital.

Life sciences organizations rely on a wide range of professionals, including IT specialists, data scientists, medical researchers, and bench scientists, who conduct research on diverse biological samples. Current data platforms, built on varied technological foundations including high-performance computing (HPC) and various cloud services, require substantial technical support to keep up with the rapidly shifting landscape of omics data.

Adoption of advanced analytics by non-technical stakeholders is impeded by the complexity of these tools and their steep learning curve, which limits access to valuable insights generated from domain data. Without standardized processes and systems for managing research data, this creates a significant hurdle to effective collaboration and data-driven decision-making within life sciences organizations.

Training new models on integrated multi-omics data has direct applications in drug discovery. As single-cell omics data becomes increasingly prevalent, approaches that integrate large-scale multi-omics datasets enable predictive modeling of drug responses and the identification of novel therapeutic targets, driving innovation in personalized medicine. Companies such as Pfizer are developing large language models (LLMs) to generate novel artificial proteins grounded in multi-omics data. Operationalizing these models, however, raises significant hurdles. Processing such enormous datasets requires robust infrastructure that can manage the data efficiently and train complex models cost-effectively on large volumes of multi-modal data.

The Databricks Data Intelligence Platform provides a robust foundation for a multi-omics data management system, addressing the difficulties that both researchers and IT specialists face in handling large-scale omics datasets. Databricks helps organizations overcome key data and analytics hurdles by integrating with popular big data technologies, scaling data processing, and providing strong collaboration capabilities.

Built on scalable cloud infrastructure, Databricks is well-equipped to handle the vast and complex datasets characteristic of omics analysis. With Apache Spark integration and a high-performance compute engine, Databricks enables cost-effective distributed data processing. Furthermore, combining these components eliminates the need for separate tech stacks for data management and advanced analytics, reducing friction and shortening time-to-value.

The Databricks Photon engine significantly accelerates Spark-based genomic pipelines and similar tools, speeding up and simplifying the analysis of massive genomic datasets, particularly for identifying genetic targets through genome-wide association studies (GWAS).

The platform enables frictionless collaboration by combining unstructured, semi-structured, and structured data from data lakes and warehouses on a single platform, building on open-source technologies such as Apache Spark and Hadoop. This approach supports data integration across many datasets by accommodating open data formats and fostering interoperability through versatile interfaces that minimize vendor dependence and streamline cross-system data integration.

By building on open-source technologies, Databricks provides a unified data repository that keeps data discoverable, accessible, and integrable with external systems in a way that complies with regulatory requirements while maintaining audit trails. Researchers benefit from applying FAIR principles to scientific data management, which fosters collaboration, supports reproducibility, and generates data-driven insights.

Databricks Unity Catalog helps organizations meet the strict requirements of regulations such as HIPAA and GDPR while simplifying data discovery and access. With its centralized metadata repository and search capabilities, users can quickly find relevant data based on context and meaning. The platform’s governance and auditing features help ensure data security and compliance.

In addition, Unity Catalog offers metadata management, tagging, and data lineage tracking, which improve the discoverability and reproducibility of experiments. The platform also integrates with open-source technologies, including a secure data-sharing protocol that enables trusted data exchange between organizations. This facilitates secure collaboration among researchers from different organizations while meeting data residency requirements.

Organizations are empowered to maintain rigorous information security protocols while granting authorized users seamless access to vital data for evaluation and analysis within a secure, compliant environment that transcends organizational silos.

Databricks offers a comprehensive, self-serve data platform that streamlines infrastructure management and unites various data types. Its interfaces, which include intuitive navigation and simple data import, make data access and evaluation straightforward. This approach simplifies complex data exchanges, making the platform usable for technical and non-technical professionals alike.

By streamlining data access, reducing IT burdens, and fostering collaboration among diverse teams, Databricks supports faster decision-making and innovation in drug discovery and development.

Databricks’ platform supports the development of generative AI models through its infrastructure for pre-training, fine-tuning, and deploying AI applications at scale. With Mosaic AI, Databricks offers solutions designed for cost-effective training of foundation models on a company’s proprietary datasets. Mosaic AI also provides a scalable platform for building, deploying, and managing AI models across their entire lifecycle.

This supports efficient, large-scale operationalization, helping organizations harness generative AI and maximize returns on their AI investments.

In our forthcoming technical blog series, we’ll delve into harnessing the power of Databricks to drive insights from complex multi-omics data.

The series will cover large-scale association studies and the pre-training of a Geneformer model using Mosaic AI, showing how the platform supports both genome analysis and AI-driven insights.

Databricks offers a comprehensive platform that addresses the diverse complexities of handling omics data. With its scalable infrastructure, interoperability features that foster collaboration, stringent security safeguards, and advanced AI capabilities, Databricks helps pharmaceutical companies uncover actionable insights from complex omics datasets. By using Databricks, organizations can accelerate their research and development (R&D) efforts, leading to innovation and improved patient outcomes.

See what’s new in data and AI solutions for healthcare and life sciences.

October is Cybersecurity Awareness Month (CAM). Throughout the month, we will be sharing advice, guidance, and recommendations on various security topics to inform and empower the community.

A hard truth for some of you is that crafting a strong password can be a daunting task, and that leaves many people exposed to risk. A strong password is at least 12 characters long and includes symbols, numbers, and both uppercase and lowercase letters.

When it comes to creating strong passwords, a general guideline is that length is key, with longer passwords generally being more secure than shorter ones.
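The composition rule above can be expressed as a short check. This is a minimal sketch: the function name `meets_basic_policy` and the sample passwords are illustrative assumptions, and passing the check only means a password meets the stated minimum, not that it is hard to guess.

```python
import string


def meets_basic_policy(password: str, min_length: int = 12) -> bool:
    """Return True if the password is long enough and mixes all four character classes."""
    return (
        len(password) >= min_length
        and any(c.islower() for c in password)
        and any(c.isupper() for c in password)
        and any(c.isdigit() for c in password)
        and any(c in string.punctuation for c in password)
    )


# Hypothetical sample passwords, for illustration only.
print(meets_basic_policy("password"))      # too short, one character class
print(meets_basic_policy("!Example2024"))  # 12 characters, all four classes
```

Note that the second sample passes the check even though it follows a predictable pattern, which is why a composition rule alone is not a reliable measure of strength.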

I recommend generating passwords that are so complex and lengthy that they’re virtually impossible for anyone to recall or guess.

Sounds backwards, right? Why create passwords so complex that you will struggle to remember them?

The point of that rule is two-fold: to get you thinking about password length and why it matters, and to get you to consider a password manager. Rather than struggling to remember long, intricate passwords, why not use a password manager that can securely store and recall them for you?

The primary reason you require lengthy passwords is to prevent successful guessing and cracking attempts.

Cracking works like this: after a website breach in which stored password hashes are stolen, a determined criminal hashes candidates drawn from dictionaries of commonly used words and educated guesses, then compares the results against the stolen hash values.

The same logic applies to direct password guessing. If your password is built from personal details, such as the year you got married (say, 1995) and your spouse’s publicly known name (say, April), it can easily be guessed.
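To make the guessing process concrete, here is a minimal sketch of how candidates built from personal details can be checked against a stolen hash. It assumes an unsalted SHA1 hash purely for illustration; the function names, the word list, and the candidate patterns are hypothetical and are not taken from any particular cracking tool.

```python
import hashlib
from itertools import product


def sha1_hex(text: str) -> str:
    """Hash a candidate the same way the (assumed) breached site did."""
    return hashlib.sha1(text.encode("utf-8")).hexdigest()


def guess_from_personal_details(target_hash, words, years):
    """Try simple word/year combinations and return the first match, or None."""
    for word, year in product(words, years):
        for candidate in (f"{word}{year}", f"{year}{word}", f"{word.lower()}{year}"):
            if sha1_hex(candidate) == target_hash:
                return candidate
    return None


# Illustrative data: a "leaked" hash and details an attacker could look up.
leaked_hash = sha1_hex("April1995")
found = guess_from_personal_details(leaked_hash, ["April", "Rover"], range(1990, 2000))
print(found)  # prints April1995
```

Only a few dozen candidates are needed here, which is why passwords assembled from publicly known details offer so little protection.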

Here is an example using real data.

Taking roughly three minutes per group, it was possible to attack all six- to ten-character passwords among the 100,000 most frequently used. More than 80,000 passwords were cracked, most of them far more quickly than the time spent writing this post so far.

Since most websites require passwords of at least eight characters that include uppercase and lowercase letters, numbers, and special characters, you would logically presume that cracking or guessing such passwords would be difficult.

However, that is not the case, largely because of password reuse and the use of widely known phrases, words, and patterns.

What effectively safeguards your accounts across websites is the use of unique, complex passwords that avoid commonly used words or phrases. If a password is compromised on one website, unique passwords ensure that the damage is isolated to that account alone.

Be advised: if your password contains any of the following words, update it immediately. Among the most commonly used passwords, these root words stand out as easily guessable building blocks.

The list shows a clear pattern. It includes names, states and cities, sports terms, car terms, religious phrases, military jargon, slang, family terms, emotional language, band names, and colors.

Essentially, words that appear in dictionaries do not make strong passwords, as they lack sufficient complexity and uniqueness.

Humans are bad at randomness. When we try to be random, we fall back on familiar words and expressions. We might even throw in a ‘!’ or ‘@’ along with a few numbers for good measure.

A password built this way may initially seem strong, but it is actually quite weak.

Such a password does meet the usual criteria, but there are two significant reasons why using one is ill-advised. First, terms such as “Ruby Red” and “Crimson” are well known. Second, placing an exclamation mark (!) at the start of a password and the current year at the end are common tactics that are easily predicted.

Using a cracking mask such as `-1 ?u?l !?1?1?1?1?1?1?12024` (an exclamation mark, seven uppercase or lowercase letters, then 2024), a password of this shape can be cracked within 12 seconds when it is hashed with SHA1, or in approximately two minutes when it is hashed with SHA3-256.

Two different hash functions were tested. This matters because the hash function determines how passwords are stored on a website, and therefore how quickly guesses can be checked.

By contrast, a truly random password of the same length would be extremely difficult to crack. Guessing a randomly generated 12-character password hashed with SHA1 could take approximately 54 days, and SHA3 would take even longer.
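To make the gap between a patterned and a random password concrete, here is a minimal Python sketch that counts how many candidates an attacker has to try in each case. It assumes the mask `-1 ?u?l !?1?1?1?1?1?1?12024` follows hashcat-style conventions (custom charset 1 is upper plus lower case, literal characters are fixed) and that a "random" password draws from the 95 printable ASCII characters. The function names are illustrative, and the sketch deliberately makes no claim about cracking time, which depends on hardware and hash function.

```python
# Assumed sizes of hashcat-style built-in character sets:
# ?l lowercase, ?u uppercase, ?d digits, ?s symbols (incl. space), ?a all printable.
BUILTIN_SIZES = {"l": 26, "u": 26, "d": 10, "s": 33, "a": 95}


def charset_size(spec: str) -> int:
    """Number of characters described by a charset spec such as '?u?l'."""
    size = 0
    i = 0
    while i < len(spec):
        if spec[i] == "?" and i + 1 < len(spec):
            size += BUILTIN_SIZES[spec[i + 1]]
            i += 2
        else:
            size += 1  # a literal character adds exactly one option
            i += 1
    return size


def mask_keyspace(mask: str, custom1: str = "") -> int:
    """Number of candidate passwords a mask describes."""
    total = 1
    i = 0
    while i < len(mask):
        if mask[i] == "?" and i + 1 < len(mask):
            key = mask[i + 1]
            total *= charset_size(custom1) if key == "1" else BUILTIN_SIZES[key]
            i += 2
        else:
            i += 1  # literal position: only one possible character
    return total


def random_keyspace(length: int, alphabet_size: int = 95) -> int:
    """Number of candidates for a fully random password of a given length."""
    return alphabet_size ** length


if __name__ == "__main__":
    patterned = mask_keyspace("!?1?1?1?1?1?1?12024", custom1="?u?l")
    random_12 = random_keyspace(12)
    print(f"patterned 12-character password: {patterned:,} candidates")
    print(f"random 12-character password:    {random_12:,} candidates")
    print(f"ratio: about {random_12 // patterned:,} times more guesses")
```

Both passwords are 12 characters long, but only seven positions of the patterned one are actually unknown to an attacker who guesses the pattern.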

When a password is hashed using bcrypt, as many websites do, the cracking time increases dramatically: it would take roughly 164 thousand years for an attacker to crack the hash.
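The difference between a fast hash and a deliberately slow one can be sketched in a few lines of Python. bcrypt is not part of Python's standard library, so this example substitutes PBKDF2-HMAC-SHA256 from `hashlib` as a stand-in for a slow password hash. It is not bcrypt itself, and the iteration count and salt handling are illustrative assumptions rather than recommendations.

```python
import hashlib
import os
import time


def fast_hash(password: str) -> str:
    """A single SHA1 pass: cheap to compute, so cheap to brute-force."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest()


def slow_hash(password: str, salt: bytes, iterations: int = 200_000) -> str:
    """PBKDF2 repeats the underlying hash many times to make each guess costly.

    The iteration count here is an assumed value for illustration only.
    """
    derived = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return derived.hex()


def seconds_for(fn, *args) -> float:
    """Wall-clock time of one call, for a rough comparison."""
    start = time.perf_counter()
    fn(*args)
    return time.perf_counter() - start


if __name__ == "__main__":
    salt = os.urandom(16)  # a fresh random salt per stored password
    print(f"one SHA1 guess:   {seconds_for(fast_hash, 'example'):.6f} s")
    print(f"one PBKDF2 guess: {seconds_for(slow_hash, 'example', salt):.6f} s")
```

Every guess an attacker makes has to pay the same per-hash cost, which is why a slow hash stretches cracking times so dramatically.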

The primary objective behind this ongoing password conversation is to convey two crucial pieces of information.

Human unpredictability is an illusion: people struggle to generate true randomness. And because a leaked or easily guessed password is vulnerable regardless of how it is hashed, no amount of hashing can protect it, and every account that shares it is at risk.

As passwords grow in length, their distinctiveness increases, rendering them progressively safer, provided they are not reused across multiple websites.

With a password manager, you’ll generate truly random, complex, and unique passwords for each website you visit.

Which password manager should you use? That is the good part.

While they differ in the details, their fundamental capabilities are remarkably consistent.

Detailed comparisons of pricing and features across numerous password managers are available, and they are worth taking the time to study.

What’s crucial when selecting a password manager is its ability to generate robust passwords that are at least 20 characters long, comprising a well-balanced mix of uppercase and lowercase letters, numerals, and special characters, as well as the capacity to create unique passwords for each website.
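As a rough illustration of what a password manager's generator does, the following Python sketch builds a 20-character password from a cryptographically secure random source and retries until all four character classes are present. The function name and the choice of `string.punctuation` as the special-character set are assumptions for this example. Real password managers may use different alphabets and rules.

```python
import secrets
import string

LOWER = string.ascii_lowercase
UPPER = string.ascii_uppercase
DIGITS = string.digits
SPECIALS = string.punctuation
ALPHABET = LOWER + UPPER + DIGITS + SPECIALS


def generate_password(length: int = 20) -> str:
    """Return a random password containing all four character classes."""
    if length < 4:
        raise ValueError("length must be at least 4 to fit every character class")
    while True:
        # secrets draws from the operating system's secure random source.
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (
            any(c in LOWER for c in candidate)
            and any(c in UPPER for c in candidate)
            and any(c in DIGITS for c in candidate)
            and any(c in SPECIALS for c in candidate)
        ):
            return candidate


if __name__ == "__main__":
    print(generate_password())
```

Calling `generate_password()` once per website gives each account its own unrelated password, which is the property that limits the damage of a single breach.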

Even if a website does not support long passwords, a password manager can still generate a truly random password within the allowed length, which minimizes the impact of that limitation.

At the end of the day, a password manager eliminates password reuse and easily guessed passwords or phrases, because the passwords it generates are truly random.

In addition to a password manager, multi-factor authentication (MFA) offers an extra layer of protection against potential breaches. We will explore what MFA has to offer in another blog post soon. If your password manager lets you enable MFA, it is generally advisable to do so.

Lastly, we’ve got passkeys.

You may have heard about them. If circumstances permit, we will explore that topic thoroughly later this month. Passkeys provide a convenient alternative to traditional passwords for secure authentication. However, deploying them at scale, including managing them across ecosystem lock-ins, poses distinct challenges that the security and development communities are actively tackling. As the technology matures and those challenges are addressed, passkeys are increasingly likely to become a standard feature in the near future.

The truth is, some .

Keep Protected!


The first version of tfhub is now available on CRAN. tfhub is an R interface to TensorFlow Hub, a library for publishing, discovering, and using reusable parts of machine learning models. A module is a self-contained piece of a TensorFlow graph, together with its weights and assets, that can be reused across different tasks in a process known as transfer learning.

The CRAN version of tfhub can be installed with `install.packages("tfhub")`.

After installing the R package, you also need to install the TensorFlow Hub Python package. You can do this by running `tfhub::install_tfhub()`.

The essential function of tfhub is `layer_hub`, which works just like a Keras layer but allows you to load a complete pre-trained deep learning model.

For example, you can load the MobileNet model pre-trained on the ImageNet dataset. tfhub models are cached locally, so the same model does not need to be downloaded again the next time you use it.

You can now use layer_mobilenet as a normal Keras layer. For example, you can define a model:

Model: "model"

| Layer (type) | Output Shape | Param # |
| --- | --- | --- |
| input_2 (InputLayer) | [(None, 224, 224, 3)] | 0 |
| keras_layer_1 (KerasLayer) | (None, 1001) | 3,540,265 |

Total params: 3,540,265
Trainable params: 0
Non-trainable params: 3,540,265

This model can now be used to predict ImageNet labels for an image. As an example, here are the results for the well-known photograph of Admiral Grace Hopper:

**Classifications**

| Rank | Class description | Score |
| --- | --- | --- |
| 1 | Military Uniform | 9.76 |
| 2 | Bearskin | 5.92 |
| 3 | Swimsuit | 5.73 |
| 4 | Mortarboard | 5.40 |
| 5 | Pickelhaube | 5.01 |

TensorFlow Hub offers a diverse range of pre-trained image, text, and video models.

All available models can be found on the TensorFlow Hub website.

You can find more examples of `layer_hub` usage in the articles on the TensorFlow for R website.

Usage with recipes and the Feature Spec API

tfhub also offers recipes steps that make it easier to use pre-trained deep learning models in your machine learning workflow.

For example, you can define a recipe that uses a pre-trained text embedding model.

A complete running example is also available.

You can also use tfhub with the new Feature Spec API implemented in tfdatasets. A complete example is available for this as well.

We hope our readers have fun experimenting with Hub models and find good applications for them. If you run into any problems, please report them by opening an issue in the tfhub repository.

Text and figures are licensed under Creative Commons Attribution. Figures that have been reused from other sources do not fall under this license and can be recognized by a note in their caption: “Figure from …”

For attribution, please cite this work as:

Falbel (2019, Dec. 18). Posit AI Blog: tfhub: An R Interface to TensorFlow Hub. Retrieved from https://blogs.rstudio.com/tensorflow/posts/2019-12-18-tfhub-0.7.0/

BibTeX citation

@misc{tfhub,
  author = {Daniel Falbel},
  title = {{Posit AI Blog: tfhub: An R Interface to TensorFlow Hub}},
  url = {https://blogs.rstudio.com/tensorflow/posts/2019-12-18-tfhub-0.7.0/},
  year = {2019}
}

An Israel-based company has sealed a deal that enables it to establish new, large-scale manufacturing capabilities to provide aviation safety solutions for drones and for the burgeoning Advanced Air Mobility (AAM) industry.

On September 25, ParaZero announced that it had secured a $187,000 purchase order from a prominent US-based AAM company. Following the successful completion of the customer's drone safety-system customization project, the order covers ParaZero's preparation for serial production along with an initial shipment of safety systems.

The company's announcement underscores the role this order plays in meeting the customer's requirements by integrating ParaZero's drone safety technologies into its AAM system.

The deal marks a significant milestone in ParaZero's effort to help shape the future of the advanced air mobility (AAM) and unmanned aerial vehicle (UAV) sectors. In an interview, Amir Lavi, head of sales at ParaZero, said the company's safety solutions, such as its parachute recovery system, have played a crucial role in enabling drone operators to obtain long-sought authorizations to fly over people or beyond visual line of sight.

“I firmly believe that the future of urban air mobility and drones holds tremendous potential,” he said. “ParaZero sees itself playing a pivotal role in enabling that future.”

ParaZero, a global leader in safety solutions, collaborates closely with national civil aviation authorities across North and South America, Europe, and Australia to provide cutting-edge technology enabling industrial drone flights.

“We are currently collaborating with numerous original equipment manufacturers and leading drone producers globally,” he said. “The most notable examples include Speedbird in Brazil and Draganfly in Canada. Companies like Speedbird are revolutionizing logistics with beyond-visual-line-of-sight (BVLOS) deliveries, while heavy-lift drones from Draganfly are opening up new possibilities for transporting large or bulky cargo.”

In addition to working with drone manufacturers, the company is developing safety solutions for manned aircraft, including the Lift Hexa, manufactured in Austin, Texas, and the Jetson One, produced in Tuscany, Italy. Lavi hinted that ParaZero may collaborate with another prominent US-based aerospace manufacturer, but he was not authorized to disclose the company's name.

“Regulations vary significantly from one region of the world to another,” he noted. Since its inception in 2013, ParaZero has supplied more than 10,000 units across five continents.

In the United States, Lavi noted, ParaZero collaborated with DJI to help the company integrate the safety features its Mavic 3 drone needed to secure an FAA waiver allowing flights over people.

Recently, the FAA reformed its regulations governing drone operations near people, categorizing unmanned aerial vehicles (UAVs) into three classes based on their weight. As a Class Two drone, the DJI Mavic 3 must be equipped with an ASTM-certified parachute-recovery system, alongside other safety features such as remote ID, strobe lights and prop guards, to enable authorized pilots to obtain a waiver for flying over people.

The safety system for the DJI Mavic 3 recently achieved ASTM certification following a rigorous testing process, demonstrating that the drone can go through a series of simulated failures without crashing in a way that could harm people or property. The tests covered a range of scenarios, including full forward speed, hovering, manual trigger activation, autonomous trigger activation, single-motor shutdown, and simultaneous shutdown of all motors.

According to Lavi, the aircraft had to complete 45 consecutive successful drops, meaning that if it failed on the 45th attempt after passing the first 44, testing would have to start all over again.

Simultaneously, ParaZero collaborates with drone manufacturers to ensure their autonomous systems conform to the requisite safety standards across various geographic regions and regulatory frameworks. To ensure seamless BVLOS deliveries in Brazil, Speedbird must demonstrate the robustness of its overall security framework, alongside verifying the reliability of its ParaZero parachute-recovery system for added safety and assurance.

Prior to granting a Beyond Visual Line Of Sight (BVLOS) waiver in the United States, the Federal Aviation Administration (FAA) mandates that operators thoroughly test both the integrity of their system and the reliability of the parachute involved.

According to ParaZero, its technology is tailored to each drone's configuration and size, from lightweight models weighing one or two kilograms up to heavy-duty aircraft of one ton.

ParaZero has been developing parachute-recovery systems and related safety measures for commercial drones. The company has now announced plans to expand into the counter-drone and defense sectors. It has secured deals with two defense firms, but details of these partnerships remain undisclosed.

While the company serves multiple global markets, Lavi highlighted that ParaZero has secured a distinct agreement with a major US-based entity, a hub where unmanned aerial systems (UAS) and advanced air mobility (AAM) enterprises connect, collaborate, and grow their businesses.

The company has been working with first-responder organizations such as the Chicago Police Department to support their drone operations, as well as with numerous media outlets including CNN, ABC News, and Fox News. ParaZero also provides its safety expertise to construction companies that use drones for mapping and surveying.

“After all, one of the most significant advancements lies in video, giving content creators the versatility to record monumental events on a grand scale and to produce advertisements and films that were previously unimaginable,” he said. “That allows operators to win more jobs and generate more revenue.”


As Chief Editor at DRONELIFE and CEO of JobForDrones, a premier marketplace for drone companies, Miriam McNabb is a keen observer of the burgeoning drone industry and its corresponding regulatory landscape. Miriam has authored more than 3,000 articles focused on the industrial drone sector, establishing herself as a prominent and widely recognized authority in the field, with a reputation that extends globally through her speaking engagements. Miriam holds a degree from the University of Chicago, bringing more than two decades of experience to her work in cutting-edge technology sales and marketing for innovative solutions.


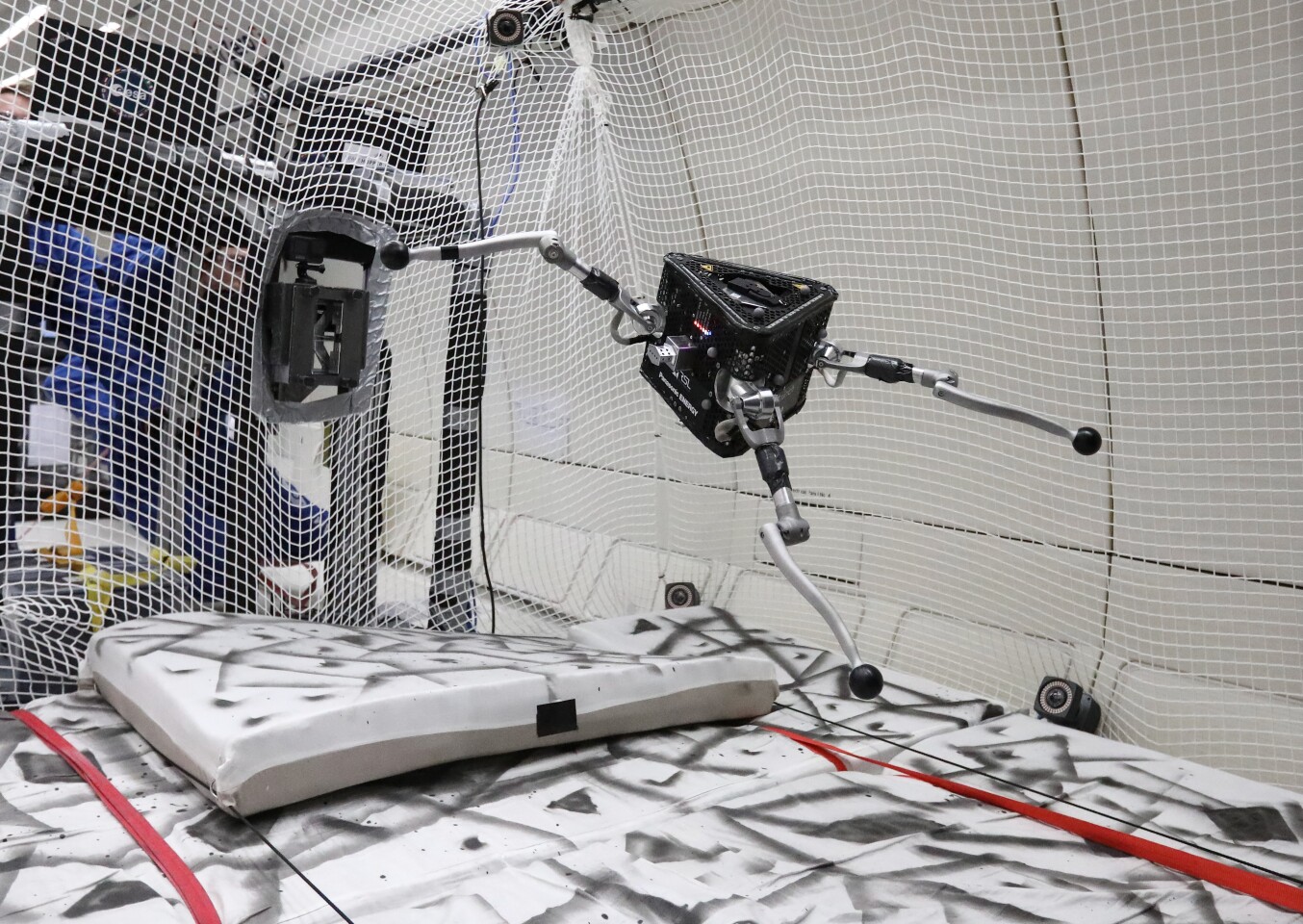

A three-legged robot may eventually hop across the surfaces of asteroids in search of valuable minerals. Dubbed the SpaceHopper, the bot was recently tested on a zero-gravity aircraft flight.

The SpaceHopper programme was launched approximately two and a half years ago as a student research project at ETH Zurich in Switzerland.

The project aims to develop effective strategies for exploring low-gravity celestial bodies such as asteroids and moons. Such bodies could contain valuable resources like rare-earth metals, and studying them may also help scientists better understand how the universe formed.

The SpaceHopper has a triangular body made of aerospace-grade aluminum, with three articulated legs, one at each corner. Each leg has a knee joint and a hip joint: two motors work together via a differential drive mechanism to move the hip, while a third motor actuates the knee.

ETH Zurich/Jorit Geurts

Onboard deep-learning-based software coordinates the movements of the legs, enabling the robot to perform a set of specific tasks. These include jumping, keeping the robot's orientation steady during flight, and landing in a controlled manner at designated locations.

Together, the nine leg motors propel the SpaceHopper a considerable distance from the asteroid's surface when it jumps. Once airborne, the robot stabilises its orientation by moving its legs to shift its centre of gravity. Upon landing, its legs absorb the shock and steady the bot to prevent it from tipping over.

Initial tests of these capacities were conducted at ETH Zurich’s laboratory, where the robot was connected to a counterbalance and a rotating stabilizer to mimic the low-gravity conditions found on the dwarf planet Ceres.

In late 2022, however, members of the student team took the SpaceHopper on an Air Zero G parabolic flight hosted by the European Space Agency and French company Novespace. During parabolic flights, an Airbus A310 airliner performs a series of climbs and dives, creating short periods of zero-gravity conditions inside the aircraft.

Nicolas Courtioux

During the test flight, the robot successfully carried out its pre-programmed routine, repeatedly jumping inside the aircraft and reorienting itself while in freefall. Watch the accompanying video for a summary of the test highlights.

Researchers at ETH Zurich previously made headlines with a four-legged asteroid-hopping robot, known as… The SpaceHopper's three-legged design was chosen to significantly reduce its size and weight. The robot weighs approximately 5.2 kilograms (11.5 pounds), making it feasible to transport and deploy it from a small unmanned vehicle.

What’s the best way to explore asteroids effectively? To address this challenge, we’re developing a hopping robotic system designed specifically for asteroid investigation. By leveraging cutting-edge robotics and innovative propulsion technology, our hopper will enable scientists to gather valuable data and samples from these celestial bodies with unprecedented precision. With its ability to jump over rough terrain and navigate challenging environments, the robotic hopper will revolutionize our understanding of asteroids and their potential for harboring extraterrestrial life.
