Across industries, artificial intelligence (AI) is transforming processes, boosting productivity, and driving technological advances, and it is stimulating investment in accelerators, high-performance computing engines, and specialized neural processing units. Many companies first adopt Retrieval-Augmented Generation (RAG) for limited inference use and then scale up to serve a broader customer base. Companies that process large amounts of private data may choose to build their own training clusters to achieve the accuracy that custom models built on select data can deliver. Whether you are deploying a small AI cluster with a few accelerators or a larger one with hundreds, you need a scalable network that connects and manages all of your nodes.

The key? Careful planning of the network design. Well-designed networks keep accelerators running at maximum efficiency, let jobs complete faster, and keep tail latency low. To speed up job completion, the network needs to prevent congestion or, at a minimum, detect it early. The network must handle traffic smoothly, even during periods of congestion, and resolve any congestion quickly.

That's where data center quantized congestion notification (DCQCN) comes in. DCQCN works best when explicit congestion notification (ECN) and priority flow control (PFC) are used together. ECN reacts first on a per-flow basis, while PFC serves as a hard backstop that mitigates congestion and prevents packet drops. Our reference material covers these concepts in depth. We have also introduced tools that simplify deployment in line with our blueprints and best practices. In addition, Cisco Nexus 9000 Series Switches use dynamic load balancing to manage congestion in the network.
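The division of labor between ECN and PFC can be illustrated with a minimal sketch. It assumes the RED-style marking curve commonly described for DCQCN (no marking below a minimum queue threshold, a linear ramp up to a maximum probability, and marking of every packet above the maximum threshold) and a simple PFC pause threshold. The function names and all threshold values are hypothetical, not Nexus defaults.

```python
def ecn_mark_probability(queue_depth, k_min, k_max, p_max):
    """Probability of ECN-marking a packet for a given egress queue depth.

    Assumed RED-style curve: 0 below k_min, linear ramp to p_max at k_max,
    and every packet marked once the queue reaches k_max.
    """
    if queue_depth <= k_min:
        return 0.0
    if queue_depth >= k_max:
        return 1.0
    return p_max * (queue_depth - k_min) / (k_max - k_min)


def pfc_should_pause(buffer_depth, xoff_threshold):
    """PFC backstop: ask the upstream port to pause once the buffer is this full."""
    return buffer_depth >= xoff_threshold


# Hypothetical thresholds, in kilobytes, chosen only for illustration.
K_MIN, K_MAX, P_MAX, XOFF = 150, 3000, 0.1, 4000

for depth in (100, 1575, 3500, 4200):
    p = ecn_mark_probability(depth, K_MIN, K_MAX, P_MAX)
    print(depth, round(p, 3), pfc_should_pause(depth, XOFF))
```

In this sketch the ECN thresholds sit below the PFC threshold, so senders are asked to slow down per flow well before any port is paused.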

Load balancing has evolved significantly over the years, and conventional methods are still used in many scenarios. These techniques distribute incoming traffic across multiple servers or nodes to increase overall capacity and reduce response times.

Dynamic approaches, in contrast, use real-time data and adaptive decision-making to adjust load distribution as conditions change.

Conventional methods include:

* Round-Robin (RR) algorithm: Simplest and most basic approach, where incoming requests are directed to each server in sequence.

* Least-Connected (LC) algorithm: Directs new connections to the server with the fewest active connections, thereby reducing the load on busy servers.

* IP Hash algorithm: Uses a hash function based on client IP addresses to distribute traffic across multiple servers.

Dynamic approaches involve:

* Server-Side Load Balancing (SSLB): Distributes incoming requests based on real-time data from each server’s performance and capacity.

* Client-Side Load Balancing (CSLB): Adjusts load distribution dynamically according to client-side factors such as network latency and throughput.

Traditional load balancing in the network relies on equal-cost multi-path (ECMP), a routing approach in which a flow, once assigned to a path, typically stays on that path until the flow ends. When several flows persist on the same paths, some links can become heavily utilized while others sit underutilized, which leads to congestion on the busy links. In an AI training cluster, this imbalance can increase job completion times and tail latency, undermining the overall efficiency of training.
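The static nature of ECMP can be seen in a minimal sketch. It assumes a generic hash over the 5-tuple; real switches use their own hardware hash functions, and the `ecmp_pick` helper, addresses, and ports below are hypothetical.

```python
import hashlib
from collections import Counter


def ecmp_pick(flow, num_links):
    """Map a 5-tuple to a link index with a static hash (illustrative only)."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % num_links


# Hypothetical flows: (src_ip, dst_ip, protocol, src_port, dst_port)
flows = [("10.0.0.%d" % i, "10.0.1.1", "udp", 49152 + i, 4791) for i in range(8)]

# Count how many flows land on each of 4 equal-cost links.
placement = Counter(ecmp_pick(f, 4) for f in flows)
print(dict(placement))
```

Because the link is a pure function of the 5-tuple, a flow never moves, however loaded its link becomes. With only a few large flows, as is typical in training traffic, nothing guarantees an even spread across links.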

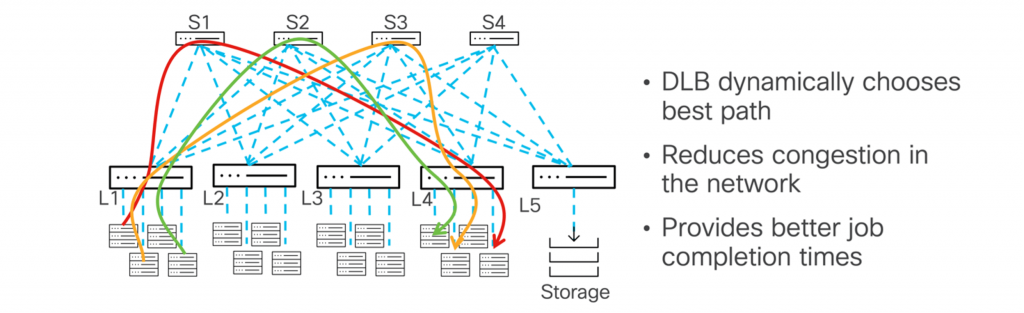

Because the state of the network changes constantly, load balancing needs to be dynamic and driven by real-time feedback from network telemetry or by user configuration. Dynamic load balancing (DLB) distributes traffic based on current network conditions, so congestion can be avoided and overall performance improves. As the state of the network changes, a flow's path can change with it, shifting to an alternative path when the primary one becomes congested.

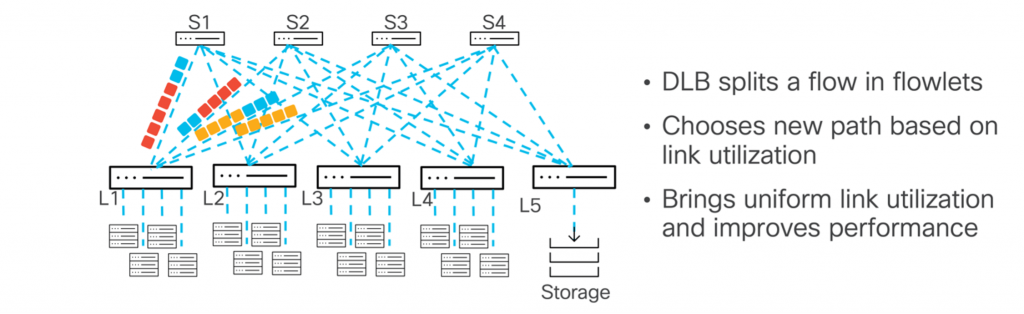

The Nexus 9000 Series uses link utilization as a parameter when choosing among multiple paths. Because link utilization is dynamic, rebalancing traffic based on path utilization enables more efficient forwarding and reduces congestion. When comparing ECMP and DLB, note this key difference: once ECMP assigns a flow to a path, the flow stays on that path even if the link becomes congested or heavily utilized. DLB, by contrast, starts by placing the 5-tuple flow on the least-utilized link. If that link becomes heavily utilized, DLB redirects the next burst of packets (known as a flowlet) to a different, less congested link.
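The flowlet behavior described above can be sketched as follows. This is a simplified model under stated assumptions, not the Nexus implementation: utilization is approximated by cumulative bytes sent per link, and a new flowlet is assumed to start whenever a flow has been idle longer than a configurable gap. The `FlowletBalancer` class and its parameters are hypothetical.

```python
class FlowletBalancer:
    """Toy flowlet-based load balancer over equal-cost links."""

    def __init__(self, num_links, gap):
        self.util = [0] * num_links   # bytes sent per link (stand-in for utilization)
        self.gap = gap                # idle time that marks the start of a new flowlet
        self.state = {}               # flow -> (current link, time last packet was seen)

    def _least_utilized(self):
        return min(range(len(self.util)), key=lambda i: self.util[i])

    def forward(self, flow, size, now):
        entry = self.state.get(flow)
        if entry is None or now - entry[1] > self.gap:
            # New flow or new flowlet: safe to re-pick the least-utilized link.
            link = self._least_utilized()
        else:
            # Same flowlet: stay on the current link to preserve packet order.
            link = entry[0]
        self.state[flow] = (link, now)
        self.util[link] += size
        return link


lb = FlowletBalancer(num_links=2, gap=5)
print(lb.forward("A", 1000, now=0))   # first packet of flow A
print(lb.forward("A", 1000, now=1))   # same flowlet, same link
print(lb.forward("B", 100, now=2))    # new flow goes to the idle link
print(lb.forward("A", 1000, now=10))  # idle gap exceeded, flow A may move
```

Moving a flow only at flowlet boundaries is what lets this scheme rebalance without reordering packets inside a burst.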

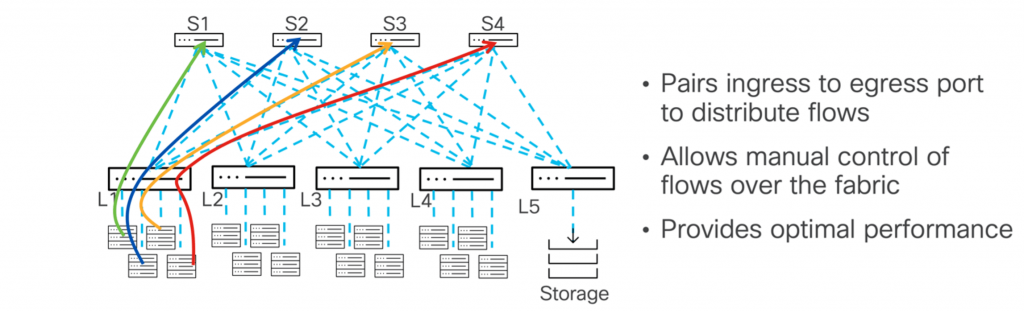

For those who want more control, the Nexus 9000 Series' DLB feature lets you fine-tune load balancing between input and output ports. By manually configuring pairings between input and output ports, you gain flexibility and precision in managing traffic. This lets you manage the load on output ports and reduce congestion. The approach can be configured through the command-line interface (CLI) or an application programming interface (API), which makes it practical for large-scale networks that need manual traffic distribution.

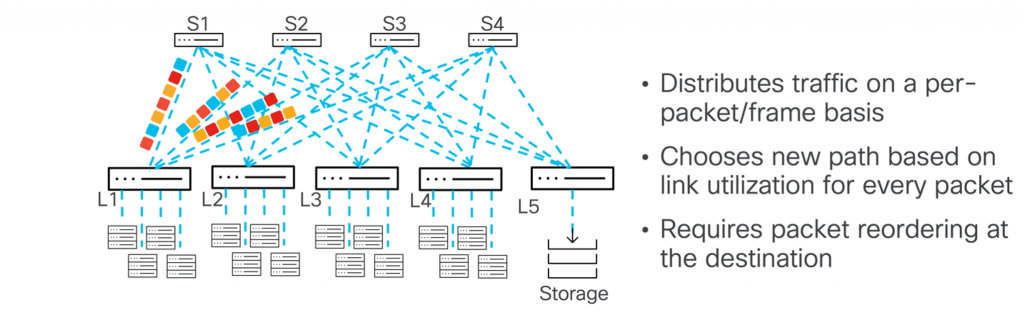

The Nexus 9000 Series can also spray packets across the fabric using per-packet load balancing, sending the packets of a flow over different paths to optimize traffic flow. Distributing packets randomly should, in theory, give the best link utilization. However, packets may arrive out of order at the destination host. The host must be able to reorder packets or process them as they arrive so that data is placed correctly in memory.
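The reordering requirement on the host can be illustrated with a minimal sketch. It assumes each packet carries a sequence number starting at 0, which is an assumption made for illustration; the `ReorderBuffer` class is hypothetical and does not bound its memory use the way a real NIC would have to.

```python
class ReorderBuffer:
    """Holds out-of-order packets until the missing ones arrive."""

    def __init__(self):
        self.next_seq = 0   # next sequence number owed to the application
        self.pending = {}   # seq -> payload for packets that arrived early

    def receive(self, seq, payload):
        """Accept one packet and return the payloads now deliverable in order."""
        self.pending[seq] = payload
        ready = []
        while self.next_seq in self.pending:
            ready.append(self.pending.pop(self.next_seq))
            self.next_seq += 1
        return ready


buf = ReorderBuffer()
print(buf.receive(1, "b"))  # arrives early, nothing deliverable yet
print(buf.receive(0, "a"))  # fills the gap, both packets are released
print(buf.receive(2, "c"))  # in order, released immediately
```

The alternative mentioned above, handling packets as they arrive, avoids this buffer by writing each packet directly to its final memory location.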

Performance improvements underway

Looking ahead, new standards are expected to improve performance further. The Ultra Ethernet Consortium (UEC), with Cisco's participation, has been developing standards that span multiple layers of the ISO/OSI stack to improve both AI and high-performance computing (HPC) workloads. Here is what that could mean for Nexus 9000 Series Switches and what to expect.

Scalable transport, better control

The consortium is developing a scalable, flexible, secure, and integrated transport solution: Ultra Ethernet Transport (UET).

The UET protocol defines a new connectionless transport method, meaning it does not require the traditional handshake that sets up a connection between communicating devices in advance. Transmission can begin without that setup step, and the associated state is discarded once the transmission completes. This approach improves scalability, reduces latency, and may reduce the cost of network interface cards (NICs).

The UET protocol includes congestion control that guides NICs to distribute traffic across all available paths, improving network utilization and reducing congestion. UET can use optional lightweight telemetry, including round-trip time measurements, to collect information about network path utilization and congestion and deliver it to the receiver. Packet trimming is another optional feature that helps detect congestion early. When a buffer is full, packet trimming forwards only the header of packets that would otherwise be dropped. This gives the receiver a clear way to tell the sender about congestion, which reduces retransmission delay.
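Packet trimming can be illustrated with a minimal sketch of a switch egress queue. It assumes a byte-limited buffer and a separate list for trimmed headers; the `TrimmingQueue` class, the 64-byte header size, and the buffer size are hypothetical and are not taken from the UET specification.

```python
from collections import deque


class TrimmingQueue:
    """Toy egress queue that trims payloads instead of dropping packets."""

    def __init__(self, capacity_bytes, header_bytes=64):
        self.capacity = capacity_bytes
        self.header_bytes = header_bytes  # assumed size of the header kept after trimming
        self.used = 0
        self.queue = deque()              # full packets waiting to be sent
        self.trimmed = []                 # headers of packets whose payload was cut

    def enqueue(self, seq, size):
        if self.used + size <= self.capacity:
            self.queue.append({"seq": seq, "size": size})
            self.used += size
            return "queued"
        # Buffer full: forward only the header so the receiver learns of the loss.
        self.trimmed.append({"seq": seq, "size": self.header_bytes})
        return "trimmed"


q = TrimmingQueue(capacity_bytes=3000)
for seq in range(3):
    print(seq, q.enqueue(seq, 1500))

# The receiver sees the trimmed headers and can request these sequence numbers again.
print([p["seq"] for p in q.trimmed])
```

Because the header still reaches the receiver, the loss is known after roughly one path delay rather than after a retransmission timeout.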

In UET, both endpoints (NICs) participate in the transport. The connection is initiated by the sender and terminated at the receiver. The network needs two traffic classes, one for data traffic and one for control traffic, where the control traffic acknowledges that data traffic has been received. For data traffic, explicit congestion notification (ECN) is used to signal congestion on the path. Data traffic can also be carried over a lossless network, which gives flexibility in how transport is deployed.

UEC-ready with the Nexus 9000 Series

Nexus 9000 Series Switches are UEC-ready, enabling a smooth transition to the new UET protocol across both existing and new infrastructure. All mandatory requirements are already supported. Cisco Nexus products powered by Silicon One also support optional features such as packet trimming. More optional features are expected to be supported on Nexus 9000 Series Switches in the near future.

Build a robust and reliable network foundation with the control and performance of the Nexus 9000 Series. Start using dynamic load balancing for your AI workloads today. As soon as the UEC standards are ratified, we will be ready, enabling a smooth upgrade to Ultra Ethernet NICs so you can take full advantage of Ultra Ethernet and keep your network ready for the future. Ready to optimize your future?

Start building your solution with the advanced features of the Nexus 9000 Series.
