Tired of the endless debate about which AI coding assistant is “the best”? What if the secret isn’t finding one perfect tool, but mastering a symphony of them? Forget the one-size-fits-all approach. The future of coding isn’t about loyalty; it’s about leveraging a diverse toolbox, each instrument tuned for a specific task.

This is the code post-scarcity era, where lines of code are no longer precious but a disposable commodity. In a world where you can generate 1,000 lines of code just to find a single bug and then delete them, the rules of the game have fundamentally changed.

“We aren’t just writing code anymore; we’re orchestrating intelligence.”

In a recent post on X, Andrej Karpathy, a leading voice in the AI world, shared a deep look into his evolving relationship with large language models (LLMs) in the coding workflow. His perspective reflects a broader shift: developers are now moving toward building an LLM workflow, a layered system of tools and practices that adapts to context, task, and personal style.

What follows is a deep dive into Karpathy’s multi-layered approach to LLM-assisted coding, an exploration of the real-world implications for developers at all levels, and a set of actionable insights to help you craft your own optimal AI coding stack.

AI-Powered Coding Stack: A Multi-Layered Approach to Productivity

The landscape of AI-assisted coding is vast and ever-evolving. Andrej Karpathy recently shared his personal workflow, revealing a nuanced, multi-layered approach to leveraging different AI tools. Instead of relying on a single perfect solution, he navigates a stack of tools, each with its own strengths and weaknesses. This isn’t just about using AI; it’s about understanding its different forms and applying them strategically.

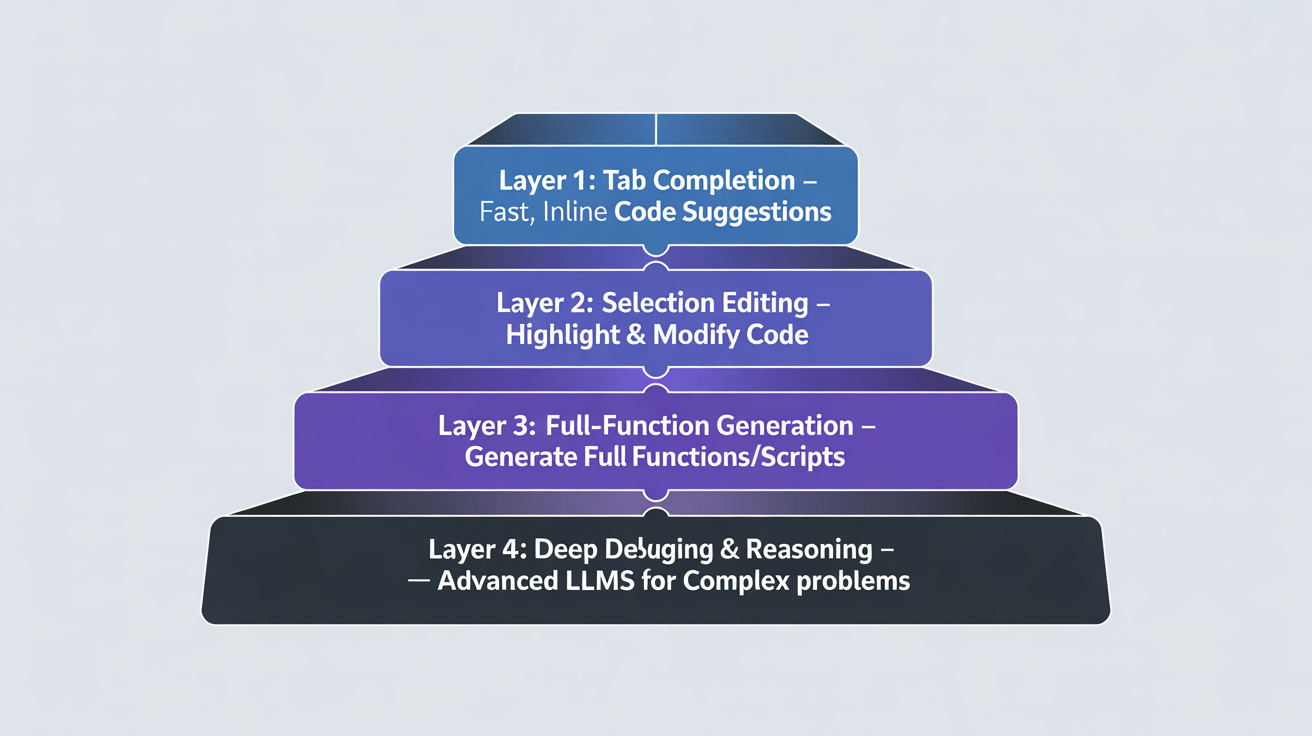

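Karpathy’s LLM workflow for developers involves the following layers:

- Tab completion

- Highlighting and modifying code

- Side-by-side assistants (e.g., Claude Code, Codex)

- A state-of-the-art model (GPT-5 Pro) as the last resort

Let’s break down this powerful, unconventional workflow, layer by layer, and see how you can adopt a similar mindset to supercharge your own productivity.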

Layer 1: Tab Complete

At the core of Karpathy’s workflow is one simple task: tab completion. He estimates it makes up about 75% of his AI assistance. Why? Because it’s the most efficient way to communicate with the model. Writing a few lines of code or comments is a high-bandwidth way of specifying a task. You’re not trying to describe a complex function in a text prompt; you’re simply demonstrating what you want, in the right place, at the right time.

This isn’t about AI writing the whole function for you. It’s about a collaborative, back-and-forth process. You provide the intent, and the AI provides immediate, context-aware suggestions. This workflow is fast, low-latency, and keeps you in the driver’s seat. It’s the equivalent of a co-pilot that finishes your sentences, not one that takes over the controls. For this, Karpathy relies on Cursor, an AI-powered code editor.

- Why it Works: It minimizes the “communication overhead.” Text prompts can be verbose and suffer from ambiguity. Code is direct.

- Example: You type def calculate_ and the AI instantly suggests calculate_total_price(items, tax_rate):. You’re guiding the process with minimal effort.
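To make the example above concrete, here is a minimal sketch of what such a completion might look like in Python. Only the signature comes from the example; the function body, the `(price, quantity)` item format, and the sample cart are assumptions made for illustration.

```python
# You type a short comment and the start of the signature...
# Total price of all items, including tax.
def calculate_total_price(items, tax_rate):
    # ...and the editor might propose a body like this one (hypothetical).
    # Assumes each item is a (price, quantity) pair.
    subtotal = sum(price * quantity for price, quantity in items)
    return subtotal * (1 + tax_rate)


# Hypothetical usage: two units at 10.0 and one unit at 5.0, with 25% tax.
cart = [(10.0, 2), (5.0, 1)]
total = calculate_total_price(cart, 0.25)
print(total)
```

The comment and the partial name carry most of the intent, which is why a few keystrokes can be enough to steer the suggestion.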

Because even the best models can get annoying, it helps to toggle the feature on and off. That way, you stay in control and avoid unwanted suggestions.

Layer 2: Highlighting and Modifying

The next step up in complexity is a targeted approach: highlighting a specific chunk of code and asking for a modification. This is a significant jump from simple tab completion. You’re not just asking for a new line; you’re asking the AI to understand the logic of an existing block and transform it.

This is a powerful technique for refactoring and optimization, a crucial part of an LLM workflow for developers. Need to convert a multi-line for loop into a concise list comprehension? Highlight the code and prompt the AI. Want to add error handling to a function? Highlight and ask. This workflow is perfect for micro-optimizations and focused improvements without rewriting the entire function from scratch.

- Why it Works: It provides a clear, bounded context. The AI isn’t guessing what you want from a broad prompt; it’s working within the explicit boundaries you’ve provided.

- Example: You highlight a block of nested if-then-else statements and prompt: “Convert this to a cleaner, more readable format.” The AI might suggest a switch statement or a series of logical and/or operations.
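The two refactorings mentioned in this layer can be sketched as before/after pairs in Python. All function names, rates, and thresholds below are hypothetical; the "after" versions show one plausible result of a highlight-and-prompt edit, not the output of any particular tool.

```python
# Before: a multi-line loop you might highlight.
def squares_of_evens_loop(numbers):
    result = []
    for n in numbers:
        if n % 2 == 0:
            result.append(n * n)
    return result


# After: the same logic as a list comprehension.
def squares_of_evens(numbers):
    return [n * n for n in numbers if n % 2 == 0]


# Before: nested if/else branches (hypothetical shipping rates).
def shipping_cost_nested(weight_kg, is_member):
    if is_member:
        if weight_kg <= 1:
            return 0.0
        else:
            return 2.0
    else:
        if weight_kg <= 1:
            return 3.0
        else:
            return 5.0


# After: flattened with an early return and conditional expressions.
def shipping_cost(weight_kg, is_member):
    light = weight_kg <= 1
    if is_member:
        return 0.0 if light else 2.0
    return 3.0 if light else 5.0
```

Because the selection bounds the change, it is easy to check that the rewritten version behaves like the original before accepting it.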

Layer 3: Side-by-Side Assistants (e.g., Claude Code, Codex)

When the task at hand is too large for a simple highlight-and-modify, the next layer of Karpathy’s stack comes into play: running a more powerful assistant like Claude Code or Codex on the side. These tools are for generating larger, more substantial chunks of code that are still relatively easy to specify in a prompt.

This is where “YOLO mode” (you-only-live-once) becomes tempting, but also risky. Karpathy notes that these models can go off-track, introducing unwanted complexity or simply doing dumb things. The key is not to run in YOLO mode. Be ready to hit ESC frequently. This is about using the AI as a fast-drafting tool, not a perfect, hands-off solution. You still need to be the editor and the final authority.

Using these side-by-side assistants brings its own set of pros and cons.

The Pros:

- Speed: Generates large amounts of code quickly. This is a huge time-saver for boilerplate, repetitive tasks, or code you’d never write otherwise.

- “Vibe-Coding”: Invaluable when you’re working in an unfamiliar language or domain (e.g., Rust or SQL). The AI can bridge the gap in your knowledge, allowing you to focus on the logic.

- Ephemeral Code: This is the heart of the “code post-scarcity era.” Need a 1,000-line visualization to debug a single issue? The AI can create it in minutes. You use it, you find the bug, you delete it. No time lost.

The Cons:

- “Bad Taste”: Karpathy observes that these models often lack a sense of “code taste.” They can be overly defensive (too many try/catch), overcomplicate abstractions, or duplicate code instead of creating helper functions. A final “cleanup” pass is almost always necessary.

- Poor Teachers: Trying to get them to explain code as they write it is often a frustrating experience. They’re built for generation, not pedagogy.

- Over-bloating: They tend to create verbose, nested if-then-else constructs when a one-liner would suffice. This is a direct result of their training data and lack of human intuition.
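A small Python sketch of the kind of cleanup pass described above, using a hypothetical age check. The verbose version is invented to mimic the overly defensive, nested style; it is not the actual output of any assistant.

```python
# Before: blanket try/except plus nested branches for a one-line condition.
def is_adult_verbose(age):
    try:
        if age is not None:
            if age >= 18:
                return True
            else:
                return False
        else:
            return False
    except Exception:
        return False


# After the cleanup pass: a single boolean expression.
# Note the deliberate behavior change: dropping the blanket except means an
# invalid type (e.g. a string) now raises TypeError instead of silently
# returning False.
def is_adult(age):
    return age is not None and age >= 18
```

Whether the blanket `except` should stay is a judgment call that depends on the caller; the point is that the human editor makes that decision explicitly during cleanup.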

Layer 4: The Final Frontier

When all other tools fail, Karpathy turns to his final, most powerful layer: a state-of-the-art model like GPT-5 Pro. This is the nuclear option, reserved for the hardest, most intractable problems. This tool isn’t for writing code snippets or boilerplate; it’s for deep, complex problem-solving.

In the context of an LLM workflow for developers, this layer represents the top of the stack: the point where raw model power is applied strategically, not routinely. It’s where subtle bug hunting, deep research, and complex reasoning come into play. Karpathy describes scenarios where he, his tab-complete, and his side-by-side assistant are all stuck on a bug for ten minutes, but GPT-5 Pro, given the entire context, can dig for ten minutes and find a truly subtle, hidden issue.

Why Is It the Last Resort?

GPT-5 Pro and models in its league are great, but they come with their own set of trade-offs, some of which are:

- Latency: It’s slower. You can’t use it for rapid, real-time coding. You have to wait for its deep, comprehensive analysis.

- Scope: Its power is in its ability to understand a vast context. It can “dig up all kinds of esoteric docs and papers,” providing a level of insight that simpler models can’t match.

- Strategic Use: This isn’t a daily driver. It’s a strategic weapon for otherwise impossible tasks, like complex architectural cleanup suggestions or a full literature review on a specific coding approach.

Karpathy’s workflow is a masterclass in strategic tool usage. It’s not about finding the perfect tool but about building a cohesive, multi-layered system. Each tool has a specific role, a specific time, and a specific purpose.

- Tab complete for high-bandwidth, in-the-moment tasks.

- Highlighting for focused refactoring and quick modifications.

- Side-by-side assistants for generating larger chunks of code and exploring unfamiliar territory.

- A powerful, state-of-the-art model for debugging the truly intractable problems and performing deep research.

This is the future of work for leaders, students, and professionals alike. The era of the single-tool hero is over. Karpathy also mentions a new kind of “anxiety” that many developers are grappling with: the fear of not being on the frontier.

It’s real, but the solution isn’t to chase the next big thing. The solution is to understand the ecosystem, learn to “stitch up” the pros and cons of different tools, and become an orchestra conductor, not just a single musician.

Conclusion

Your ability to thrive in this new era won’t be measured by the lines of code you write, but by the complexity of the problems you solve and the speed at which you solve them. AI is a tool, but your human intuition, taste, and strategic thinking are the secret weapons. It’s not about finding one perfect tool for everything; it’s about understanding the requirements of each task. For developers, this means building an LLM workflow that complements your skills: recognizing your comfort with different tools and creating a system that works for you. Karpathy shared his own workflow, but you need to test, adapt, and create one that aligns with your unique style and goals.

Are you ready to stop chasing the perfect tool and start building the perfect workflow?
