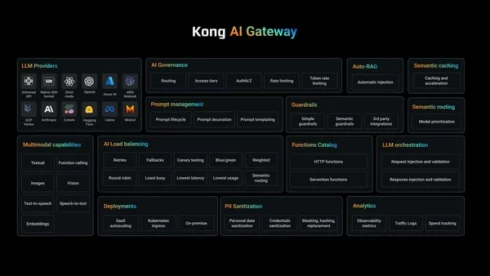

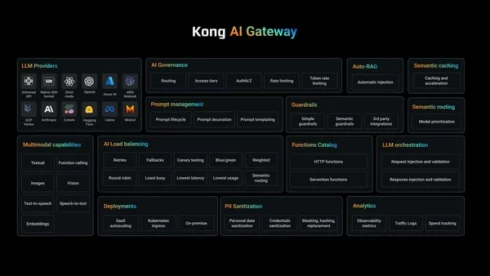

Kong has introduced updates to its AI Gateway, a platform for governance and security of LLMs and other AI assets.

One of the new features in AI Gateway 3.10 is a RAG Injector. According to the company, it reduces LLM hallucinations by automatically querying the vector database and inserting relevant data into the prompt, so the LLM augments its results with known information sources.

It also improves security by placing the vector database behind the Kong AI Gateway. It improves developer productivity as well, because developers can focus on other work instead of trying to reduce hallucinations themselves.

Another update in AI Gateway 3.10 is an automated personally identifiable information (PII) sanitization plugin that protects over 20 categories of PII across 12 different languages. It works with most major AI providers and can run at the global platform level, so developers don't have to manually code sanitization into every application they build.

According to Kong, other sanitization options are often limited to replacing sensitive data with a token or removing it entirely. This plugin can optionally reinsert the sanitized data into the response before it reaches the end user, so users get the data they need without compromising privacy.

“As artificial intelligence continues to evolve, organizations must adopt robust AI infrastructure to harness its full potential,” said Marco Palladino, CTO and co-founder of Kong. “With this latest version of AI Gateway, we’re equipping our customers with the tools necessary to implement Agentic AI securely and effectively, ensuring seamless integration without compromising user experience. Moreover, we’re helping solve some of the biggest challenges with LLMs, such as cutting down on hallucinations and improving data security and governance.”