At Amazon EMR, we constantly listen to our customers’ challenges with running large-scale Amazon EMR HBase deployments. One consistent pain point that kept emerging is unpredictable application behavior due to garbage collection (GC) pauses on HBase. Customers running critical workloads on HBase were experiencing occasional latency spikes due to varying GC pauses, which were particularly impactful when they occurred during peak business hours.

To reduce this unpredictable impact on business-critical applications running on HBase, we turned to Oracle’s Z Garbage Collector (ZGC), specifically its generational support introduced in JDK 21. Generational ZGC delivers consistent sub-millisecond pause times that dramatically reduce tail latency.

In this post, we examine how unpredictable GC pauses affect business-critical workloads and the benefits of enabling generational ZGC in HBase. We also cover additional GC tuning techniques to improve application throughput and reduce tail latency. Amazon EMR 7.10.0 introduces new configuration parameters that allow you to seamlessly configure and tune the garbage collector for HBase RegionServers.

By incorporating generational collection into ZGC’s ultra-low pause architecture, generational ZGC efficiently handles both short-lived and long-lived objects, making it exceptionally well suited to HBase’s workload characteristics:

- Handling mixed object lifetimes – HBase operations create a mix of short-lived objects (such as temporary buffers for read/write operations) and long-lived objects (such as cached data blocks and metadata). Generational ZGC can efficiently manage both, reducing overall GC frequency and impact.

- Adapting to workload patterns – As workload patterns change throughout the day (for instance, from write-heavy ingestion to read-heavy analytics), generational ZGC adapts its collection strategy, maintaining optimal performance.

- Scaling with heap size – As data volumes grow and HBase clusters require larger heaps, generational ZGC maintains its sub-millisecond pause times, providing consistent performance even as you scale up.

Understanding the impact of GC pauses on HBase

When running HBase RegionServers, the JVM heap can accumulate a large number of objects, both short-lived (temporary objects created during operations) and long-lived (cached data, metadata). Traditional garbage collectors like the Garbage-First Garbage Collector (G1 GC) must pause application threads during certain phases of garbage collection, particularly during “stop-the-world” (STW) events. GC pauses can have several impacts on HBase:

- Latency spikes – GC pauses introduce latency spikes, often impacting the application’s tail latencies (p99.9 and p99.99), which can lead to client request timeouts and inconsistent response times.

- Application availability – All application threads are halted during STW events, which negatively impacts overall application availability.

- RegionServer failures – If GC pauses exceed the configured ZooKeeper session timeout, they can lead to RegionServer failures.
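To make the latency-spike effect in the first bullet concrete, the following is a minimal Python sketch using made-up numbers, not benchmark data. The `percentile` helper and the sample latencies are hypothetical, and nearest-rank is only one of several common percentile definitions.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[min(rank, len(ordered)) - 1]


# Hypothetical workload: 10,000 requests that normally take 2 ms,
# 20 of which were stalled behind a 500 ms stop-the-world pause.
latencies_ms = [2.0] * 9980 + [502.0] * 20

for p in (50, 99, 99.9, 99.99):
    print(f"p{p}: {percentile(latencies_ms, p)} ms")
```

In this illustrative data set only 0.2% of requests hit a pause, so the median and p99 stay at 2 ms while p99.9 and p99.99 jump to 502 ms. This is why rare pauses show up mainly in the tail percentiles.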

The HBase RegionServer reports whenever there’s an unusually long GC pause using the JvmPauseMonitor. The following log entry shows an example of GC pauses reported by the HBase RegionServer. During YCSB benchmarking, G1 GC exhibited 75 such pauses over a 7-hour period, whereas generational ZGC showed no long pauses under identical workload and testing conditions.

G1 GC pauses are proportional to the pressure on the heap and the object allocation patterns. Consequently, the pauses might worsen if the heap is under too much load, whereas generational ZGC maintains its pause time targets even under high pressure.
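To count long pauses in your own RegionServer logs, a small script along the following lines may help. It is a sketch under assumptions: the message text in the regular expression reflects the wording JvmPauseMonitor is generally known to emit, so verify it against your own logs before relying on it. The sample lines, timestamps, and the `pause_durations_ms` helper are invented for illustration and are not taken from the benchmark.

```python
import re

# Assumed JvmPauseMonitor message wording; check against your actual logs.
PAUSE_RE = re.compile(
    r"Detected pause in JVM or host machine \(eg GC\): "
    r"pause of approximately (\d+)ms"
)


def pause_durations_ms(log_lines, threshold_ms=0):
    """Return the reported pause durations (ms) at or above threshold_ms."""
    durations = []
    for line in log_lines:
        match = PAUSE_RE.search(line)
        if match:
            duration = int(match.group(1))
            if duration >= threshold_ms:
                durations.append(duration)
    return durations


# Hypothetical log lines, made up for this example.
SAMPLE_LINES = [
    "WARN util.JvmPauseMonitor: Detected pause in JVM or host machine "
    "(eg GC): pause of approximately 2315ms",
    "INFO regionserver.HRegionServer: some unrelated message",
    "INFO util.JvmPauseMonitor: Detected pause in JVM or host machine "
    "(eg GC): pause of approximately 120ms",
]

long_pauses = pause_durations_ms(SAMPLE_LINES, threshold_ms=1000)
print(f"{len(long_pauses)} pause(s) of at least 1000 ms: {long_pauses}")
```

Running the same count against logs from a G1 GC run and a generational ZGC run under the same workload gives a simple before-and-after comparison.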

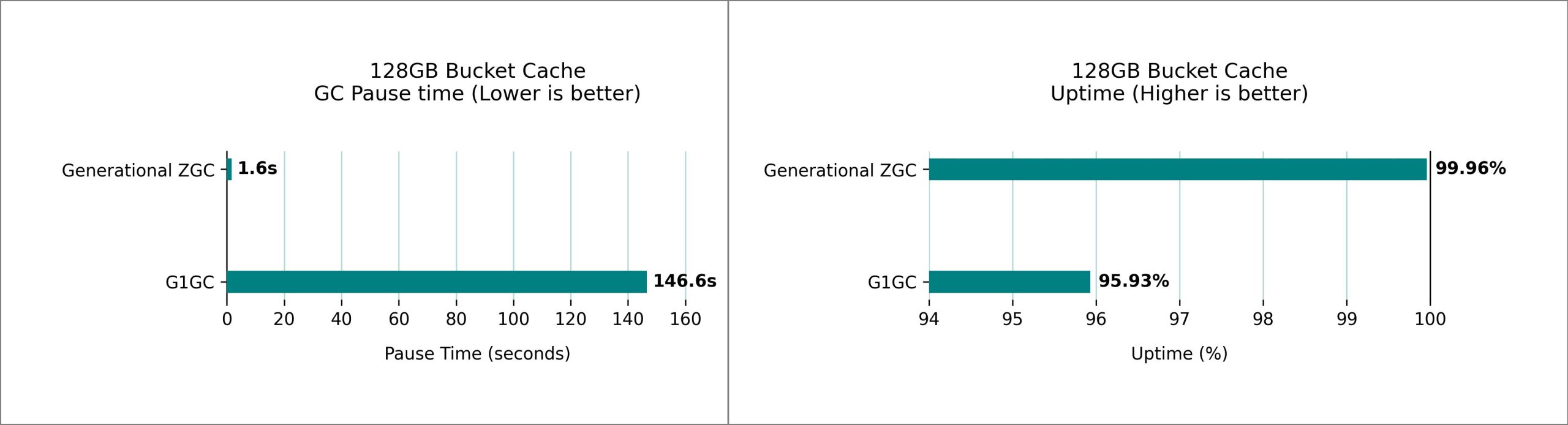

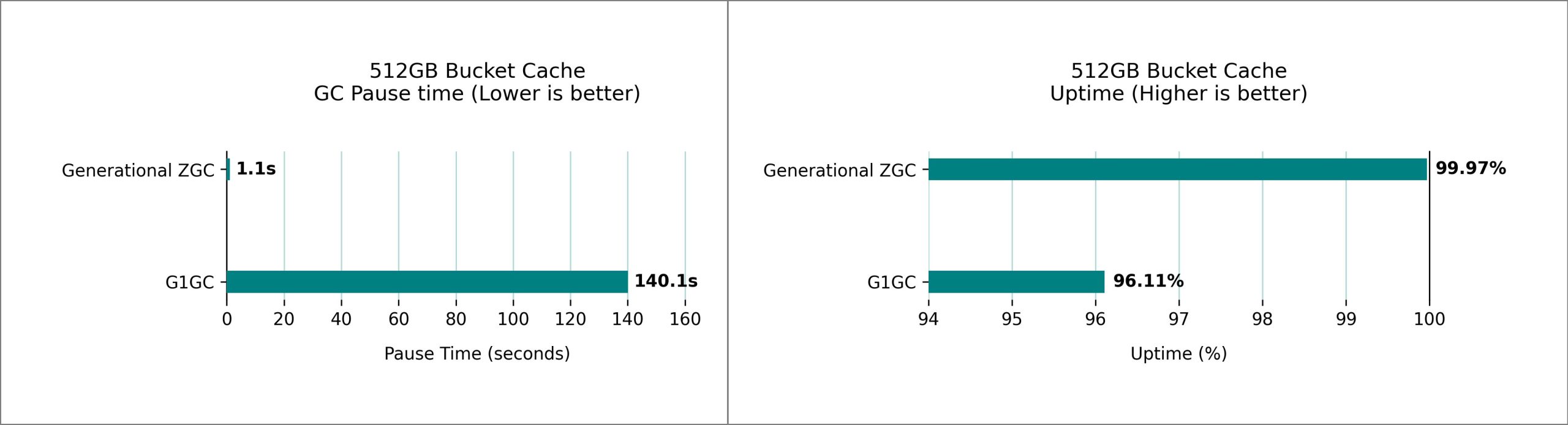

Pause time and availability (uptime) comparison: Generational ZGC vs. G1 GC in Amazon EMR HBase

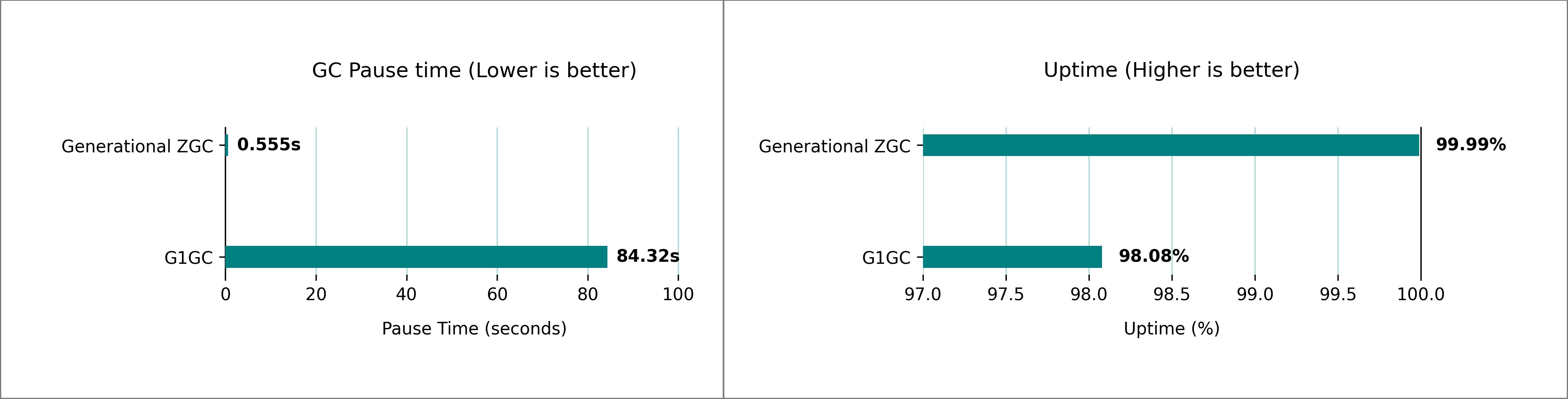

Our testing revealed significant differences in GC pause time between generational ZGC and G1 GC for HBase on Amazon EMR 7.10. We used a cluster of one m5.4xlarge primary node and five m5.4xlarge core nodes, and ran multiple iterations of 1-billion-row YCSB workloads to compare GC pauses and uptime percentage. On our test cluster, total GC pause time improved from over 1 minute, 24 seconds to under 1 second for an execution lasting over an hour, improving application uptime from 98.08% to 99.99%.
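The relationship between pause time and uptime can be sketched as follows, assuming uptime means the share of wall-clock time not spent in GC pauses. That definition is an assumption, because the exact formula used for the figures above is not stated. The `uptime_percent` helper and the 3,600-second run length are illustrative; the benchmark runs were somewhat longer than an hour, so this does not reproduce the reported percentages exactly.

```python
def uptime_percent(pause_seconds, wall_clock_seconds):
    """Share of wall-clock time (in percent) not spent in GC pauses."""
    if wall_clock_seconds <= 0:
        raise ValueError("wall_clock_seconds must be positive")
    if not 0 <= pause_seconds <= wall_clock_seconds:
        raise ValueError("pause_seconds must be within the run duration")
    return 100.0 * (1.0 - pause_seconds / wall_clock_seconds)


# Illustrative run of exactly one hour (3,600 s).
print(f"84 s of pauses: {uptime_percent(84, 3600):.2f}% uptime")
print(f" 1 s of pauses: {uptime_percent(1, 3600):.2f}% uptime")
```

Even a cumulative pause time of under a minute and a half removes more than two percentage points of availability from a one-hour window, which is why keeping pauses in the millisecond range matters for uptime.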

We conducted extensive performance testing comparing G1 GC and generational ZGC on HBase clusters running on Amazon EMR, using the default heap settings automatically configured based on Amazon Elastic Compute Cloud (Amazon EC2) instance type. The following image shows the comparison in both GC pause time and uptime percentage at a peak load of 300,000 requests per second (data sampled over 1 hour).

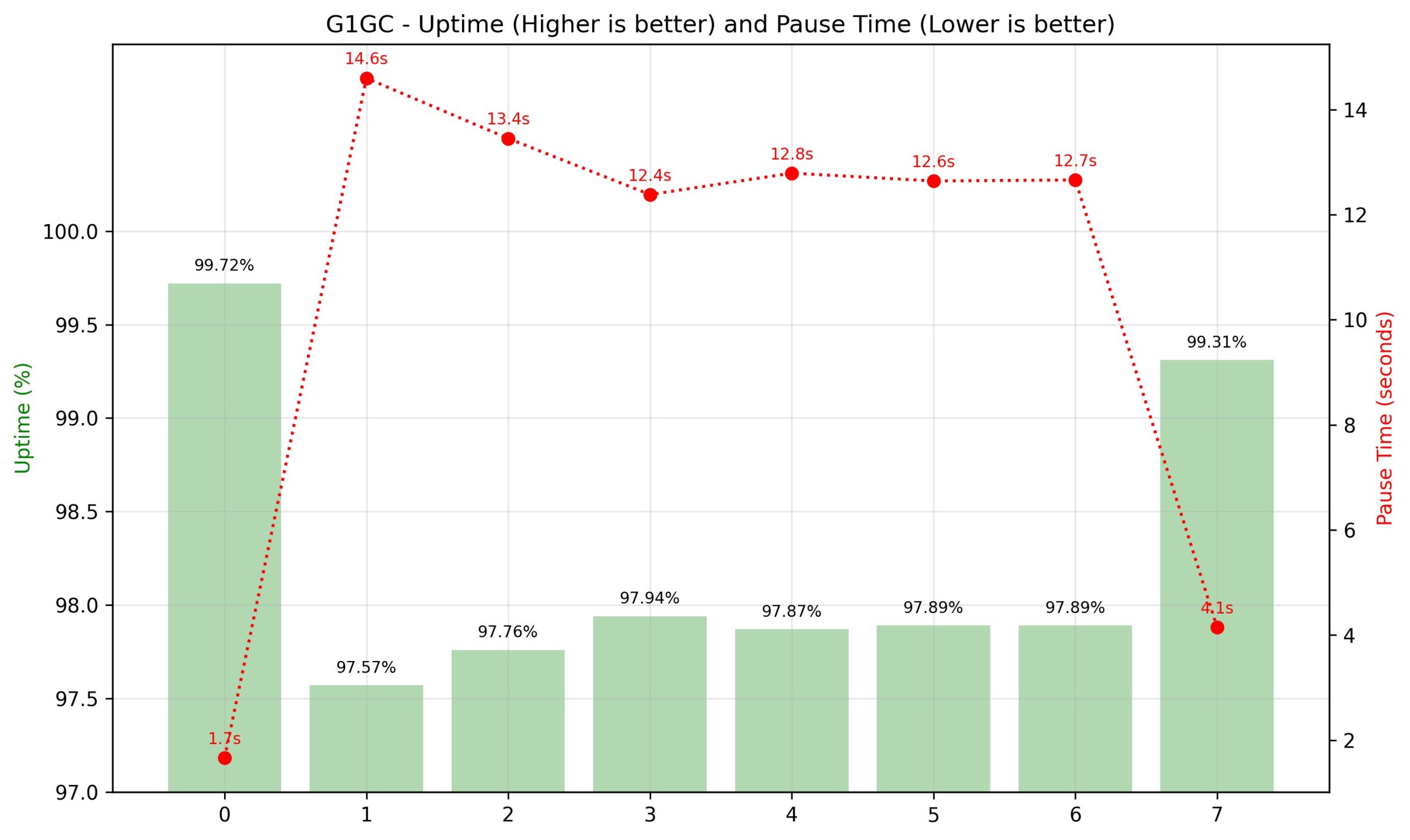

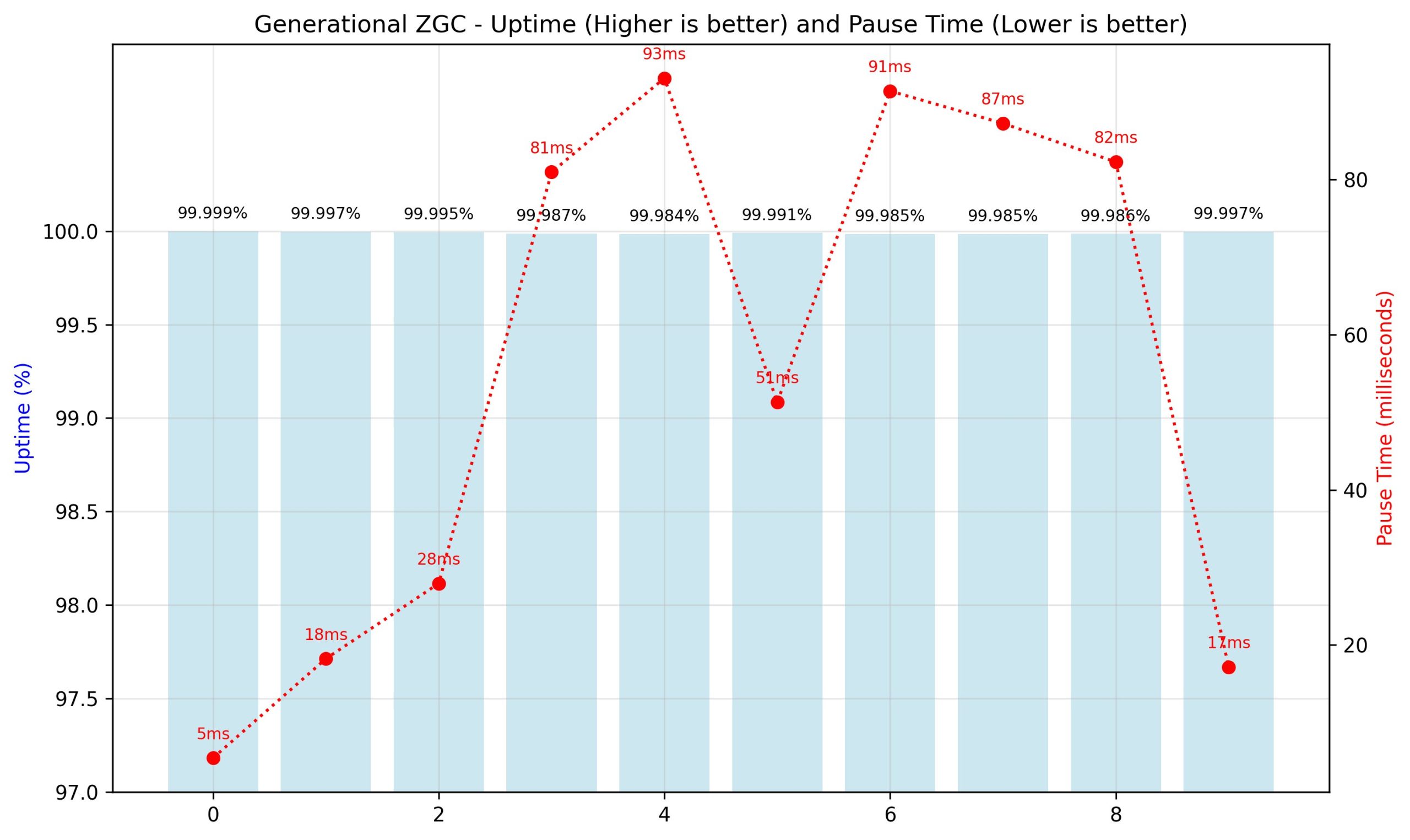

The following figures show the breakdown of the 1-hour runtime in 10-minute intervals. The left vertical axis measures the uptime, the right vertical axis measures the GC pause time, and the horizontal axis shows the interval. Generational ZGC maintained consistent uptime with pause times in milliseconds, whereas G1 GC showed inconsistent, reduced uptime with pause times in seconds.

Tail latency comparison: Generational ZGC vs. G1 GC in Amazon EMR HBase

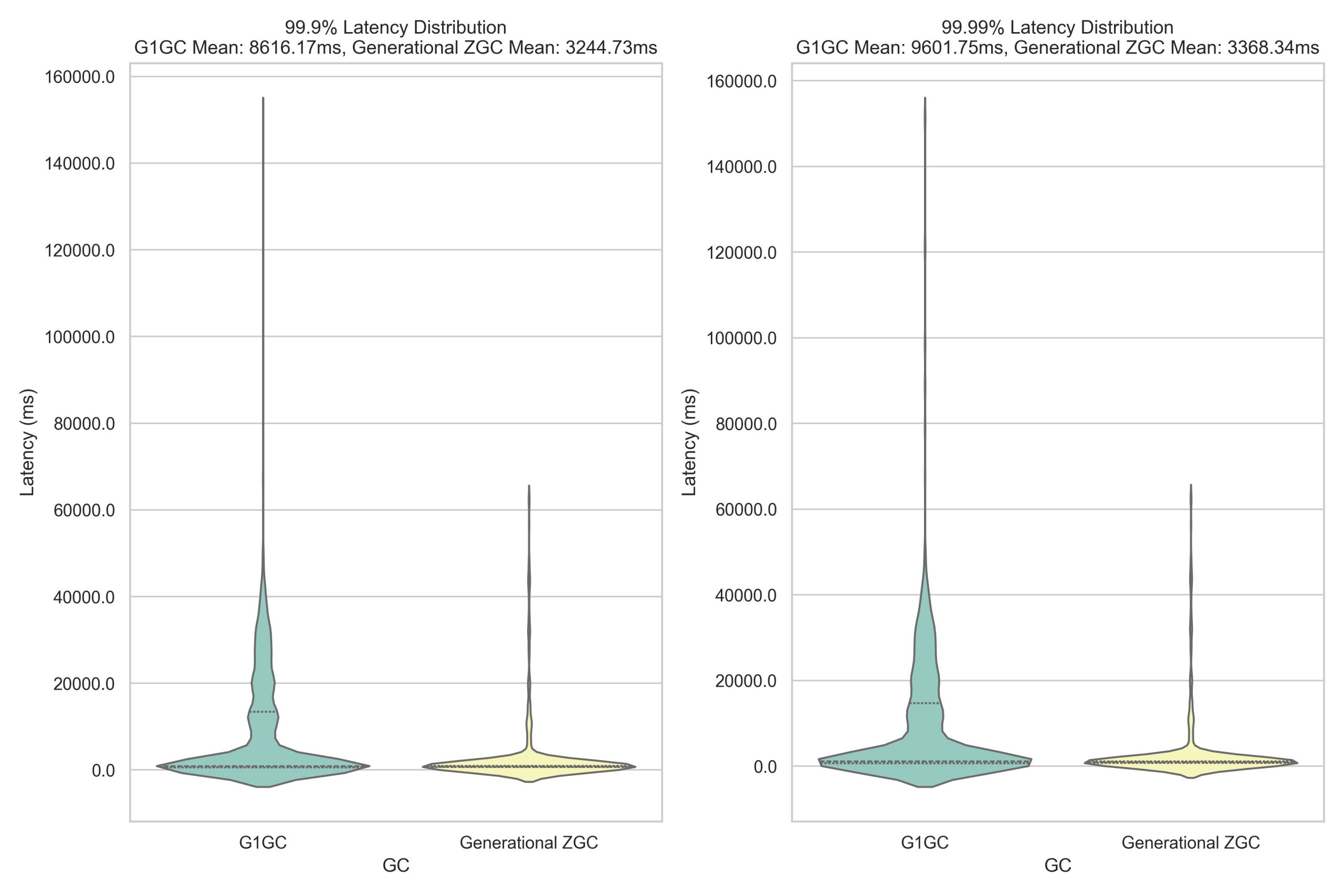

One of the most compelling advantages of generational ZGC over G1 GC is its predictable garbage collection behavior and the resulting impact on application tail latency. G1 GC’s collection triggers are non-deterministic, meaning pause times can vary significantly and occur at unpredictable intervals. These unexpected pauses, though usually manageable, can create latency spikes that particularly affect the slowest percentile of operations. In contrast, generational ZGC maintains consistent, sub-millisecond pause times throughout its operation. This predictability proves crucial for applications requiring stable performance, especially at the highest percentiles of latency (99.9th and 99.99th percentiles). Our YCSB benchmark testing reveals the real-world impact of these different approaches. The following graph illustrates the tail latency distribution for G1 GC and generational ZGC over a 2-hour sampling period:

Improvements to BucketCache

BucketCache is an off-heap cache in HBase that’s used to cache frequently accessed data blocks and minimize disk I/O. The bucket cache and heap memory work in conjunction, which might increase contention on the heap depending on the workload. Generational ZGC maintains its pause time targets even with a terabyte-sized bucket cache. We benchmarked multiple HBase clusters with varying bucket cache sizes and a 32 GB RegionServer heap. The following figures show the peak pause times observed over a 1-hour sampling period, comparing G1 GC and generational ZGC performance.

Enabling this feature and additional fine-tuning parameters

To enable this feature, follow the configurations mentioned in the Performance Considerations. In the following sections, we discuss additional fine-tuning parameters to tailor the configuration for your specific use case.

Fixed JVM heap

Batch processing jobs and short-lived applications benefit from dynamic allocation’s ability to adapt to varying input sizes and processing demands when multiple applications coexist on the same cluster and run with resource constraints. The memory footprint can expand during peak processing and contract when the workload diminishes. However, for production HBase deployments without any coexisting applications on the same cluster, fixed heap allocation offers stable, reliable performance.

Dynamic heap allocation is when the JVM flexibly grows and shrinks its memory usage between minimum (-Xms) and maximum (-Xmx) limits based on application needs, returning unused memory to the operating system. However, this flexibility comes at a cost: the JVM is constantly negotiating with the operating system for memory, which leads to performance overhead and memory fragmentation. In contrast, fixed heap allocation pre-allocates a constant amount of memory for the JVM at startup and maintains it throughout runtime, providing better performance by reducing memory negotiation overhead with the operating system. To enable this feature, use the following configuration:
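At the JVM level, a fixed heap means that -Xms and -Xmx carry the same value. As a quick sanity check on whichever options string ends up being passed to the RegionServer, a minimal Python sketch might look like the following. The helper names `heap_bounds` and `is_fixed_heap` are hypothetical, and the example option strings are illustrative rather than the Amazon EMR defaults.

```python
import re

# Size suffixes accepted by -Xms/-Xmx (binary multiples).
_UNITS = {"": 1, "k": 1024, "m": 1024**2, "g": 1024**3, "t": 1024**4}
_HEAP_FLAG_RE = re.compile(r"-(Xms|Xmx)(\d+)([kKmMgGtT]?)\b")


def heap_bounds(jvm_opts):
    """Return (xms_bytes, xmx_bytes); an entry is None if the flag is absent.
    If a flag appears more than once, the last occurrence wins."""
    found = {"Xms": None, "Xmx": None}
    for flag, size, unit in _HEAP_FLAG_RE.findall(jvm_opts):
        found[flag] = int(size) * _UNITS[unit.lower()]
    return found["Xms"], found["Xmx"]


def is_fixed_heap(jvm_opts):
    """True when both flags are present and request the same heap size."""
    xms, xmx = heap_bounds(jvm_opts)
    return xms is not None and xms == xmx


# Illustrative option strings only.
print(is_fixed_heap("-Xms32g -Xmx32g -XX:+UseZGC -XX:+ZGenerational"))
print(is_fixed_heap("-Xms4g -Xmx32g -XX:+UseZGC -XX:+ZGenerational"))
```

The first string describes a fixed 32 GB heap; the second lets the heap grow from 4 GB to 32 GB, which is the dynamic behavior described above.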

Enable pre-touch

Applications with large heaps can experience more significant pauses when the JVM needs to allocate and fault in new memory pages. Pre-touch (-XX:+AlwaysPreTouch) instructs the JVM to physically touch and commit all memory pages during heap initialization, rather than waiting until they’re first accessed at runtime. This early commitment reduces the latency of on-demand page faults and memory mappings that occur when pages are first accessed, resulting in more predictable performance, especially under heavy load. By pre-touching memory pages at startup, you trade a slightly longer JVM startup time for more consistent runtime performance. To enable pre-touch for your HBase cluster, use the following configuration:

Increasing memory mappings for large heaps

Depending on the workload and scale, you might need to increase the Java heap size to accommodate large data in memory. When using generational ZGC with a large heap, it’s important to also increase the operating system’s memory mapping limit (vm.max_map_count).

When a ZGC-enabled application starts, the JVM proactively checks the system’s vm.max_map_count value. If the limit is too low to support the configured heap, it issues the following warning:

To increase the memory mappings, use the following configuration and adjust the count value in the command based on the heap size of the application.
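As a rough aid for choosing the count value, the following Python sketch estimates how many mappings a given heap size might need. The constants are assumptions rather than documented guarantees: a 2 MiB granule, up to three mappings per granule, and 20% headroom for the rest of the process, modeled on the heuristic ZGC’s Linux startup check has used. The number printed in the JVM warning itself is authoritative. The function names are hypothetical; 65530 is the usual Linux default for vm.max_map_count.

```python
GRANULE_BYTES = 2 * 1024**2          # assumed ZGC granule size (2 MiB)
DEFAULT_LINUX_MAX_MAP_COUNT = 65530  # common Linux default


def estimate_required_map_count(max_heap_bytes,
                                mappings_per_granule=3,
                                headroom_percent=20):
    """Rough estimate of the mappings needed for a heap of this size.
    All multipliers are assumptions; prefer the JVM's own warning value."""
    granules = max_heap_bytes // GRANULE_BYTES
    return granules * mappings_per_granule * (100 + headroom_percent) // 100


def needs_increase(max_heap_bytes, current_limit=DEFAULT_LINUX_MAX_MAP_COUNT):
    """True when the estimate exceeds the current vm.max_map_count."""
    return estimate_required_map_count(max_heap_bytes) > current_limit


GIB = 1024**3
for heap_gib in (32, 64, 128):
    required = estimate_required_map_count(heap_gib * GIB)
    verdict = "raise limit" if needs_increase(heap_gib * GIB) else "default is enough"
    print(f"{heap_gib} GiB heap: about {required} mappings ({verdict})")
```

Under these assumptions, a 32 GiB heap fits within the default limit, while heaps of 64 GiB and above call for a higher vm.max_map_count.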

Conclusion

The introduction of generational ZGC and fixed heap allocation for HBase on Amazon EMR marks a significant leap forward in predictable performance and tail latency reduction. By addressing the long-standing challenges of GC pauses and memory management, these features unlock new levels of efficiency and stability for Amazon EMR HBase deployments. Although the performance improvements vary depending on workload characteristics, you can expect to see significant improvements in your Amazon EMR HBase clusters’ responsiveness and stability. As data volumes continue to grow and low-latency requirements become increasingly stringent, features like generational ZGC and fixed heap allocation become indispensable. We encourage HBase users on Amazon EMR to enable these features and experience the benefits firsthand. As always, we recommend testing in a staging environment that mirrors your production workload to fully understand the impact and optimize configurations for your specific use case.

Stay tuned for more innovations as we continue to push the boundaries of what’s possible with HBase on Amazon EMR.

About the authors

Vishal Chaudhary is a Software Development Engineer at Amazon EMR. His expertise is in Amazon EMR, HBase, and the Hive query engine. His dedication to solving distributed system problems helps Amazon EMR achieve higher performance improvements.


Ramesh Kandasamy is an Engineering Manager at Amazon EMR. He’s a long-tenured Amazonian dedicated to solving distributed systems problems.
