In Half 1 of this sequence, we mentioned elementary operations to manage the lifecycle of your Amazon Managed Service for Apache Flink utility. If you’re utilizing higher-level instruments comparable to AWS CloudFormation or Terraform, the device will execute these operations for you. Nevertheless, understanding the basic operations and what the service routinely does can present some stage of Mechanical Sympathy to confidently implement a extra strong automation.

Within the first a part of this sequence, we targeted on the blissful paths. In a perfect world, failures don’t occur, and each change you deploy works completely. Nevertheless, the true world is much less predictable. Quoting Werner Vogels, Amazon’s CTO, “Every thing fails, on a regular basis.”

On this submit, we discover failure eventualities that may occur throughout regular operations or whenever you deploy a change or scale the applying, and find out how to monitor operations to detect and get well when one thing goes improper.

The much less blissful path

A sturdy automation should be designed to deal with failure eventualities, specifically throughout operations. To do this, we have to perceive how Apache Flink can deviate from the blissful path. Because of the nature of Flink as a stateful stream processing engine, detecting and resolving failure eventualities requires totally different methods in comparison with different long-running functions, comparable to microservices or short-lived serverless capabilities (comparable to AWS Lambda).

Flink’s conduct on runtime errors: The fail-and-restart loop

When a Flink job encounters an sudden error at runtime (an unhandled exception), the traditional conduct is to fail, cease the processing, and restart from the most recent checkpoint. Checkpoints enable Flink to assist knowledge consistency and no knowledge loss in case of failure. Additionally, as a result of Flink is designed for stream processing functions, which run constantly, if the error occurs once more, the default conduct is to maintain restarting, hoping the issue is transient and the applying will ultimately get well the traditional processing.In some circumstances, the issue isn’t transient, nonetheless. For instance, whenever you deploy a code change that incorporates a bug, inflicting the job to fail as quickly because it begins processing knowledge, or if the anticipated schema doesn’t match the information within the supply, inflicting deserialization or processing errors. The identical state of affairs may additionally occur should you mistakenly modified a configuration that stops a connector to succeed in the exterior system. In these circumstances, the job is caught in a fail-and-restart loop, indefinitely, or till you actively force-stop it.

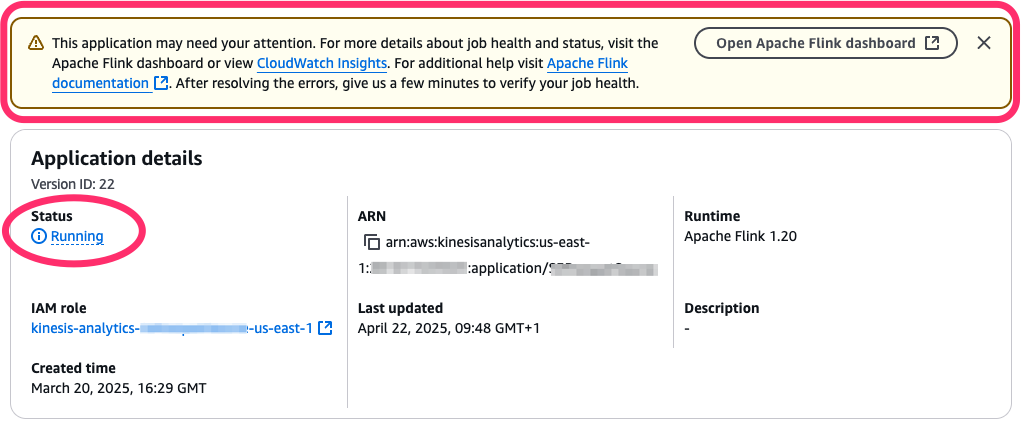

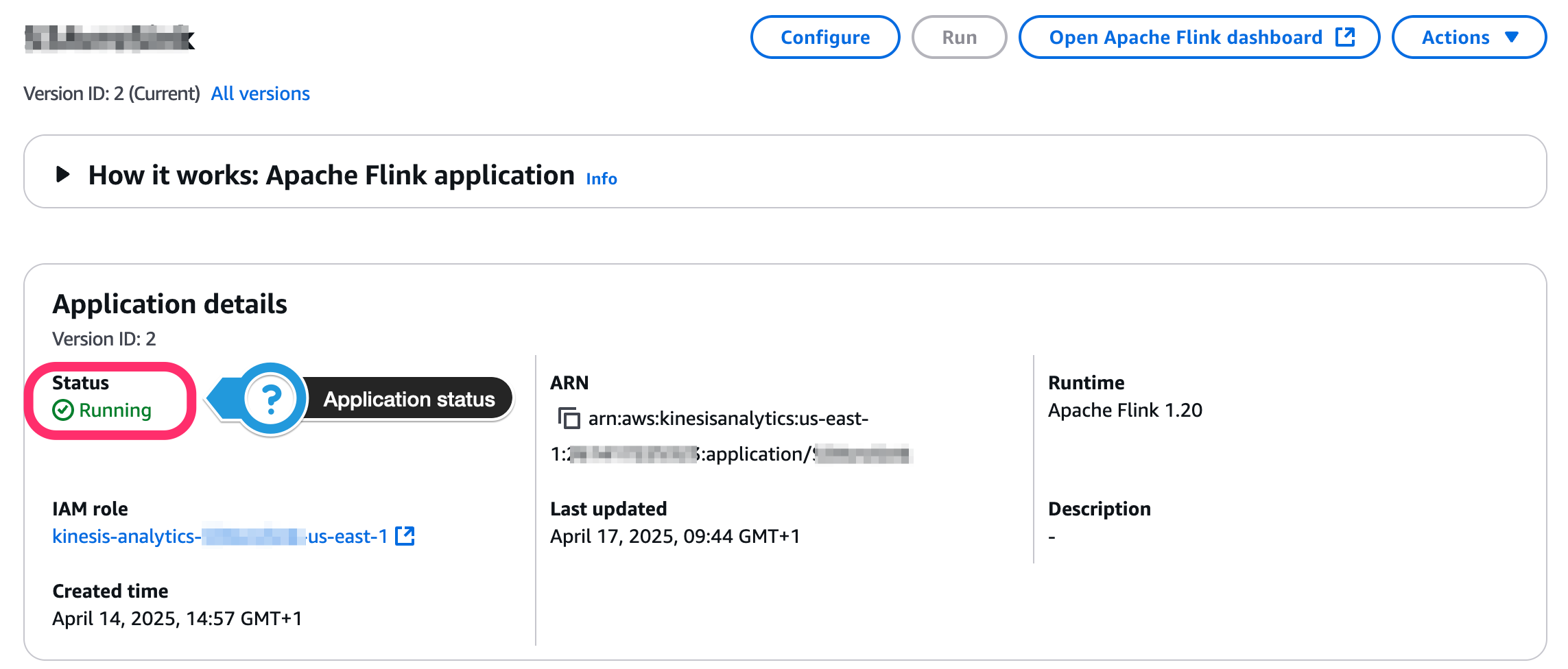

When this occurs, the Managed Service for Apache Flink utility standing could be RUNNING, however the underlying Flink job is definitely failing and restarting. The AWS Administration Console provides you a touch, pointing that the applying may want consideration (see the next screenshot).

Within the following sections, we discover ways to monitor the applying and job standing, to routinely react to this case.

When beginning or updating the applying goes improper

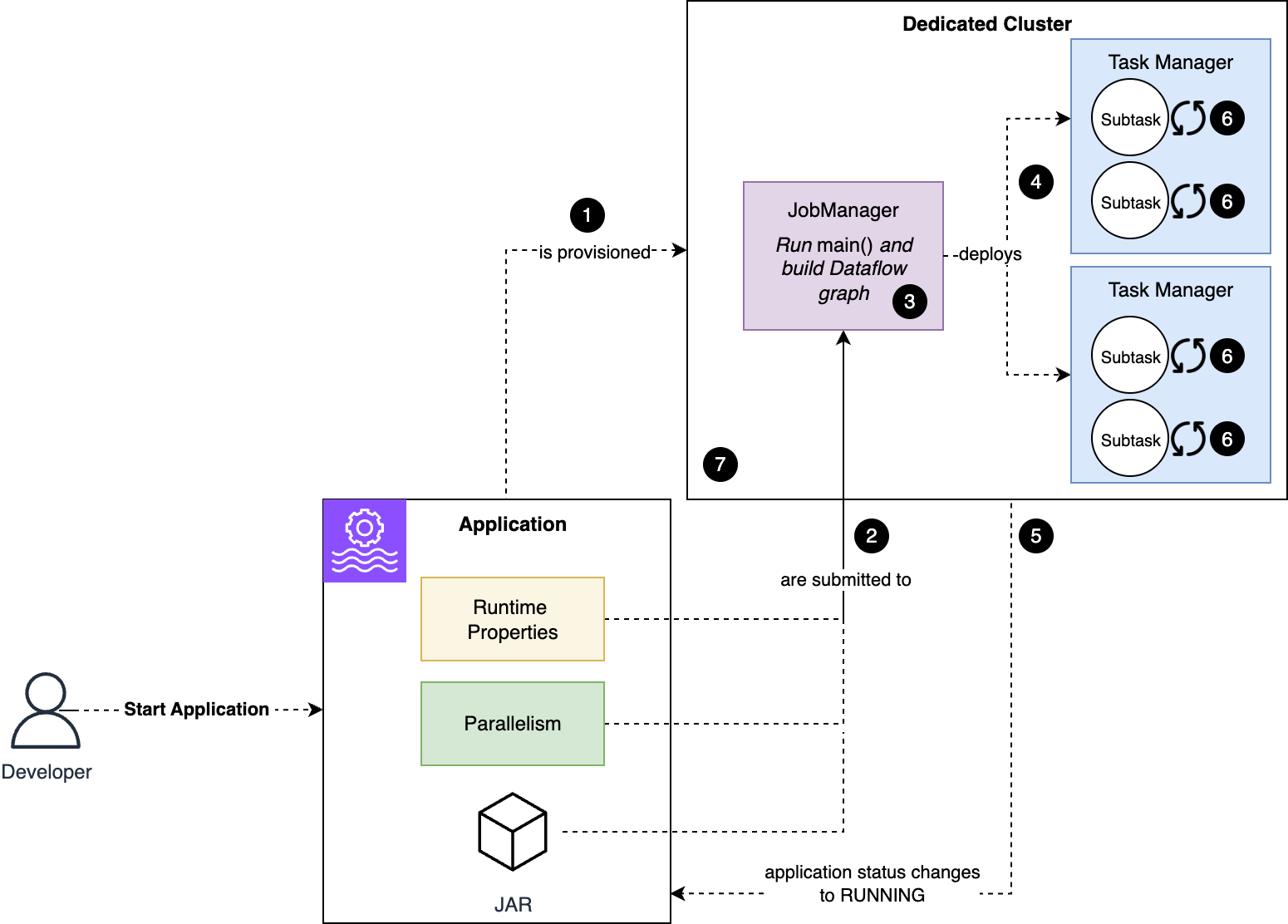

To know the failure mode, let’s overview what occurs routinely whenever you begin the applying, or when the applying restarts after you issued UpdateApplication command, as we explored in Half 1 of this sequence. The next diagram illustrates what occurs when an utility begins.

The workflow consists of the next steps:

- Managed Service for Apache Flink provisions a cluster devoted to your utility.

- The code and configuration are submitted to the Job Supervisor node.

- The code within the

principal()technique of your utility runs, defining the dataflow of your utility. - Flink deploys to the Activity Supervisor nodes the substasks that make up your job.

- The job and utility standing change to

RUNNING. Nevertheless, subtasks begin initializing now. - Subtasks restore their state, if relevant, and initialize any assets. For instance, a Kafka connector’s subtask initializes the Kafka shopper and subscribes the subject.

- When all subtasks are efficiently initialized, they alter to

RUNNINGstanding and the job begins processing knowledge.

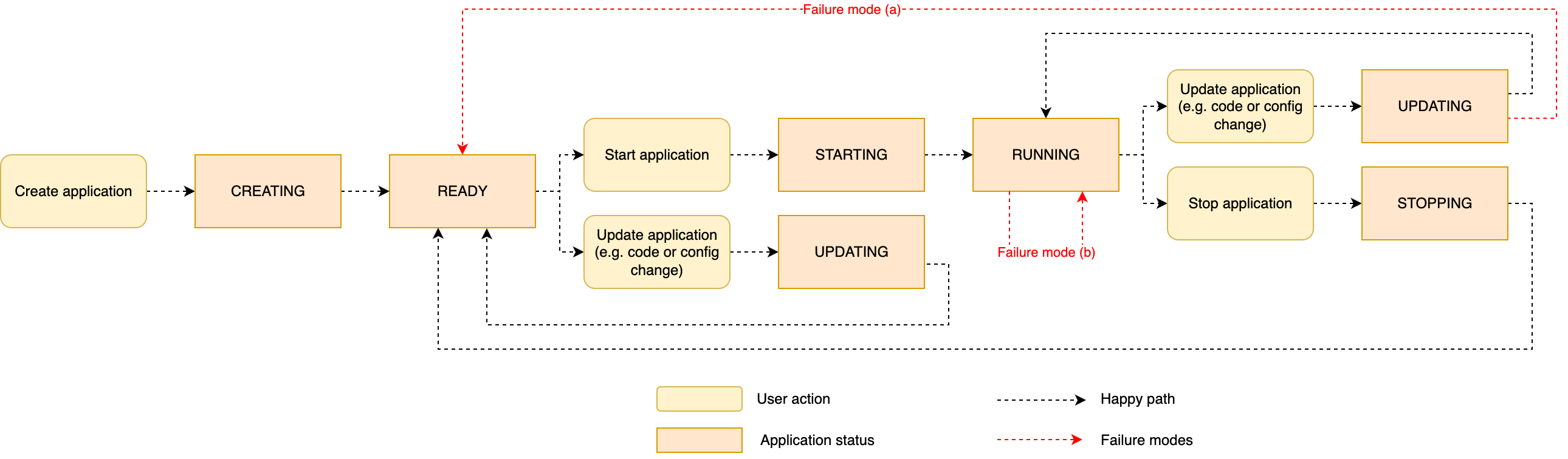

To new Flink customers, it may be complicated {that a} RUNNING standing doesn’t essentially indicate the job is wholesome and processing knowledge.When one thing goes improper through the technique of beginning (or restarting) the applying, relying on the part when the issue arises, you may observe two various kinds of failure modes:

- (a) An issue prevents the applying code from being deployed – Your utility may encounter this failure state of affairs if the deployment fails as quickly because the code and configuration are handed to the Job Supervisor (step 2 of the method), for instance if the applying code package deal is malformed. A typical error is when the JAR is lacking a

mainClassor ifmainClassfactors to a category that doesn’t exist. This failure mode may additionally occur if the code of yourprincipal()technique throws an unhandled exception (step 3). In these circumstances, the applying fails to alter toRUNNING, and reverts toREADYafter the try. - (b) The appliance is began, the job is caught in a fail-and-restart loop – An issue may happen later within the course of, after the applying standing has modified

RUNNING. For instance, after the Flink job has been deployed to the cluster (step 4 of the method), a element may fail to initialize (step 6). This may occur when a connector is misconfigured, or an issue prevents it from connecting to the exterior system. For instance, a Kafka connector may fail to connect with the Kafka cluster due to the connector’s misconfiguration or networking points. One other attainable state of affairs is when the Flink job efficiently initializes, however it throws an exception as quickly because it begins processing knowledge (step 7). When this occurs, Flink reacts to a runtime error and may get caught in a fail-and-restart loop.

The next diagram illustrates the sequence of utility standing, together with the 2 failure eventualities simply described.

Troubleshooting

We now have examined what can go improper throughout operations, specifically whenever you replace a RUNNING utility or restart an utility after altering its configuration. On this part, we discover how we will act on these failure eventualities.

Roll again a change

While you deploy a change and notice one thing isn’t fairly proper, you usually wish to roll again the change and put the applying again in working order, till you examine and repair the issue. Managed Service for Apache Flink supplies a sleek technique to revert (roll again) a change, additionally restarting the processing from the purpose it was stopped earlier than making use of the fault change, offering consistency and no knowledge loss.In Managed Service for Apache Flink, there are two sorts of rollbacks:

- Automated – Throughout an automated rollback (additionally known as system rollback), if enabled, the service routinely detects when the applying fails to restart after a change, or when the job begins however instantly falls right into a fail-and-restart loop. In these conditions, the rollback course of routinely restores the applying configuration model earlier than the final change was utilized and restarts the applying from the snapshot taken when the change was deployed. See Enhance the resilience of Amazon Managed Service for Apache Flink utility with system-rollback function for extra particulars. This function is disabled by default. You may allow it as a part of the applying configuration.

- Handbook – A handbook rollback API operation is sort of a system rollback, however it’s initiated by the person. If the applying is operating however you observe one thing not behaving as anticipated after making use of a change, you possibly can set off the rollback operation utilizing the RollbackApplication API motion or the console. Handbook rollback is feasible when the applying is

RUNNINGorUPDATING.

Each rollbacks work equally, restoring the configuration model earlier than the change and restarting with the snapshot taken earlier than the change. This prevents knowledge loss and brings you again to a model of the applying that was working. Additionally, this makes use of the code package deal that was saved on the time you created the earlier configuration model (the one you might be rolling again to), so there isn’t a inconsistency between code, configuration, and snapshot, even when within the meantime you might have changed or deleted the code package deal from the Amazon Easy Storage Service (Amazon S3) bucket.

Implicit rollback: Replace with an older configuration

A 3rd technique to roll again a change is to easily replace the configuration, bringing it again to what it was earlier than the final change. This creates a brand new configuration model, and requires the right model of the code package deal to be accessible within the S3 bucket whenever you situation the UpdateApplication command.

Why is there a 3rd possibility when the service supplies system rollback and the managed RollbackApplication motion? As a result of most high-level infrastructure-as-code (IaC) frameworks comparable to Terraform use this technique, explicitly overwriting the configuration. It is very important perceive this chance though you’ll in all probability use the managed rollback should you implement your automation primarily based on the low-level actions.

The next are two essential caveats to think about for this implicit rollback:

- You’ll usually wish to restart the applying from the snapshot that was taken earlier than the defective change was deployed. If the applying is at present

RUNNINGand wholesome, this isn’t the most recent snapshot (RESTORE_FROM_LATEST_SNAPSHOT), however moderately the earlier one. You need to set the restart fromRESTORE_FROM_CUSTOM_SNAPSHOTand choose the right snapshot. - UpdateApplication solely works if the applying is

RUNNINGand wholesome, and the job might be gracefully stopped with a snapshot. Conversely, if the applying is caught in a fail-and-restart loop, you should force-stop it first, change the configuration whereas the applying isREADY, and later begin the applying from the snapshot that was taken earlier than the defective change was deployed.

Drive-stop the applying

In regular eventualities, you cease the applying gracefully, with the automated snapshot creation. Nevertheless, this may not be attainable in some eventualities, comparable to if the Flink job is caught in a fail-and-restart loop. This may occur, for instance, if an exterior system the job makes use of stops working, or as a result of the AWS Identification and Entry Administration (IAM) configuration was erroneously modified, eradicating permissions required by the job.

When the Flink job will get caught in a fail-and-restart loop after a defective change, your first possibility ought to be utilizing RollbackApplication, which routinely restores the earlier configuration and begins from the right snapshot. Within the uncommon circumstances you possibly can’t cease the applying gracefully or use RollbackApplication, the final resort is force-stopping the applying. Drive-stop makes use of the StopApplication command with Drive=true. You can even force-stop the applying from the console.

While you force-stop an utility, no snapshot is taken (if that have been attainable, you’d have been in a position to gracefully cease). While you restart the applying, you possibly can both skip restoring from a snapshot (SKIP_RESTORE_FROM_SNAPSHOT) or use a snapshot that was beforehand taken, scheduled utilizing Snapshot Supervisor, or manually, utilizing the console or CreateApplicationSnapshot API motion.

We strongly advocate organising scheduled snapshots for all manufacturing functions that you may’t afford restarting with no state.

Monitoring Apache Flink utility operations

Efficient monitoring of your Apache Flink functions throughout and after operations is essential to confirm the end result of the operation and permit lifecycle automation to boost alarms or react, in case one thing goes improper.

The primary indicators you need to use throughout operations embrace the FullRestarts metric (accessible in Amazon CloudWatch) and the applying, job, and job standing.

Monitoring the end result of an operation

The only technique to detect the end result of an operation, comparable to StartApplication or UpdateApplication, is to make use of the ListApplicationOperations API command. This command returns a listing of the latest operations of a selected utility, together with upkeep occasions that pressure an utility restart.

For instance, to retrieve the standing of the latest operation, you need to use the next command:

The output will likely be much like the next code:

OperationStatus will observe the identical logic as the applying standing reported by the console and by DescribeApplication. This implies it may not detect a failure through the operator initialization or whereas the job begins processing knowledge. As we have now realized, these failures may put the applying in a fail-and-restart loop. To detect these eventualities utilizing your automation, you should use different methods, which we cowl in the remainder of this part.

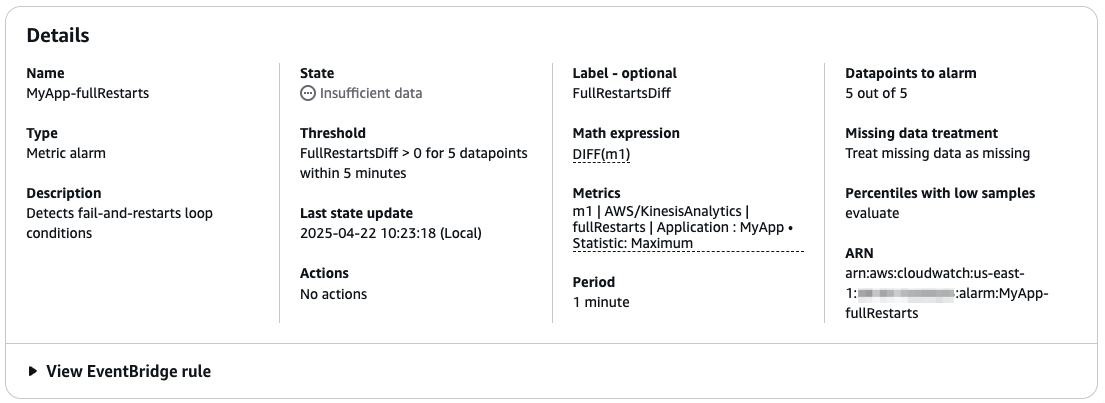

Detecting the fail-and-restart loop utilizing the FullRestarts metric

The only technique to detect whether or not the applying is caught in a fail-and-restart loop is utilizing the fullRestarts metric, accessible in CloudWatch Metrics. This metric counts the variety of restarts of the Flink job after you began the applying with a StartApplication command or restarted with UpdateApplication.

In a wholesome utility, the variety of full restarts ought to ideally be zero. A single full restart could be acceptable throughout deployment or deliberate upkeep; a number of restarts usually point out some situation. We advocate to not set off an alarm on a single restart, and even a few consecutive restarts.

The alarm ought to solely be triggered when the applying is caught in a fail-and-restart loop. This suggests checking whether or not a number of restarts have occurred over a comparatively quick time frame. Deciding the interval isn’t trivial, as a result of the time the Flink job takes to restart from a checkpoint relies on the scale of the applying state. Nevertheless, if the state of your utility is decrease than a number of GB per KPU, you possibly can safely assume the applying ought to begin in lower than a minute.

The objective is making a CloudWatch alarm that triggers when fullRestarts retains rising over a time interval ample for a number of restarts. For instance, assuming your utility restarts in lower than 1 minute, you possibly can create a CloudWatch alarm that depends on the DIFF math expression of the fullRestarts metric. The next screenshot exhibits an instance of the alarm particulars.

This instance is a conservative alarm, solely triggering if the applying retains restarting for over 5 minutes. This implies you detect the issue after no less than 5 minutes. You may think about lowering the time to detect the failure earlier. Nevertheless, watch out to not set off an alarm after only one or two restarts. Occasional restarts may occur, for instance throughout regular upkeep (patching) that’s managed by the service, or for a transient error of an exterior system. Flink is designed to get well from these circumstances with minimal downtime and no knowledge loss.

Detecting whether or not the job is up and operating: Monitoring utility, job, and job standing

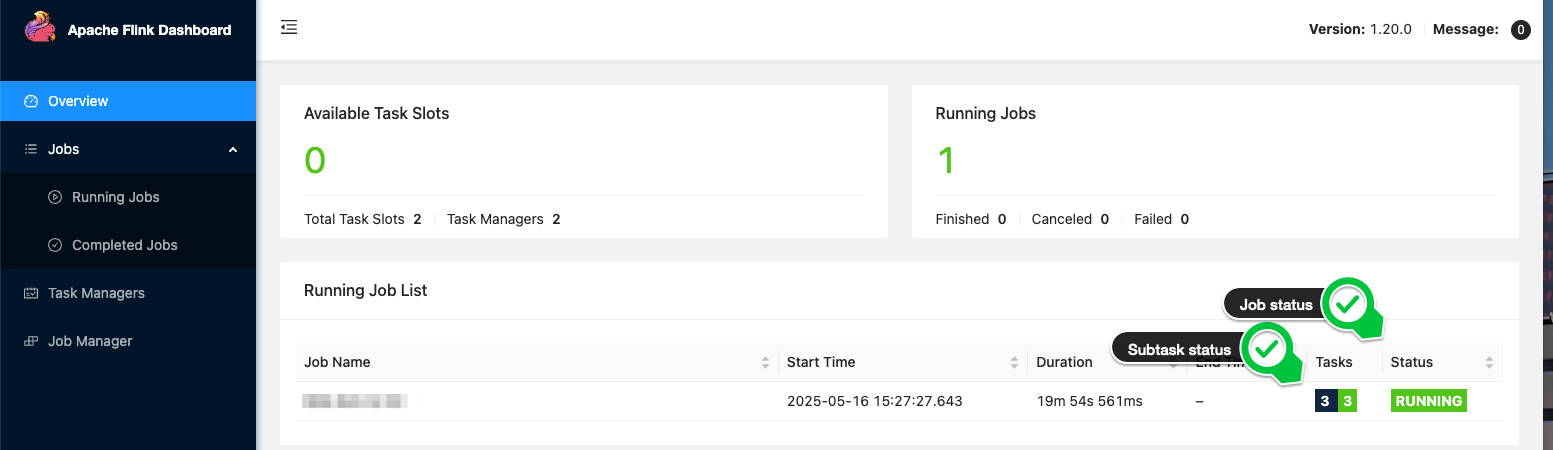

We now have mentioned how you might have totally different statuses: the standing of the applying, job, and subtask. In Managed Service for Apache Flink, the applying and job standing change to RUNNING when the subtasks are efficiently deployed on the cluster. Nevertheless, the job isn’t actually operating and processing knowledge till all of the subtasks are RUNNING.

Observing the applying standing throughout operations

The appliance standing is seen on the console, as proven within the following screenshot.

In your automation, you possibly can ballot the DescribeApplication API motion to look at the applying standing. The next command exhibits find out how to use the AWS Command Line Interface (AWS CLI) and jq command to extract the standing string of an utility:

Observing job and subtask standing

Managed Service for Apache Flink provides you entry to the Flink Dashboard, which supplies helpful data for troubleshooting, together with the standing of all subtasks. The next screenshot, for instance, exhibits a wholesome job the place all subtasks are RUNNING.

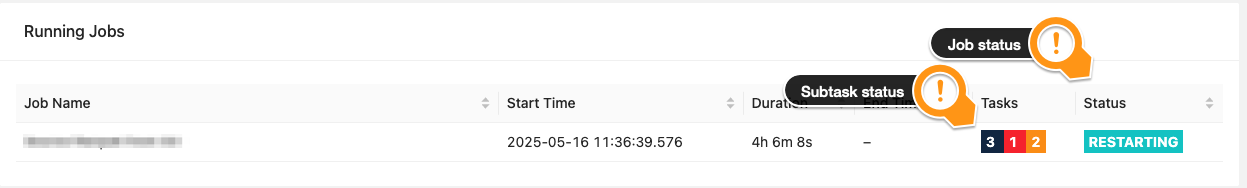

Within the following screenshot, we will see a job the place subtasks are failing and restarting.

In your automation, whenever you begin the applying or deploy a change, you wish to be certain the job is ultimately up and operating and processing knowledge. This occurs when all of the subtasks are RUNNING. Observe that ready for the job standing to turn into RUNNING after an operation isn’t utterly secure. A subtask may nonetheless fail and trigger the job to restart after it was reported as RUNNING.

After you execute a lifecycle operation, your automation can ballot the substasks standing ready for certainly one of two occasions:

- All subtasks report

RUNNING– This means the operation was profitable and your Flink job is up and operating. - Any subtask reviews

FAILINGorCANCELED– This means one thing went improper, and the applying is probably going caught in a fail-and-restart loop. You should intervene, for instance, force-stopping the applying after which rolling again the change.

If you’re restarting from a snapshot and the state of your utility is kind of large, you may observe subtasks will report INITIALIZING standing for longer. Through the initialization, Flink restores the state of the operator earlier than altering to RUNNING.

The Flink REST API exposes the state of the subtasks, and can be utilized in your automation. In Managed Service for Apache Flink, this requires three steps:

- Generate a pre-signed URL to entry the Flink REST API utilizing the CreateApplicationPresignedUrl API motion.

- Make a GET request to the

/jobsendpoint of the Flink REST API to retrieve the job ID. - Make a GET request to the

/jobs/endpoint to retrieve the standing of the subtasks.

The next GitHub repository supplies a shell script to retrieve the standing of the duties of a given Managed Service for Apache Flink utility.

Monitoring subtasks failure whereas the job is operating

The strategy of polling the Flink REST API can be utilized in your automation, instantly after an operation, to look at whether or not the operation was ultimately profitable.

We strongly advocate to not constantly ballot the Flink REST API whereas the job is operating to detect failures. This operation is useful resource consuming, and may degrade efficiency or trigger errors.

To observe for suspicious subtask standing modifications throughout regular operations, we advocate utilizing CloudWatch Logs as a substitute. The next CloudWatch Logs Insights question extracts all subtask state transitions:

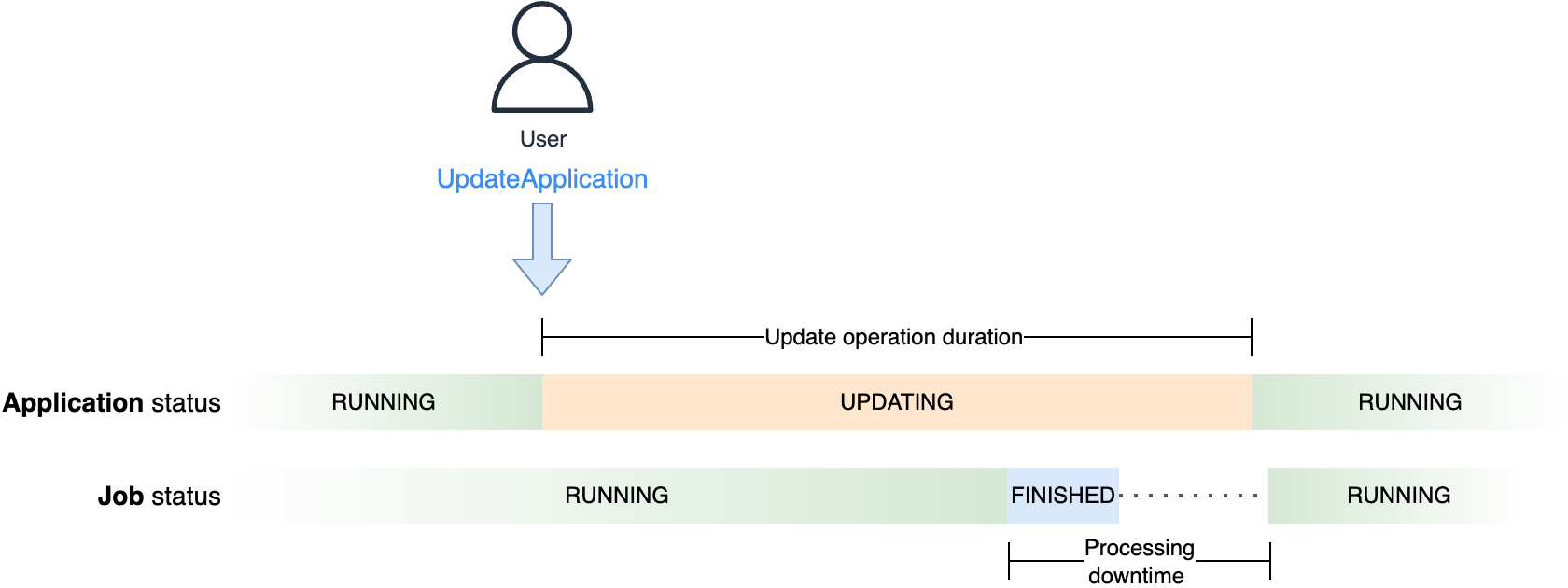

How Managed Service for Apache Flink minimizes processing downtime

We now have seen how Flink is designed for robust consistency. To ensure exactly-once state consistency, Flink quickly stops the processing to deploy any modifications, together with scaling. This downtime is required for Flink to take a constant copy of the applying state and reserve it in a savepoint. After the change is deployed, the job is restarted from the savepoint, and there’s no knowledge loss. In Managed Service for Apache Flink, updates are totally managed. When snapshots are enabled, UpdateApplication routinely stops the job and makes use of snapshots (primarily based on Flink’s savepoints) to retain the state.

Flink ensures no knowledge loss. Nevertheless, your enterprise necessities or Service Stage Goals (SLOs) may additionally impose a most delay for the information obtained by downstream methods, or end-to-end latency. This delay is affected by the processing downtime, or the time the job doesn’t course of knowledge to permit Flink deploying the change.With Flink, some processing downtime is unavoidable. Nevertheless, Managed Service for Apache Flink is designed to attenuate the processing downtime whenever you deploy a change.

We now have seen how the service runs your utility in a devoted cluster, for full isolation. While you situation UpdateApplication on a RUNNING utility, the service prepares a brand new cluster with the required quantity of assets. This operation may take a while. Nevertheless, this doesn’t have an effect on the processing downtime, as a result of the service retains the job operating and processing knowledge on the unique cluster till the final attainable second, when the brand new cluster is prepared. At this level, the service stops your job with a savepoint and restarts it on the brand new cluster.

Throughout this operation, you might be solely charged for the variety of KPU of a single cluster.

The next diagram illustrates the distinction between the period of the replace operation, or the time the applying standing is UPDATING, and the processing downtime, observable from the job standing, seen within the Flink Dashboard.

You may observe this course of, holding each the applying console and Flink Dashboard open, whenever you replace the configuration of a operating utility, even with no modifications. The Flink Dashboard will turn into quickly unavailable when the service switches to the brand new cluster. Moreover, you possibly can’t use the script we offered to examine the job standing for this scope. Although the cluster retains serving the Flink Dashboard till it’s tore down, the CreateApplicationPresignedUrl motion doesn’t work whereas the applying is UPDATING.

The processing time (the time the job isn’t operating on both clusters) relies on the time the job takes to cease with a savepoint (snapshot) and restore the state within the new cluster. This time largely relies on the scale of the applying state. Information skew may additionally have an effect on the savepoint time as a result of barrier alignment mechanism. For a deep dive into the Flink’s barrier alignment mechanism, confer with Optimize checkpointing in your Amazon Managed Service for Apache Flink functions with buffer debloating and unaligned checkpoints, holding in thoughts that savepoints are at all times aligned.

For the scope of your automation, you usually wish to wait till the job is again up and operating and processing knowledge. You usually wish to set a timeout. If each the applying and job don’t return to RUNNING inside this timeout, one thing in all probability went improper and also you may wish to elevate an alarm or pressure a rollback. This timeout ought to think about the complete replace operation period.

Conclusion

On this submit, we mentioned attainable failure eventualities whenever you deploy a change or scale your utility. We confirmed how Managed Service for Apache Flink rollback functionalities can seamlessly carry you again to a secure place after a change went improper. We additionally explored how one can automate monitoring operations to look at utility, job, and subtask standing, and find out how to use the fullRestarts metric to detect when the job is in a fail-and-restart loop.

For extra data, see Run a Managed Service for Apache Flink utility, Implement fault tolerance in Managed Service for Apache Flink, and Handle utility backups utilizing Snapshots.

In regards to the authors