In the current AI zeitgeist, sequence models have surged in popularity for their ability to analyze data and predict what to do next. You have likely used next-token prediction models such as ChatGPT, which anticipate each word (token) in a sequence to form answers to users’ queries. There are also full-sequence diffusion models, such as Sora, that convert text into realistic visuals by successively “denoising” an entire video sequence.

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have proposed a simple change to the diffusion training scheme that makes this sequence denoising considerably more flexible.

When applied to fields such as computer vision and robotics, next-token and full-sequence diffusion models have capability trade-offs. Next-token models can generate sequences that vary in length. However, they make these generations without awareness of desirable states in the far future, such as steering the sequence toward a goal 10 tokens away, and so they require additional mechanisms for long-horizon (long-term) planning. Diffusion models can perform such future-conditioned sampling, but they lack the ability of next-token models to generate variable-length sequences.

CSAIL researchers wanted to combine the strengths of both models, so they created a sequence model training technique called “Diffusion Forcing.” The name comes from “Teacher Forcing,” the conventional training scheme that breaks full-sequence generation into the smaller, easier steps of next-token generation, much as a good teacher simplifies a complex concept.
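As a rough illustration of what teacher forcing means in practice, the toy sketch below splits one sequence into next-token training examples, each conditioned on the ground-truth prefix. The function name `teacher_forcing_pairs` is hypothetical and is not taken from the researchers’ code.

```python
def teacher_forcing_pairs(sequence):
    """Split one sequence into (ground-truth prefix, next token) pairs.

    Hypothetical helper for illustration: each pair is one small
    next-token prediction problem, and the prefix always comes from the
    real data rather than from the model's own earlier guesses.
    """
    return [(sequence[:i], sequence[i]) for i in range(1, len(sequence))]


if __name__ == "__main__":
    for prefix, target in teacher_forcing_pairs(["the", "cat", "sat"]):
        print(prefix, "->", target)
```

A model trained this way only ever sees clean prefixes, which is the binary masking the article describes: the past is fully visible and the future is fully hidden.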

Diffusion Forcing builds on common ground between diffusion models and teacher forcing: both use training schemes that predict masked (noisy) tokens from unmasked ones. Diffusion models gradually add noise to data, which can be viewed as fractional masking. The MIT researchers’ Diffusion Forcing method trains neural networks to cleanse a collection of tokens, removing a different amount of noise from each one while simultaneously predicting the next few tokens. The result is a flexible, reliable sequence model that produced higher-quality artificial videos and more precise decision-making for robots and AI agents.
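The idea of noise as fractional masking can be sketched in a few lines. The example below is a minimal illustration, not the researchers’ implementation: tokens are plain floats, the linear schedule `alpha = 1 - k / num_levels` is an assumption chosen for simplicity, and the names `apply_noise` and `noise_tokens` are hypothetical.

```python
import math
import random


def apply_noise(tokens, levels, num_levels, rng):
    """Noise each token according to its own level (toy, scalar tokens).

    Level 0 keeps the token clean (unmasked); level num_levels replaces
    it with pure Gaussian noise (fully masked). Levels in between act as
    fractional masking. The linear schedule is an assumption.
    """
    noisy = []
    for x, k in zip(tokens, levels):
        alpha = 1.0 - k / num_levels          # fraction of signal kept
        eps = rng.gauss(0.0, 1.0)             # fresh noise per token
        noisy.append(math.sqrt(alpha) * x + math.sqrt(1.0 - alpha) * eps)
    return noisy


def noise_tokens(tokens, num_levels=10, rng=None):
    """Sample an independent noise level for every token, then noise it."""
    rng = rng or random.Random()
    levels = [rng.randint(0, num_levels) for _ in tokens]
    return apply_noise(tokens, levels, num_levels, rng), levels


if __name__ == "__main__":
    noisy, levels = noise_tokens([1.0, 2.0, 3.0], rng=random.Random(0))
    print(levels, noisy)
```

In a training loop, a network would receive the noisy tokens together with their levels and be asked to recover the clean tokens. Because every token gets its own level, the network sees every mixture of clean, partly noised, and fully noised positions.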

By sorting through noisy data and reliably predicting the next steps in a task, Diffusion Forcing can help a robot ignore visual distractions and complete manipulation tasks. It can also generate stable, consistent video sequences and even guide an AI agent through digital mazes. The method could potentially enable household and factory robots to generalize to new tasks, and it could improve AI-generated entertainment.

“Sequence models aim to condition on the known past and predict the unknown future, a type of binary masking. However, masking doesn’t need to be binary,” says lead author Boyuan Chen, a PhD student in MIT’s Department of Electrical Engineering and Computer Science (EECS) and a CSAIL member.

“With Diffusion Forcing, we add different levels of noise to each token, effectively serving as a type of fractional masking. At test time, our system can ‘unmask’ a collection of tokens and diffuse a sequence in the near future at a lower noise level. It knows what to trust within its data to overcome out-of-distribution inputs.”
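One way to picture sampling at test time is as a table of per-token noise levels that falls to zero sooner for the near future than for the far future. The sketch below is a hypothetical schedule written for illustration. The function name `pyramid_levels` and the exact clamping rule are assumptions, not the researchers’ published procedure.

```python
def pyramid_levels(num_context, horizon, iteration, num_levels):
    """Per-token noise levels at one sampling iteration (hypothetical).

    Observed context tokens stay at level 0 (fully unmasked). Each future
    token starts at num_levels and is denoised one level per iteration,
    with tokens further ahead starting their descent later, so the near
    future is always at least as clean as the far future.
    """
    levels = [0] * num_context
    for offset in range(horizon):
        k = num_levels + offset - iteration
        levels.append(max(0, min(num_levels, k)))  # clamp to [0, num_levels]
    return levels


if __name__ == "__main__":
    # Two observed tokens, three future tokens, four noise levels.
    for it in range(0, 7, 2):
        print(it, pyramid_levels(2, 3, it, 4))
```

With two context tokens, a horizon of three, and four levels, iteration 2 gives `[0, 0, 2, 3, 4]`: the next token is already half denoised while the furthest one is still pure noise. By iteration 6, every token has reached level 0.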

In several experiments, Diffusion Forcing excelled at ignoring misleading data to execute tasks while anticipating future actions.

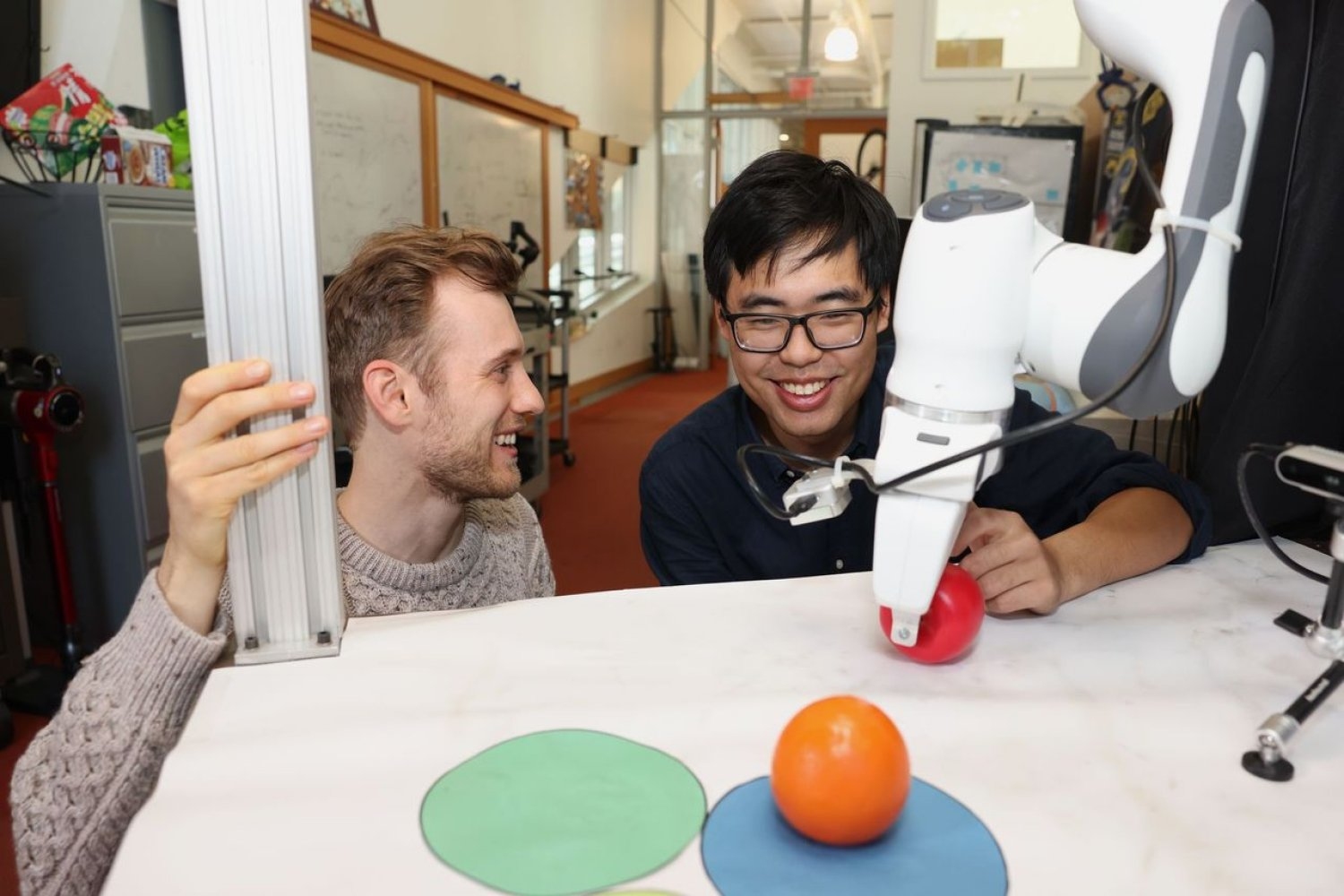

When implemented on a robotic arm, for example, it helped swap two toy fruits across three circular mats, a minimal example of a family of long-horizon tasks that require memory. The researchers trained the robot by controlling it from a distance (teleoperating it) in virtual reality. The robot is trained to mimic the user’s movements from its camera. Despite starting from random positions and seeing distractions such as a shopping bag blocking the markers, it placed the objects in their target spots.

To generate videos, the researchers trained Diffusion Forcing on “Minecraft” gameplay and on colorful digital environments created within Google’s DeepMind Lab simulator. Given a single frame of footage, the method produced more stable, higher-resolution videos than comparable baselines, including a Sora-like full-sequence diffusion model and ChatGPT-like next-token models. Those approaches created videos that appeared inconsistent, with the latter sometimes failing to generate working video past just 72 frames.

Diffusion Forcing does more than generate impressive videos: it can also serve as a motion planner that steers toward desired outcomes or rewards. Thanks to its flexibility, Diffusion Forcing can generate plans with varying horizons, perform tree search, and incorporate the intuition that the distant future is more uncertain than the near future. In the task of solving a 2D maze, Diffusion Forcing outperformed six baselines by generating faster plans that led to the goal location, indicating that it could be an effective planner for robots in the future.

Across the demos, Diffusion Forcing acted as a full-sequence model, a next-token prediction model, or both. According to Chen, this versatile approach could potentially serve as a powerful backbone for a “world model,” an AI system that can simulate the dynamics of the world by training on vast amounts of internet video. This would allow robots to perform novel tasks by imagining what they need to do based on their surroundings. For example, if a robot were asked to open a door without having been trained to do so, the model could produce a video showing the machine how to do it.

The team is currently looking to scale up the method to larger datasets and the latest transformer models to improve performance. They intend to broaden their work to build a ChatGPT-like robot brain that helps robots perform tasks in new environments without human demonstration.

“With Diffusion Forcing, we are taking a step toward bringing video generation and robotics closer together,” says senior author Vincent Sitzmann, a member of MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), where he leads the Scene Representation group. “In the end, we hope that we can use all the knowledge stored in videos on the internet to enable robots to help in everyday life. Many more exciting research challenges remain, like how robots can learn to imitate humans by watching them even when their own bodies are so different from our own.”

Chen and Sitzmann wrote the paper with recent MIT visiting researcher Diego Martí Monsó and CSAIL affiliates Yilun Du, an EECS graduate student; Max Simchowitz, a former postdoc and incoming assistant professor at Carnegie Mellon University; and Russ Tedrake, the Toyota Professor of Electrical Engineering and Computer Science, Aeronautics and Astronautics, and Mechanical Engineering at MIT, as well as vice president of robotics research at the Toyota Research Institute. Their work was supported, in part, by the U.S. National Science Foundation, the Singapore Defence Science and Technology Agency, the Intelligence Advanced Research Projects Activity via the U.S. Department of the Interior, and the Amazon Science Hub. They will present their research at NeurIPS in December.