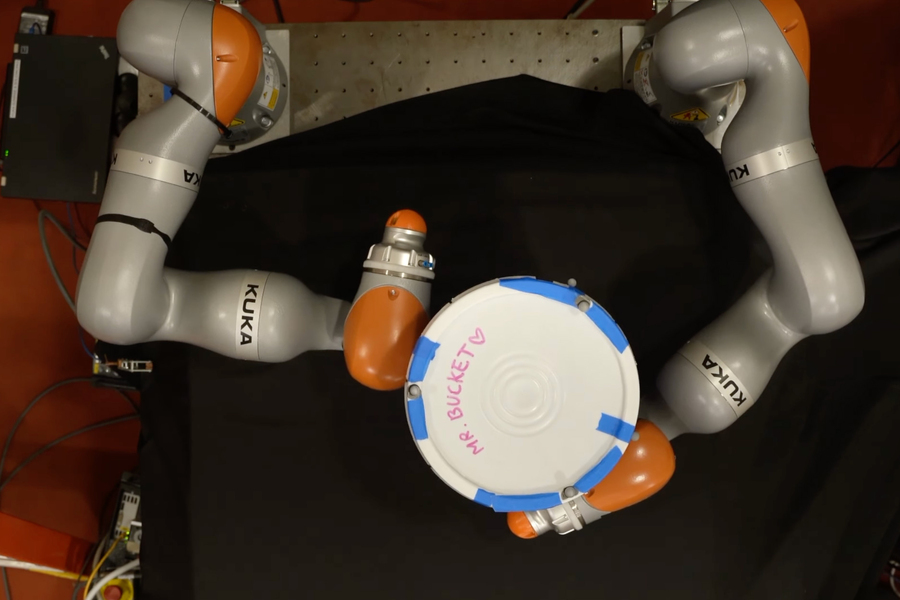

MIT researchers have developed an AI technique that enables a robot to form complex plans for manipulating an object using its entire hand, not just its fingertips. The model can generate effective plans within minutes on a standard laptop. Here, a robot attempts to rotate a bucket 180 degrees.

Imagine you want to carry a large, heavy box up a flight of stairs. You might spread your fingers and lift the box with both hands, then hold it on top of your forearms and balance it against your chest, using your whole body to manipulate the box.

Humans are generally good at whole-body manipulation, but robots struggle with such tasks. To a robot, each spot where the box could touch any point on the carrier’s fingers, arms, and torso is a contact event that it must reason about. With countless potential contact events, planning for this task quickly becomes intractable.

MIT researchers have now found a way to simplify this process, known as contact-rich manipulation planning. They use an AI technique called smoothing, which condenses many contact events into a smaller number of decisions, so that even a simple algorithm can quickly identify an effective manipulation plan for the robot.

While still in its early days, this method could enable factories to use smaller, mobile robots that manipulate objects with their entire arms or bodies, rather than large robotic arms that can only grasp with their fingertips. This may help reduce energy consumption and lower costs. The technique could also be useful for robots sent on exploration missions to Mars or other solar system bodies, since they could adapt to their environment quickly using only an onboard computer.

“Rather than thinking about this as a black-box system, if we can leverage the structure of these kinds of robotic systems using models, there is an opportunity to accelerate the whole process of making these decisions and coming up with contact-rich plans,” says H.J. Terry Suh, an electrical engineering and computer science (EECS) graduate student and co-lead author of a paper on this technique.

The paper’s other authors are co-lead author Tao Pang PhD ’23, a roboticist at Boston Dynamics AI Institute; Lujie Yang, an EECS graduate student; and senior author Russ Tedrake, the Toyota Professor of EECS, Aeronautics and Astronautics, and Mechanical Engineering, and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL). The research appears this week.

Learning about learning

Reinforcement learning is a machine-learning technique in which an agent, such as a robot, learns to complete a task through trial and error, receiving a reward as it gets closer to a goal. Researchers say this type of learning takes a black-box approach because the system must learn everything about the world through trial and error.

It has been used effectively for contact-rich manipulation planning, where the robot seeks to learn the best way to move an object in a specified manner.

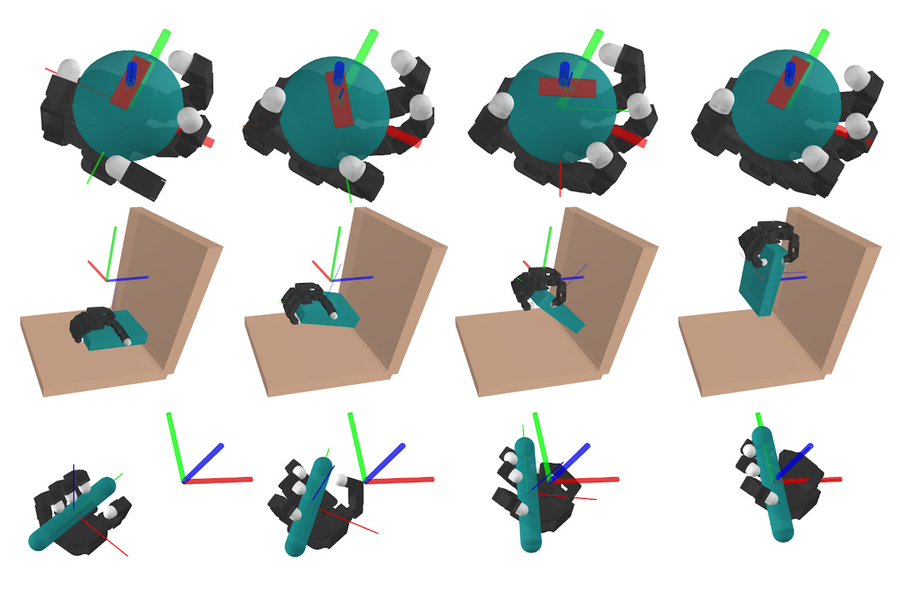

A simulated robotic arm successfully executes three complex manipulation tasks: in-hand manipulation of a ball, picking up a plate, and orienting a pen to a desired position.

But because a robot must reason about a vast number of potential contact points when deciding how to use its fingers, hands, arms, and body to interact with an object, this trial-and-error approach requires a great deal of computation.

“Reinforcement learning may need to go through tens of thousands of years of simulation time to actually learn a policy.”

By contrast, when researchers design a physics-based model using their knowledge of the system and of the task they want the robot to accomplish, that model incorporates structure about the world that makes it more efficient.

Yet physics-based approaches have not been as effective as reinforcement learning for contact-rich manipulation planning, and Suh and Pang wanted to know why.

They conducted a detailed analysis and found that a technique known as smoothing is what enables reinforcement learning to perform so well.

Many of the decisions a robot could make about how to manipulate an object are unimportant in the grand scheme of things. Each tiny adjustment of a single finger, whether or not it results in contact with the object, matters very little. Smoothing averages away many of these unimportant, intermediate decisions, leaving only a few important ones.

Reinforcement learning performs smoothing implicitly by trying many contact points and then computing a weighted average of the results. Drawing on this insight, the MIT researchers designed a simple model that performs a similar type of smoothing, allowing it to focus on core robot-object interactions and predict long-term behavior. They showed that this approach can be just as effective as reinforcement learning at generating complex plans.
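The averaging idea can be illustrated with a toy calculation. The sketch below is not the researchers' model; it assumes a hypothetical one-dimensional contact law (`contact_force`, with a made-up `stiffness` value) and smooths it by averaging over random Gaussian perturbations of the finger-to-object gap. The raw function is flat whenever the finger is not touching, so it gives a planner no hint about which way to move, while the averaged version varies gradually near the point of contact.

```python
import random


def contact_force(gap: float, stiffness: float = 100.0) -> float:
    """Hypothetical non-smooth contact law: no force until the finger touches.

    gap > 0 means the finger is away from the object; gap <= 0 means it presses in.
    """
    return stiffness * max(0.0, -gap)


def smoothed_contact_force(gap: float, sigma: float = 0.05,
                           samples: int = 2000, seed: int = 0) -> float:
    """Monte Carlo randomized smoothing of contact_force.

    Averages the force over Gaussian perturbations of the gap, so many nearby
    "touching / not touching" cases collapse into a single smooth value.
    """
    rng = random.Random(seed)  # fixed seed keeps the estimate repeatable
    total = 0.0
    for _ in range(samples):
        total += contact_force(gap + rng.gauss(0.0, sigma))
    return total / samples


def slope(f, x: float, h: float = 0.01) -> float:
    """Central finite-difference estimate of df/dx."""
    return (f(x + h) - f(x - h)) / (2.0 * h)
```

Evaluating `slope(contact_force, 0.02)` returns zero because the finger is just out of reach, whereas `slope(smoothed_contact_force, 0.02)` is negative, indicating that closing the gap would increase the force. The choice of Gaussian noise and the value of `sigma` are assumptions made for illustration only.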

“If you delve deeper into the intricacies of a particular challenge, you’ll discover that designing more efficient algorithms becomes a tangible possibility,” Pang explains.

A winning combination

Even though smoothing greatly simplifies the decisions, searching through the remaining ones can still be difficult. So the researchers combined their model with an algorithm that can rapidly and efficiently search through all the possible decisions the robot could make.

With this combination, computation time dropped to mere minutes on a standard laptop.
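The article does not name the search algorithm, so the following is only a sketch of one common family, a rapidly-exploring random tree (RRT), applied to a made-up two-dimensional configuration space with no obstacles. The function name `rrt_plan`, the sampling bounds, the step size, and the 10 percent goal bias are all assumptions. In a contact-rich planner, the straight-line "extend" step used here would be replaced by a step through a model of the robot-object interaction.

```python
import math
import random


def rrt_plan(start, goal, step=0.2, goal_tol=0.2, max_iters=5000, seed=0):
    """Grow a tree of configurations from start until one lands near goal.

    Returns the list of configurations from start to the reached node,
    or None if no node gets within goal_tol in max_iters iterations.
    """
    rng = random.Random(seed)
    nodes = [start]      # configurations in the tree
    parent = {0: None}   # index of each node's parent
    for _ in range(max_iters):
        # Sample a random target, occasionally the goal itself (goal bias).
        if rng.random() < 0.1:
            target = goal
        else:
            target = (rng.uniform(0.0, math.pi), rng.uniform(0.0, 1.0))
        # Find the tree node closest to the sampled target.
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], target))
        near = nodes[i_near]
        d = math.dist(near, target)
        if d == 0.0:
            continue
        # Extend from that node toward the target by at most one step.
        scale = min(1.0, step / d)
        new = (near[0] + scale * (target[0] - near[0]),
               near[1] + scale * (target[1] - near[1]))
        nodes.append(new)
        parent[len(nodes) - 1] = i_near
        if math.dist(new, goal) <= goal_tol:
            # Walk back through parents to recover the plan.
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None
```

Calling `rrt_plan((0.0, 0.0), (math.pi, 1.0))` returns a sequence of small moves ending near the goal. Here the first coordinate can be read as an object angle, echoing the 180-degree bucket rotation, though that interpretation is illustrative only.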

The researchers first tested their approach in simulations in which robotic hands were given tasks such as moving a pen to a desired configuration, opening a door, or picking up a plate. In each case, their model-based approach matched the performance of reinforcement learning at a fraction of the computational cost. They saw similar results when they tested their model in hardware on real robotic arms.

The same ideas that enable whole-body manipulation also work for planning with dexterous, human-like hands. Previously, most researchers held that reinforcement learning was the only approach that scaled to dexterous hands. Suh and Pang showed that by borrowing the key idea of randomized smoothing from reinforcement learning, more traditional planning methods can be made to work well, too.

However, the model they developed relies on a simpler approximation of the real world, so it cannot handle very dynamic motions, such as objects falling. While effective for slower manipulation tasks, the approach cannot produce a plan that would let a robot toss a can into a trash bin, for instance. In the future, the researchers plan to enhance their technique so it can tackle these highly dynamic motions.

If you study your models carefully and really understand the problem you are trying to solve, there are gains to be had, Suh notes, adding that there are benefits to doing things that go beyond the black box.

The research project receives partial funding from Amazon, MIT Lincoln Laboratory, the National Science Foundation, and the Ocado Group.

MIT News