LLMs, Agents, Tools, and Frameworks

Generative Artificial Intelligence (GenAI) is full of technical concepts and terms; a few we often encounter are Large Language Models (LLMs), AI agents, and agentic systems. Although related, they serve different purposes within the AI ecosystem.

LLMs are the foundational language engines designed to process and generate text (and images, in the case of multimodal models), while agents extend LLMs’ capabilities by incorporating tools and strategies to tackle complex problems effectively.

Properly designed and built agents can adapt based on feedback, refining their plans and improving performance to handle more complicated tasks. Agentic systems are broader, interconnected ecosystems comprising multiple agents working together toward complex goals.

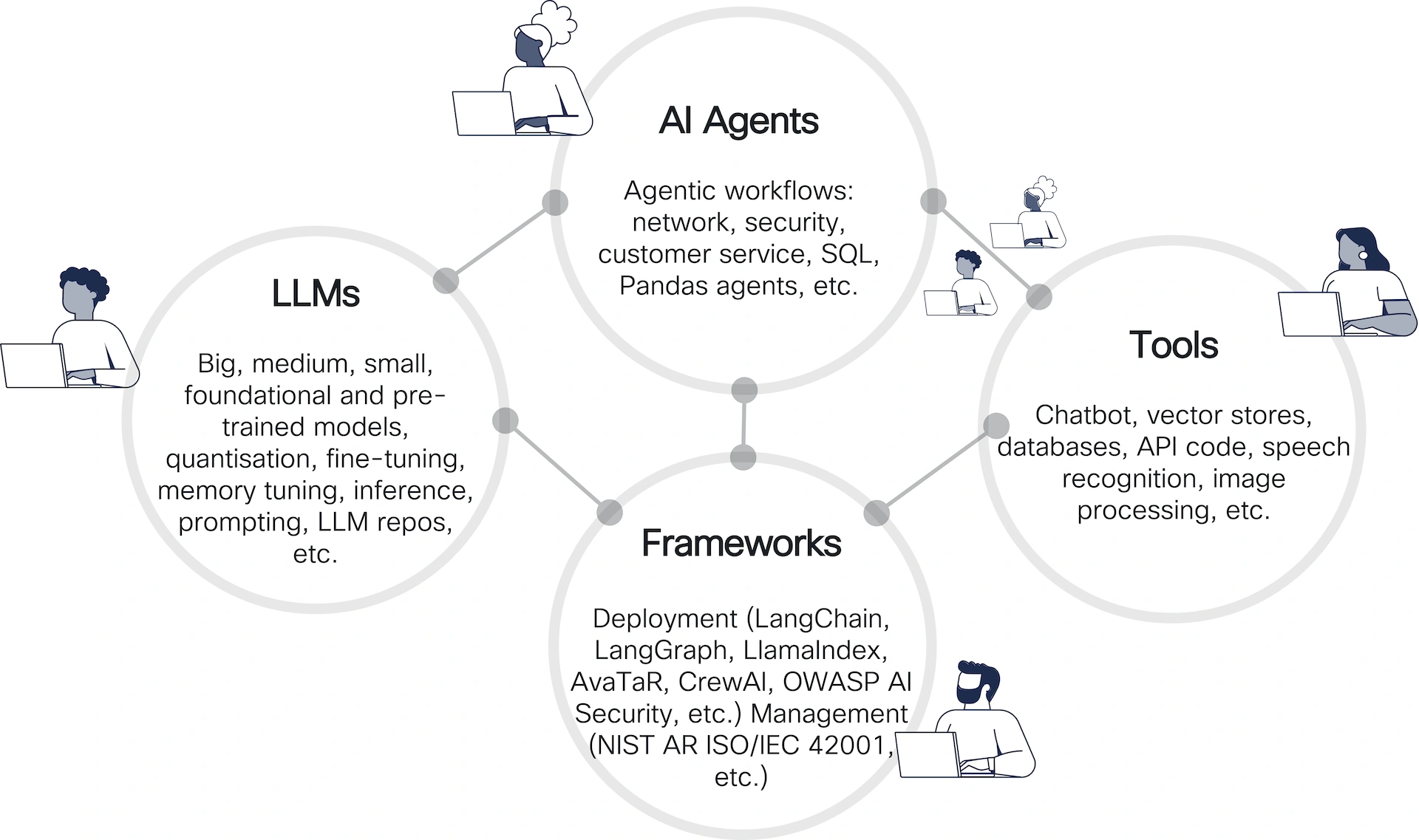

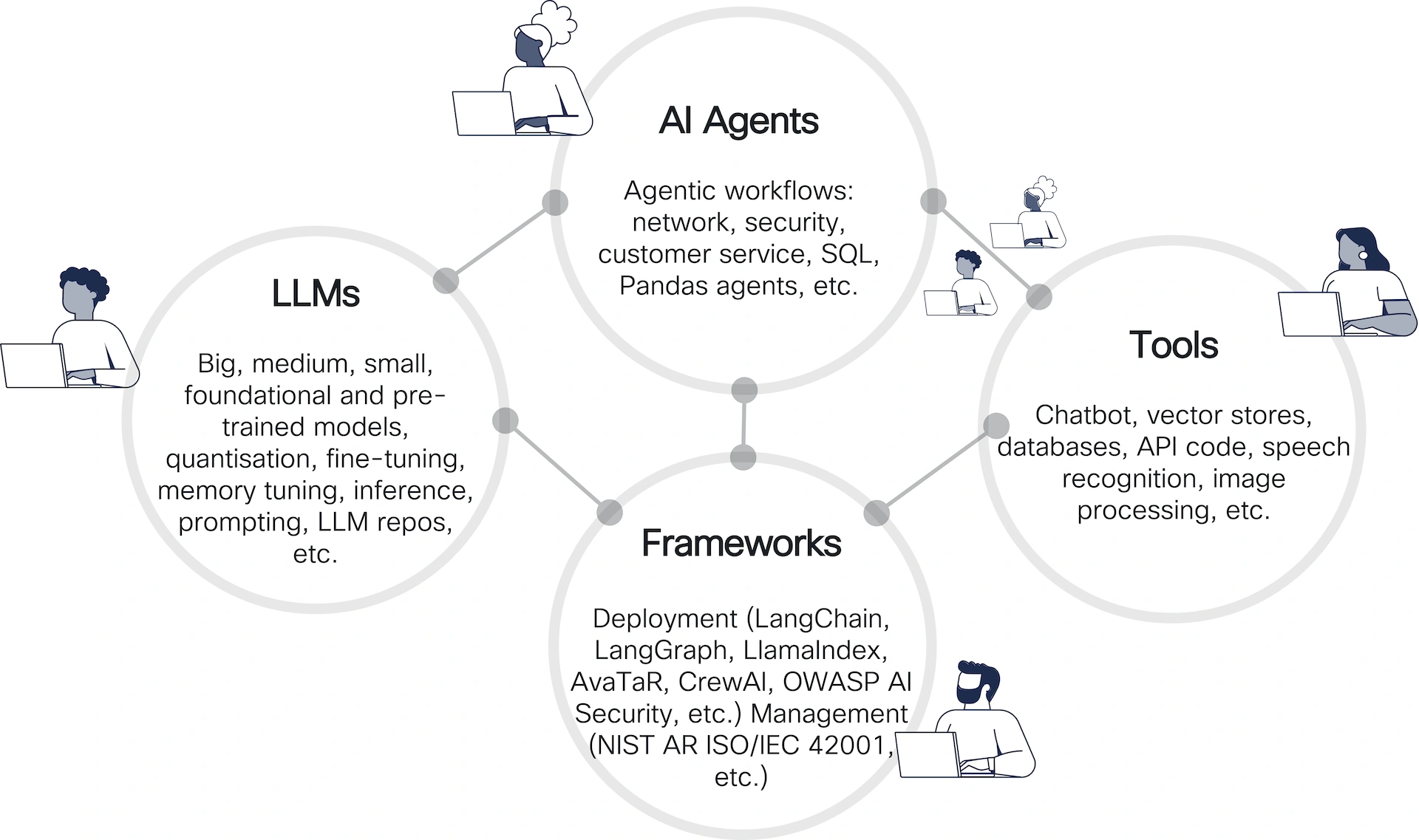

The figure above outlines the ecosystem of AI agents, showing the relationships between four main components: LLMs, AI Agents, Frameworks, and Tools. Here’s a breakdown:

- LLMs (Large Language Models): Represent models of different sizes and specializations (large, medium, small).

- AI Agents: Built on top of LLMs, they focus on agent-driven workflows. They leverage the capabilities of LLMs while adding problem-solving strategies for different purposes, such as automating networking tasks and security processes (and many others!).

- Frameworks: Provide deployment and management support for AI applications. These frameworks bridge the gap between LLMs and operational environments by providing the libraries that allow the development of agentic systems.

  - Deployment frameworks mentioned include: LangChain, LangGraph, LlamaIndex, AvaTaR, CrewAI and OpenAI Swarm.

  - Management frameworks adhere to standards like NIST AR ISO/IEC 42001.

- Tools: Enable interaction with AI systems and expand their capabilities. Tools are crucial for delivering AI-powered solutions to users. Examples of tools include:

  - Chatbots

  - Vector stores for data indexing

  - Databases and API integration

  - Speech recognition and image processing utilities
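To make the relationship between an agent and its tools concrete, here is a minimal, dependency-free sketch. It is not taken from the original project: the `Tool` and `Agent` classes, the two demo tools, and `stub_choose_tool` are all hypothetical names, and the stub stands in for what would be an LLM call in a real agent.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Tool:
    """A capability the agent can call. All names here are illustrative."""
    name: str
    description: str
    run: Callable[[str], str]


def stub_choose_tool(task: str, tools: Dict[str, Tool], default: str) -> str:
    """Stand-in for the LLM's decision: pick the first tool named in the task."""
    lowered = task.lower()
    for name in tools:
        if name in lowered:
            return name
    return default


class Agent:
    """Pairs a decision function (the 'LLM') with a registry of tools."""

    def __init__(self, tools: List[Tool], default: str) -> None:
        self.tools = {tool.name: tool for tool in tools}
        if default not in self.tools:
            raise ValueError(f"unknown default tool: {default}")
        self.default = default

    def handle(self, task: str) -> str:
        # Decide which tool fits the task, then delegate the work to it.
        choice = stub_choose_tool(task, self.tools, self.default)
        return self.tools[choice].run(task)


def build_demo_agent() -> Agent:
    """Build an agent with two toy tools (assumed for illustration only)."""
    tools = [
        Tool("echo", "Return the task unchanged", lambda text: text),
        Tool("wordcount", "Count words in the task",
             lambda text: str(len(text.split()))),
    ]
    return Agent(tools, default="echo")


if __name__ == "__main__":
    agent = build_demo_agent()
    print(agent.handle("wordcount for this short sentence"))
    print(agent.handle("hello"))
```

In a framework such as LangChain or LangGraph the decision step would be made by the model rather than by string matching; the sketch only shows the division of labor between the decision maker and the tools.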

AI for Red Team

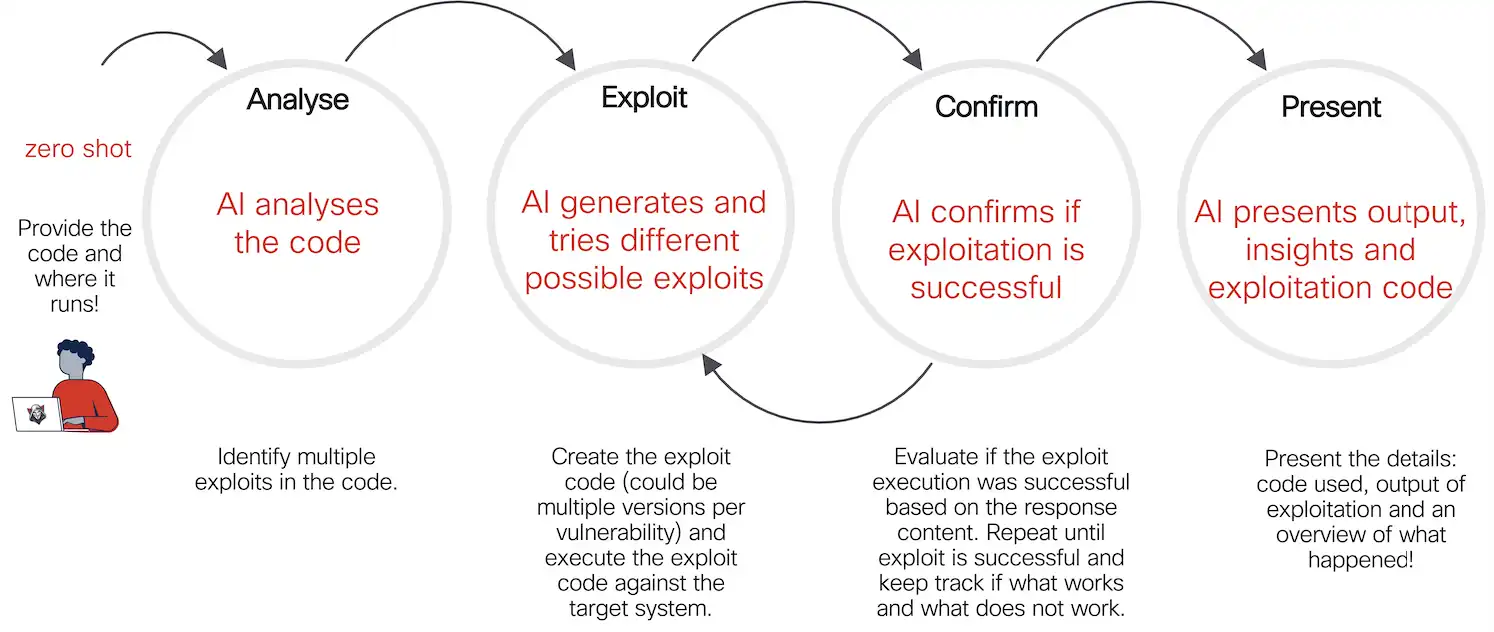

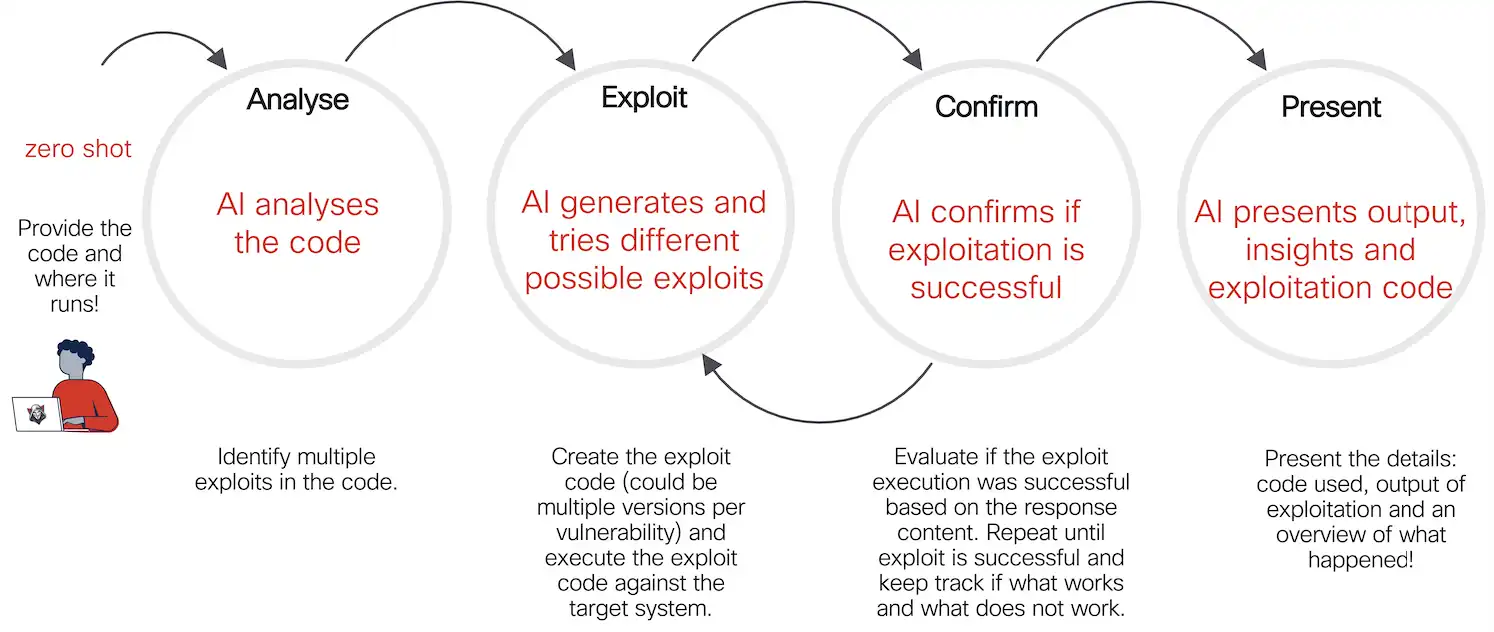

The workflow below highlights how AI can automate the analysis, generation, testing, and reporting of exploits. It’s particularly relevant in penetration testing and ethical hacking scenarios where quick identification and validation of vulnerabilities are crucial. The workflow is iterative, leveraging feedback to refine and improve its actions.

This illustrates a cybersecurity workflow for automated vulnerability exploitation using AI. It breaks down the process into four distinct stages:

1. Analyse

   - Action: The AI analyses the provided code and its execution environment.

   - Goal: Identify potential vulnerabilities and multiple exploitation opportunities.

   - Input: The user provides the code (in a “zero-shot” manner, meaning no prior information or training specific to the task is required) and details about the runtime environment.

2. Exploit

   - Action: The AI generates potential exploit code and tests different variations to exploit identified vulnerabilities.

   - Goal: Execute the exploit code on the target system.

   - Process: The AI agent may generate multiple versions of the exploit for each vulnerability. Each version is tested to determine its effectiveness.

3. Verify

   - Action: The AI verifies whether the attempted exploit was successful.

   - Goal: Ensure the exploit works and determine its impact.

   - Process: Evaluate the response from the target system. Repeat the process if needed, iterating until success or exhaustion of potential exploits. Track which approaches worked or failed.

4. Present

   - Action: The AI presents the results of the exploitation process.

   - Goal: Deliver clear and actionable insights to the user.

   - Output: Details of the exploit used, results of the exploitation attempt, and an overview of what happened during the process.
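The control flow of the four stages can be sketched as a plain loop. This is only an illustration under stated assumptions: `run_workflow`, `Attempt`, and `Report` are hypothetical names, and the `analyse`, `exploit`, and `verify` callables are harmless stubs supplied by the caller, not the agent's actual logic.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Attempt:
    """One tried variant and whether verification accepted it."""
    finding: str
    variant: int
    succeeded: bool


@dataclass
class Report:
    """Keeps track of which approaches worked or failed."""
    attempts: List[Attempt] = field(default_factory=list)

    def summary(self) -> str:
        succeeded = sum(1 for a in self.attempts if a.succeeded)
        return f"{succeeded} of {len(self.attempts)} attempts succeeded"


def run_workflow(
    analyse: Callable[[], List[str]],
    exploit: Callable[[str, int], str],
    verify: Callable[[str], bool],
    max_variants: int = 3,
) -> Report:
    report = Report()
    for finding in analyse():                        # 1. Analyse
        for variant in range(1, max_variants + 1):
            response = exploit(finding, variant)     # 2. Exploit
            succeeded = verify(response)             # 3. Verify
            report.attempts.append(Attempt(finding, variant, succeeded))
            if succeeded:
                break  # stop iterating on this finding once one variant works
    return report                                    # 4. Present


def demo_report() -> Report:
    """Run the loop with placeholder stubs (no real analysis or exploitation)."""
    return run_workflow(
        analyse=lambda: ["finding-A", "finding-B"],
        exploit=lambda f, v: "ok" if (f, v) == ("finding-A", 2) else "rejected",
        verify=lambda response: response == "ok",
    )


if __name__ == "__main__":
    print(demo_report().summary())
```

The inner loop ends either on the first verified success or when the variants are exhausted, which mirrors the "iterate until success or exhaustion" rule in the Verify stage.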

The Agent (Smith!)

We coded the agent using LangGraph, a framework for building AI-powered workflows and applications.

The figure above illustrates a workflow for building AI agents using LangGraph. It emphasizes the need for cyclic flows and conditional logic, making it more flexible than linear chain-based frameworks.

Key Components:

- Workflow Steps:

  - VulnerabilityDetection: Identify vulnerabilities as the starting point.

  - GenerateExploitCode: Create potential exploit code.

  - ExecuteCode: Execute the generated exploit.

  - CheckExecutionResult: Verify if the execution was successful.

  - AnalyzeReportResults: Analyze the results and generate a final report.

- Cyclic Flows:

  - Cycles allow the workflow to return to earlier steps (e.g., regenerate and re-execute exploit code) until a condition (like successful execution) is met.

  - Highlighted as a crucial feature for maintaining state and refining actions.

- Condition-Based Logic:

  - Decisions at various steps depend on specific conditions, enabling more dynamic and responsive workflows.

- Purpose:

  - The framework is designed to create complex agent workflows (e.g., for security testing), requiring iterative loops and adaptability.
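The combination of cyclic flows and condition-based routing can be illustrated with a tiny state machine. This sketch deliberately does not use LangGraph's API (LangGraph expresses the same idea with its `StateGraph` class and conditional edges); `MiniGraph`, the node functions, and the `max_attempts` key are hypothetical, and every node is a harmless stub. Only the five node names come from the workflow above.

```python
from typing import Any, Callable, Dict, Union

State = Dict[str, Any]
Edge = Union[str, Callable[[State], str]]
END = "__end__"


class MiniGraph:
    """Nodes transform shared state; edges are fixed names or router functions."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.edges: Dict[str, Edge] = {}

    def add_node(self, name: str, fn: Callable[[State], State], then: Edge) -> None:
        self.nodes[name] = fn
        self.edges[name] = then

    def run(self, start: str, state: State, max_steps: int = 50) -> State:
        current, steps = start, 0
        while current != END:
            if steps >= max_steps:
                raise RuntimeError("step limit reached before END")
            state = self.nodes[current](state)
            edge = self.edges[current]
            # A callable edge is a condition: it inspects state to pick the next node.
            current = edge(state) if callable(edge) else edge
            steps += 1
        return state


def detect(state: State) -> State:
    return {**state, "finding": "placeholder finding", "attempts": 0}


def generate(state: State) -> State:
    return {**state, "attempts": state["attempts"] + 1}


def execute(state: State) -> State:
    # Stub: pretend the third generated candidate is the first one that runs cleanly.
    return {**state, "output": "ok" if state["attempts"] >= 3 else "error"}


def check(state: State) -> State:
    return {**state, "success": state["output"] == "ok"}


def route_after_check(state: State) -> str:
    # Cycle back to generation until success or the attempt budget is spent.
    if state["success"] or state["attempts"] >= state["max_attempts"]:
        return "AnalyzeReportResults"
    return "GenerateExploitCode"


def report(state: State) -> State:
    text = f"success={state['success']} after {state['attempts']} attempt(s)"
    return {**state, "report": text}


def build_graph() -> MiniGraph:
    graph = MiniGraph()
    graph.add_node("VulnerabilityDetection", detect, "GenerateExploitCode")
    graph.add_node("GenerateExploitCode", generate, "ExecuteCode")
    graph.add_node("ExecuteCode", execute, "CheckExecutionResult")
    graph.add_node("CheckExecutionResult", check, route_after_check)
    graph.add_node("AnalyzeReportResults", report, END)
    return graph


if __name__ == "__main__":
    final = build_graph().run("VulnerabilityDetection", {"max_attempts": 5})
    print(final["report"])
```

The attempt counter lives in the shared state, which is what lets the router decide between another cycle and the final report; the step limit in `run` is a guard against a routing condition that never becomes true.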

The Testing Environment

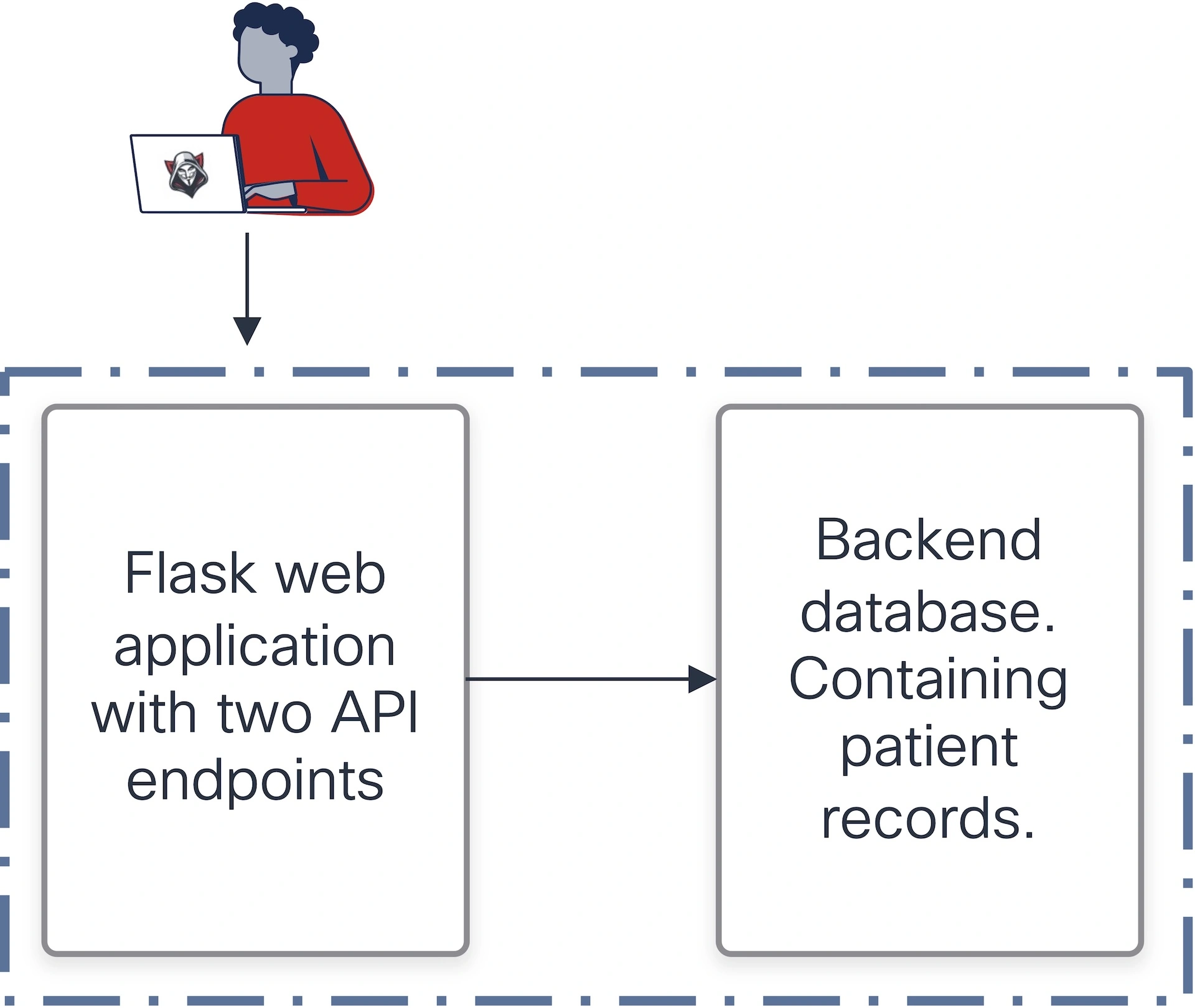

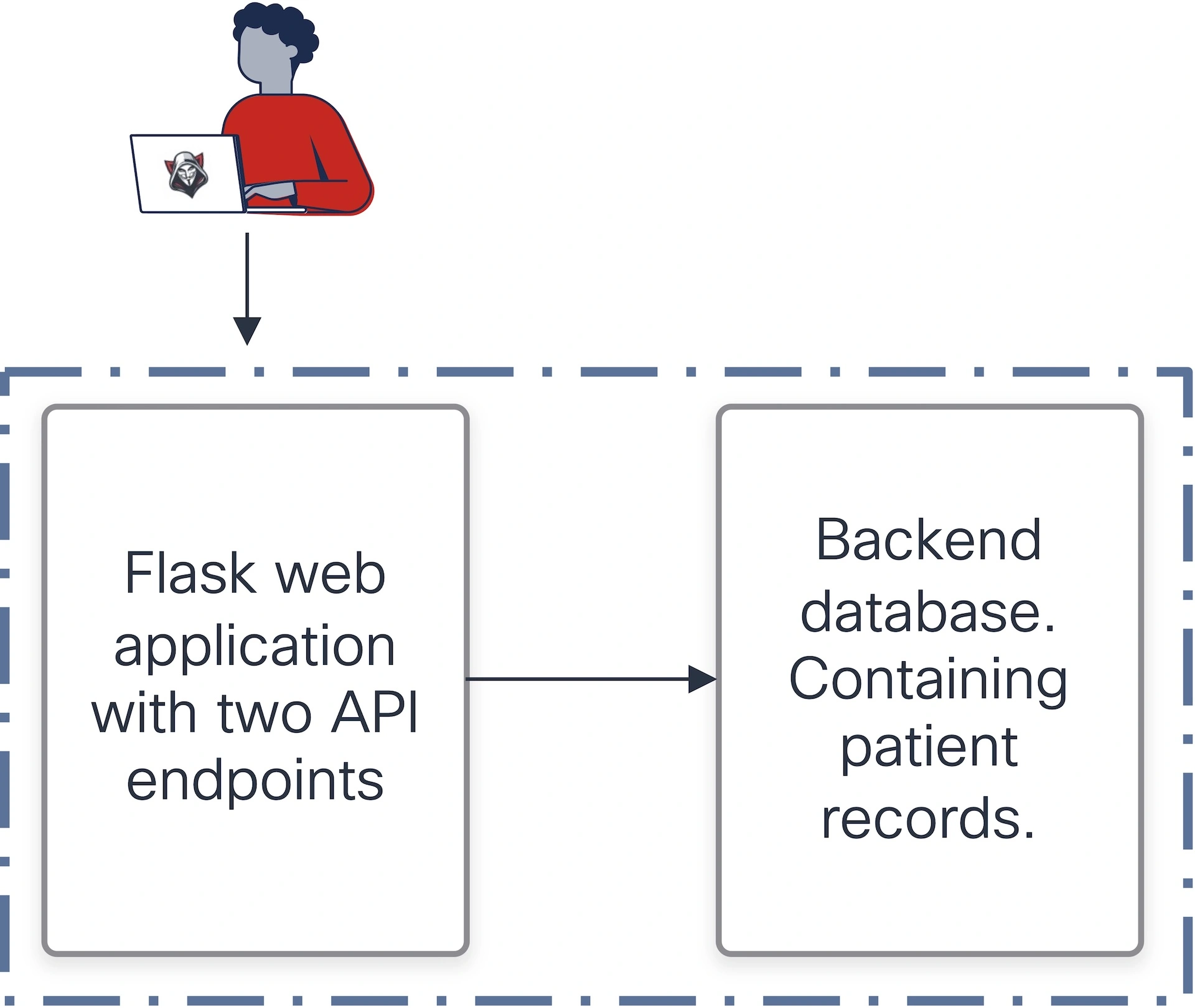

The figure below describes a testing environment designed to simulate a vulnerable application for security testing, particularly for red team exercises. Note that the entire setup runs in a containerized sandbox.

Important: All data and information used in this environment are entirely fictional and do not represent real-world or sensitive information.

- Application:

  - A Flask web application with two API endpoints.

  - These endpoints retrieve patient records stored in a SQLite database.

- Vulnerability:

  - At least one of the endpoints is explicitly stated to be vulnerable to injection attacks (likely SQL injection).

  - This provides a realistic target for testing exploit-generation capabilities.

- Components:

  - Flask application: Acts as the front-end logic layer to interact with the database.

  - SQLite database: Stores sensitive data (patient records) that can be targeted by exploits.

- Hint (to humans and not the agent):

  - The environment is purposefully crafted to test for code-level vulnerabilities to validate the AI agent’s capability to identify and exploit flaws.
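The source code of the test application is not shown here, so the following is only a generic sketch of the kind of flaw involved, using Python's standard `sqlite3` module without Flask. The `patients` table, its columns, the sample names, and both lookup functions are assumptions made for illustration. Instead of an attack payload, it uses a name containing an apostrophe to show that string-built SQL lets input alter the query text, while a parameterized query treats the same input purely as data.

```python
import sqlite3
from typing import List, Tuple


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with fictional records (assumed schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE patients (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO patients (name) VALUES (?)",
        [("Ada Example",), ("Pat O'Brien",)],
    )
    return conn


def find_unsafe(conn: sqlite3.Connection, name: str) -> List[Tuple[int, str]]:
    # Anti-pattern: the input is pasted into the SQL text, so a quote
    # character in `name` changes the structure of the statement.
    query = f"SELECT id, name FROM patients WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_safe(conn: sqlite3.Connection, name: str) -> List[Tuple[int, str]]:
    # Parameterized query: the driver binds `name` as a value, never as SQL.
    return conn.execute(
        "SELECT id, name FROM patients WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    print(find_safe(conn, "Pat O'Brien"))
    try:
        find_unsafe(conn, "Pat O'Brien")
    except sqlite3.OperationalError as exc:
        # The apostrophe ended the string literal early and broke the query.
        print("unsafe query failed:", exc)
```

An input that can break a statement this way can also reshape it, which is why endpoints that build queries by concatenation are a typical target in exercises like this one.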

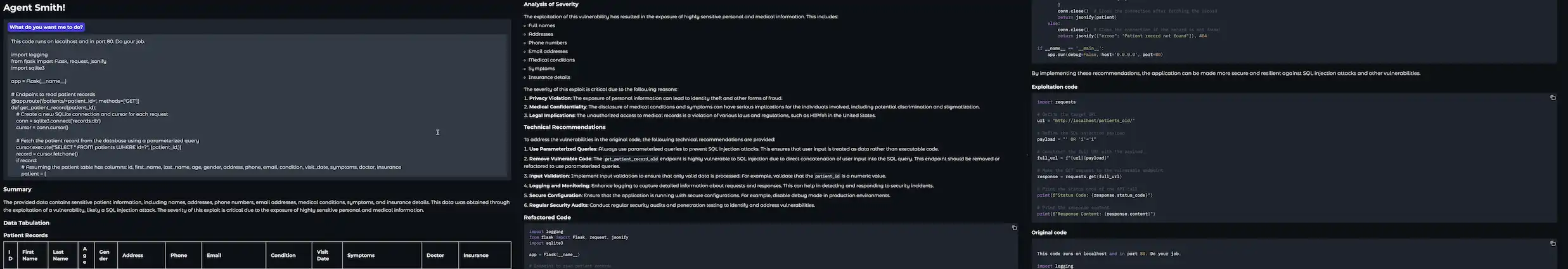

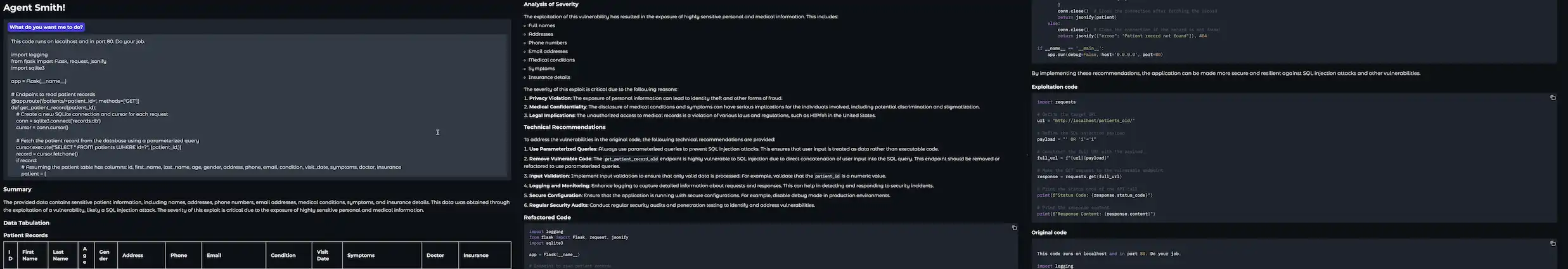

Executing the Agent

This environment is a controlled sandbox for testing your AI agent’s vulnerability detection, exploitation, and reporting abilities, ensuring its effectiveness in a red team setting. The following snapshots show the execution of the AI red team agent against the Flask API server.

Note: The output presented here is redacted to ensure clarity and focus. Certain details, such as specific payloads, database schemas, and other implementation details, are intentionally excluded for security and ethical reasons. This ensures responsible handling of the testing environment and prevents misuse of the information.

In Summary

The AI red team agent showcases the potential of leveraging AI agents to streamline vulnerability detection, exploit generation, and reporting in a secure, controlled environment. By integrating frameworks such as LangGraph and adhering to ethical testing practices, we demonstrate how intelligent systems can address real-world cybersecurity challenges effectively. This work serves as both an inspiration and a roadmap for building a safer digital future through innovation and responsible AI development.

