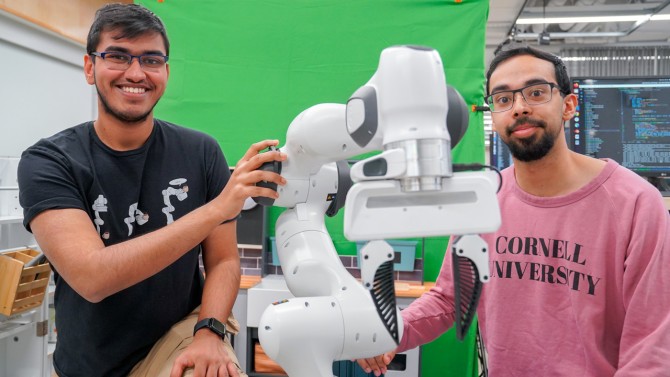

Kushal Kedia (left) and Prithwish Dan (right) are members of the development team behind RHyME, a system that allows robots to learn tasks by watching a single how-to video.

By Louis DiPietro

Cornell researchers have developed a new robotic framework powered by artificial intelligence, called RHyME (Retrieval for Hybrid Imitation under Mismatched Execution), that allows robots to learn tasks by watching a single how-to video. RHyME could fast-track the development and deployment of robotic systems by significantly reducing the time, energy and money needed to train them, the researchers said.

“One of the annoying things about working with robots is collecting so much data on the robot doing different tasks,” said Kushal Kedia, a doctoral student in the field of computer science and lead author of a corresponding paper on RHyME. “That’s not how humans do tasks. We look at other people as inspiration.”

Kedia will present the paper, “One-Shot Imitation under Mismatched Execution,” in May at the Institute of Electrical and Electronics Engineers’ International Conference on Robotics and Automation, in Atlanta.

Home robot assistants are still a long way off, because it is very difficult to train robots to deal with all the potential scenarios they could encounter in the real world. To get robots up to speed, researchers like Kedia are training them with what amounts to how-to videos: human demonstrations of various tasks in a lab setting. The hope with this approach, a branch of machine learning called “imitation learning,” is that robots will learn a sequence of tasks faster and be able to adapt to real-world environments.

“Our work is like translating French to English: we’re translating any given task from human to robot,” said senior author Sanjiban Choudhury, assistant professor of computer science in the Cornell Ann S. Bowers College of Computing and Information Science.

This translation task still faces a broader challenge, however: Humans move too fluidly for a robot to track and mimic, and training robots with video requires gobs of it. Further, video demonstrations (of, say, picking up a napkin or stacking dinner plates) must be performed slowly and flawlessly, since any mismatch in actions between the video and the robot has historically spelled doom for robot learning, the researchers said.

“If a human moves in a way that’s any different from how a robot moves, the method immediately falls apart,” Choudhury said. “Our thinking was, ‘Can we find a principled way to deal with this mismatch between how humans and robots do tasks?’”

RHyME is the team’s answer: a scalable approach that makes robots less finicky and more adaptive. It trains a robotic system to store previous examples in its memory bank and, when performing a task it has viewed only once, to connect the dots by drawing on videos it has already seen. For example, a RHyME-equipped robot shown a video of a human fetching a mug from the counter and placing it in a nearby sink will comb its bank of videos and draw inspiration from similar actions, like grasping a cup and lowering a utensil.
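The retrieval idea described above can be illustrated with a toy nearest-neighbor lookup. This is a minimal sketch under stated assumptions, not RHyME's actual algorithm: the memory bank, the three-number feature vectors, and the names `MEMORY_BANK`, `cosine_similarity` and `retrieve` are all hypothetical, and cosine similarity is a generic stand-in for whatever matching the real system uses. It shows how each segment of a single human video could be matched to the most similar clip already stored in memory.

```python
import math

# Hypothetical memory bank: each entry pairs a short robot clip label with a
# made-up feature vector. A real system would use learned video embeddings.
MEMORY_BANK = {
    "grasp cup": [0.9, 0.1, 0.0],
    "lower utensil": [0.1, 0.9, 0.1],
    "open drawer": [0.0, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_segments, bank, k=1):
    """For each segment of the single human video, return the names of the
    k most similar clips in the memory bank (plain nearest-neighbor search)."""
    results = []
    for segment in query_segments:
        ranked = sorted(
            bank,
            key=lambda name: cosine_similarity(segment, bank[name]),
            reverse=True,
        )
        results.append(ranked[:k])
    return results


# Hypothetical embeddings for two segments of a "fetch mug, place in sink" video.
human_video = [[0.8, 0.2, 0.1], [0.2, 0.8, 0.0]]
print(retrieve(human_video, MEMORY_BANK))  # [['grasp cup'], ['lower utensil']]
```

Matching segment by segment, rather than the whole video at once, loosely mirrors the article's description of piecing together a task seen only once from related sub-actions such as grasping a cup and lowering a utensil.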

RHyME paves the way for robots to learn multiple-step sequences while significantly lowering the amount of robot data needed for training, the researchers said. They claim that RHyME requires just 30 minutes of robot data; in a lab setting, robots trained using the system achieved a more than 50% increase in task success compared with previous methods.

“This work is a departure from how robots are programmed today. The status quo of programming robots is thousands of hours of tele-operation to teach the robot how to do tasks. That’s just impossible,” Choudhury said. “With RHyME, we’re moving away from that and learning to train robots in a more scalable way.”

This research was supported by Google, OpenAI, the U.S. Office of Naval Research and the National Science Foundation.

Learn the work in full

One-Shot Imitation under Mismatched Execution, Kushal Kedia, Prithwish Dan, Angela Chao, Maximus Adrian Pace, Sanjiban Choudhury.

Cornell University