Model Context Protocol (MCP), often described as the “USB-C for AI agents”, is the de facto standard for connecting large language model (LLM) assistants with third-party tools and data. It allows AI agents to plug into various services, run commands, and share context seamlessly. However, it is not secure by default. In fact, if you’ve been indiscriminately hooking your AI agent into arbitrary MCP servers, you may have unintentionally “opened a side-channel into your shell, secrets, or infrastructure”. In this article, we’ll explore the security risks in MCP and how they can be exploited, along with their risk levels, impacts, and mitigation strategies. We’ll also draw parallels to classic security issues in software and AI to put these risks in context.

Recent Findings

A recent study conducted by Leidos highlights significant security risks in using Model Context Protocol (MCP). The researchers demonstrate that attackers can exploit MCP to execute malicious code, gain unauthorized remote access, and steal credentials by manipulating LLMs like Claude and Llama. Both Claude and Llama-3.3-70B-Instruct are susceptible to the three attacks described in the paper. To address these threats, they introduced a tool that uses AI agents to identify vulnerabilities in MCP servers and suggest remedies. Their work underscores the need for proactive security measures in AI agent workflows.

1. Command Injection

AI agents connected to MCP tools can be tricked into executing harmful commands simply by manipulating the input prompt. If the model passes user input directly into shell commands, SQL queries, or system functions, you’ve got remote code execution. This vulnerability is reminiscent of traditional injection attacks but is exacerbated in AI contexts by the dynamic nature of prompt processing. Mitigation strategies include rigorous input sanitization, the use of parameterized queries, and strict execution boundaries that ensure user inputs cannot alter the intended command structure.

Impact: Remote code execution, data leaks.

Mitigation: Sanitize inputs, never run raw strings, enforce execution boundaries.
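As a minimal sketch of the "never run raw strings" advice, the Python snippet below shows a parameterized SQL query and a subprocess call that passes arguments as a list instead of a shell string. The `users` table and the function names `find_user` and `run_echo` are hypothetical and exist only for illustration; they are not part of MCP.

```python
import sqlite3
import subprocess
import sys


def find_user(conn: sqlite3.Connection, name: str) -> list:
    # The ? placeholder binds `name` as data, so it cannot change the SQL structure.
    cursor = conn.execute("SELECT id, name FROM users WHERE name = ?", (name,))
    return cursor.fetchall()


def run_echo(text: str) -> str:
    # Arguments are passed as a list and no shell is involved, so characters
    # such as ';' or '|' inside `text` are never interpreted as shell syntax.
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", text],
        capture_output=True,
        text=True,
        timeout=5,
        check=True,
    )
    return result.stdout.strip()
```

With this shape, an input such as `alice' OR '1'='1` is looked up as a literal name and matches nothing, and `hi; echo pwned` is echoed back as plain text.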

2. Tool Poisoning

MCP tools aren’t always what they seem. A poisoned tool can include misleading documentation or hidden code that subtly alters how the agent behaves. Because LLMs treat tool descriptions as trustworthy, a malicious docstring can embed secret instructions, such as sending private keys or leaking data. This attack exploits the trust AI agents place in tool descriptions. To counteract it, inspect tool sources meticulously, expose full metadata to users for transparency, and sandbox tool execution to isolate and monitor tool behavior within controlled environments.

Impact: Agents can leak secrets or run unauthorized tasks.

Mitigation: Vet tool sources, show users full tool metadata, sandbox tools.
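One way to start vetting tool metadata is sketched below in Python: a heuristic scan of a tool description for instruction-like phrases, plus a fingerprint that lets you notice when a previously reviewed description changes. The pattern list and the names `flag_description` and `fingerprint` are assumptions made for this example. A regex scan is easy to evade, so it complements manual review and sandboxing and does not replace them.

```python
import hashlib
import json
import re

# Hypothetical heuristics; a real deployment would tune and extend these.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior) instructions",
    r"do not (tell|mention|show)",
    r"\.ssh|id_rsa|\.env\b|api[_ -]?key",
    r"<\s*(important|system|secret)\s*>",
]


def flag_description(description: str) -> list:
    """Return the patterns that match a tool description (case-insensitive)."""
    return [p for p in SUSPICIOUS_PATTERNS if re.search(p, description, re.IGNORECASE)]


def fingerprint(tool: dict) -> str:
    """Stable SHA-256 over the tool's metadata, used to detect later changes."""
    canonical = json.dumps(tool, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the fingerprint at review time and comparing it on every load means a description that is silently rewritten after approval no longer passes unnoticed.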

3. Server-Sent Events Problem

Server-Sent Events (SSE) keep tool connections open for live data, but that always-on link is a juicy attack vector. A hijacked stream or timing glitch can lead to data injection, replay attacks, or session bleed. In fast-paced agent workflows, that’s a huge liability. Mitigation measures include enforcing HTTPS, validating the origin of incoming connections, and implementing strict timeouts to minimize the window of opportunity for potential attacks.

Impact: Data leakage, session hijacking, DoS.

Mitigation: Use HTTPS, validate origins, implement timeouts.
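The origin and timeout checks can be sketched in a few lines of Python. The allowlist entry `agent.example.com`, the 30-second idle limit, and the function names are illustrative assumptions; the checks would sit in whatever server framework actually accepts the SSE connection.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical allowlist of (scheme, host, port) tuples permitted to open a stream.
ALLOWED_ORIGINS = {("https", "agent.example.com", 443)}


def is_allowed_origin(origin_header: Optional[str]) -> bool:
    """Accept only HTTPS origins that exactly match an allowlisted host and port."""
    if not origin_header:
        return False
    parts = urlsplit(origin_header)
    try:
        port = parts.port or (443 if parts.scheme == "https" else 80)
    except ValueError:  # raised by urlsplit for a malformed port
        return False
    return (parts.scheme, parts.hostname, port) in ALLOWED_ORIGINS


def stream_expired(last_event_at: float, now: float, idle_timeout_s: float = 30.0) -> bool:
    """True when a stream has been idle longer than the allowed window."""
    return now - last_event_at > idle_timeout_s
```

Matching on the parsed tuple instead of a string prefix avoids accepting look-alike hosts such as `agent.example.com.evil.test`.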

4. Privilege Escalation

One rogue tool can override or impersonate another and eventually gain unintended access. For example, a fake plugin could mimic your Slack integration and trick the agent into leaking messages. If access scopes aren’t enforced tightly, a low-trust service can escalate to admin-level privileges. To prevent this, it’s crucial to isolate tool permissions, rigorously validate tool identities, and enforce authentication for every inter-tool communication, ensuring that each component operates within its designated access scope.

Impact: System-wide access, data corruption.

Mitigation: Isolate tool permissions, validate tool identity, enforce authentication on every call.
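A rough Python sketch of "authenticate every call, then check scope" follows. The registry contents, the demo keys, and the `sign` and `authorize` helpers are hypothetical; real deployments would keep keys in a secret store and would likely use an established token format instead of this hand-rolled HMAC scheme.

```python
import hashlib
import hmac

# Hypothetical registry: each tool has its own key and an explicit scope set.
TOOL_REGISTRY = {
    "slack-reader": {"key": b"demo-key-slack", "scopes": {"slack:read"}},
    "notes-writer": {"key": b"demo-key-notes", "scopes": {"notes:write"}},
}


def sign(tool_name: str, payload: bytes, key: bytes) -> str:
    """HMAC-SHA256 over the tool name and payload, hex encoded."""
    return hmac.new(key, tool_name.encode() + b"\n" + payload, hashlib.sha256).hexdigest()


def authorize(tool_name: str, scope: str, payload: bytes, signature: str) -> bool:
    """Allow a call only if the caller proves its identity AND holds the scope."""
    entry = TOOL_REGISTRY.get(tool_name)
    if entry is None:
        return False
    expected = sign(tool_name, payload, entry["key"])
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        return False
    return scope in entry["scopes"]
```

A tool that claims to be `slack-reader` but signs with a different key fails the identity check, and a genuine `slack-reader` that asks for `notes:write` fails the scope check.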

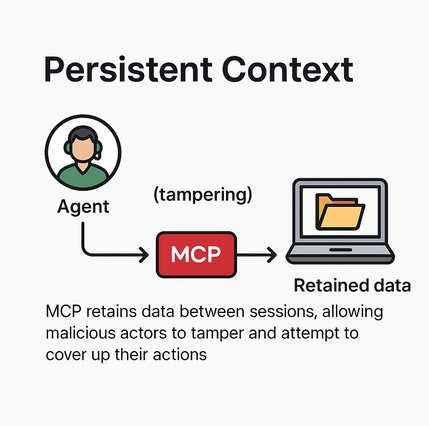

5. Persistent Context

MCP sessions often store previous inputs and tool results, which can linger longer than intended. That’s a problem when sensitive data gets reused across unrelated sessions, or when attackers poison the context over time to manipulate results. Mitigation involves clearing session data regularly, limiting how long contextual information is retained, and isolating user sessions to prevent cross-contamination of data.

Impact: Context leakage, poisoned memory, cross-user exposure.

Mitigation: Clear session data, limit retention, isolate user interactions.
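To make retention limits and session isolation concrete, here is a small Python sketch of a context store keyed by session ID with a time-to-live. The class name `SessionContextStore` and its methods are invented for this example, and the store lives in memory only; it illustrates the idea and is not how any particular MCP client manages context.

```python
import time
from typing import Callable, Dict, List, Tuple


class SessionContextStore:
    """Per-session context with a retention limit (in-memory sketch)."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._data: Dict[str, List[Tuple[float, str]]] = {}

    def append(self, session_id: str, item: str) -> None:
        # Each item is stamped so it can expire independently.
        self._data.setdefault(session_id, []).append((self._clock(), item))

    def read(self, session_id: str) -> List[str]:
        # Only this session's items are visible, and only while they are fresh.
        cutoff = self._clock() - self._ttl
        fresh = [(t, item) for t, item in self._data.get(session_id, []) if t >= cutoff]
        if fresh:
            self._data[session_id] = fresh
        else:
            self._data.pop(session_id, None)
        return [item for _, item in fresh]

    def clear(self, session_id: str) -> None:
        # Explicit wipe, for example when the user logs out.
        self._data.pop(session_id, None)
```

Injecting the clock keeps expiry testable, and reading through `session_id` means one user's tool results are never returned to another session.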

6. Server Data Takeover

In the worst-case scenario, one compromised tool causes a domino effect across all connected systems. If a malicious server can trick the agent into piping data from other tools (like WhatsApp, Notion, or AWS), it becomes a pivot point for total compromise. Preventative measures include adopting a zero-trust architecture, using scoped tokens to limit access permissions, and establishing emergency revocation protocols to swiftly disable compromised components and halt the spread of the attack.

Impact: Multi-system breach, credential theft, total compromise.

Mitigation: Zero-trust architecture, scoped tokens, emergency revocation protocols.
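Scoped tokens and a kill switch can be illustrated with the short Python sketch below. `TokenBroker` and its methods are hypothetical names, and the token table is held in memory purely for demonstration; a production system would rely on its identity provider or secret manager for issuance and revocation.

```python
import secrets
from typing import Dict, FrozenSet, Set, Tuple


class TokenBroker:
    """Issues per-server tokens limited to explicit scopes, with bulk revocation."""

    def __init__(self) -> None:
        self._tokens: Dict[str, Tuple[str, FrozenSet[str]]] = {}

    def issue(self, server: str, scopes: Set[str]) -> str:
        # Each server gets its own random token bound to a fixed scope set.
        token = secrets.token_urlsafe(32)
        self._tokens[token] = (server, frozenset(scopes))
        return token

    def allows(self, token: str, scope: str) -> bool:
        # Unknown or revoked tokens are denied by default.
        entry = self._tokens.get(token)
        return entry is not None and scope in entry[1]

    def revoke_server(self, server: str) -> int:
        # Kill switch: drop every token owned by a compromised server.
        doomed = [t for t, (owner, _) in self._tokens.items() if owner == server]
        for t in doomed:
            del self._tokens[t]
        return len(doomed)
```

Because every token names a single server and a narrow scope set, a compromised server cannot reuse its token against other tools, and one `revoke_server` call cuts it off.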

Risk Evaluation

| Vulnerability | Severity | Attack Vector | Impact | Recommended Mitigation |
|---|---|---|---|---|
| Command Injection | Moderate | Malicious prompt input to shell/SQL tools | Remote Code Execution, Data Leak | Input sanitization, parameterized queries, strict command guards |
| Tool Poisoning | Severe | Malicious docstrings or hidden tool logic | Secret Leaks, Unauthorized Actions | Vet tool sources, expose full metadata, sandbox tool execution |
| Server-Sent Events | Moderate | Persistent open connections (SSE/WebSocket) | Session Hijack, Data Injection | Use HTTPS, enforce timeouts, validate origins |
| Privilege Escalation | Severe | One tool impersonating or misusing another | Unauthorized Access, System Abuse | Isolate scopes, verify tool identity, restrict cross-tool communication |
| Persistent Context | Low/Moderate | Stale session data or poisoned memory | Information Leakage, Behavioral Drift | Clear session data regularly, limit context lifetime, isolate user sessions |
| Server Data Takeover | Severe | One compromised server pivoting across tools | Multi-system Breach, Credential Theft | Zero-trust setup, scoped tokens, kill switch on compromise |

Conclusion

MCP is a bridge between LLMs and the real world. But right now, it’s more of a security minefield than a highway. As AI agents become more capable, these vulnerabilities will only grow more dangerous. Developers need to adopt secure defaults, audit every tool, and treat MCP servers like third-party code, because that’s exactly what they are. The community should also push for secure protocols so that future MCP integrations rest on safe infrastructure.

Frequently Asked Questions

A. MCP is like the USB-C for AI agents, letting them connect to tools and services, but if you don’t secure it, you’re basically handing attackers the keys to your system.

A. If user input goes straight into a shell or SQL query without checks, it’s game over. Sanitize everything and don’t trust raw input.

A. A malicious tool can hide harmful instructions in its description, and your agent may follow them like gospel. Always vet and sandbox your tools.

A. Yep, that’s privilege escalation. One rogue tool can impersonate or misuse others unless you tightly lock down permissions and identities.

A. One compromised server can domino into a full system breach, for example stolen credentials, leaked data, and total AI meltdown.
