Building AI agents that interact with the external world.

One of the key applications of LLMs is to enable programs (agents) that can interpret user intent, reason about it, and take relevant actions accordingly.

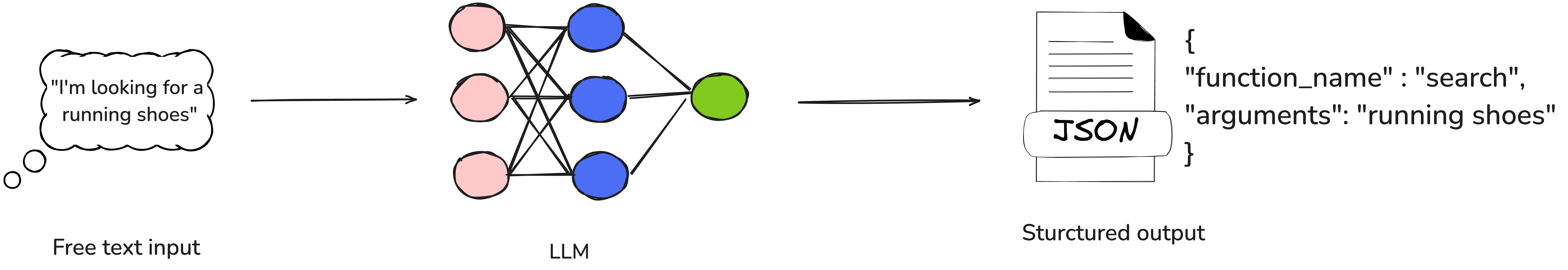

Function calling is a capability that enables LLMs to go beyond simple text generation by interacting with external tools and real-world applications. With function calling, an LLM can analyze a natural language input, extract the user's intent, and generate a structured output containing the function name and the necessary arguments to invoke that function.

It's important to emphasize that when using function calling, the LLM itself doesn't execute the function. Instead, it identifies the appropriate function, gathers all required parameters, and provides the information in a structured JSON format. This JSON output can then be easily deserialized into a function call in Python (or any other programming language) and executed within the program's runtime environment.
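As a minimal sketch of that last step, the snippet below parses a hand-written JSON payload and turns it into an ordinary Python call. The payload shape, the `search_products` stub, and the `available_functions` mapping are assumptions made for illustration; the exact JSON an LLM provider returns will differ.

```python
import json


def search_products(keywords):
    """Hypothetical stand-in for a real product search API."""
    return f"Searching for: {', '.join(keywords)}"


# Hand-written example of the structured output an LLM might produce for
# "I'm looking for a blue running shirt" (not a real model response).
llm_output = '{"name": "search_products", "arguments": {"keywords": ["blue", "running", "shirt"]}}'

# Deserialize the JSON text into plain Python data.
call = json.loads(llm_output)

# The program, not the LLM, decides how a function name maps to real code.
available_functions = {"search_products": search_products}
function = available_functions[call["name"]]

# Execute the function inside our own runtime with the extracted arguments.
result = function(**call["arguments"])
print(result)
```

The LLM only contributes the name and the arguments; the lookup and the call happen entirely in the host program.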

Figure 1: natural language request to structured output

To see this in action, we'll build a Shopping Agent that helps users discover and shop for fashion products. If the user's intent is unclear, the agent will prompt for clarification to better understand their needs.

For example, if a user says "I'm looking for a shirt" or "Show me details about the blue running shirt," the shopping agent will invoke the appropriate API (whether it's searching for products using keywords or retrieving specific product details) to fulfill the request.

Scaffold of a typical agent

Let's write a scaffold for building this agent. (All code examples are in Python.)

    from typing import List


    class ShoppingAgent:
        def run(self, user_message: str, conversation_history: List[dict]) -> str:
            # Refuse early if the message looks like a prompt injection attempt.
            if self.is_intent_malicious(user_message):
                return "Sorry! I cannot process this request."

            # Ask the LLM which action to take, then execute it.
            action = self.decide_next_action(user_message, conversation_history)
            return action.execute()

        def decide_next_action(self, user_message: str, conversation_history: List[dict]):
            pass

        def is_intent_malicious(self, message: str) -> bool:
            pass

Based on the user's input and the conversation history, the shopping agent selects one of a predefined set of possible actions, executes it, and returns the result to the user. It then continues the conversation until the user's goal is achieved.

Now, let's look at the possible actions the agent can take:

    class Search():
        keywords: List[str]

        def execute(self) -> str:
            # use SearchClient to fetch search results based on keywords
            pass


    class GetProductDetails():
        product_id: str

        def execute(self) -> str:
            # use SearchClient to fetch details of a specific product based on product_id
            pass


    class Clarify():
        question: str

        def execute(self) -> str:
            pass

Unit tests

Let's start by writing some unit tests to validate this functionality before implementing the full code. This will help ensure that our agent behaves as expected while we flesh out its logic.

    def test_next_action_is_search():
        agent = ShoppingAgent()
        action = agent.decide_next_action("I'm looking for a laptop.", [])
        assert isinstance(action, Search)
        assert 'laptop' in action.keywords


    def test_next_action_is_product_details():
        agent = ShoppingAgent()
        # The earlier assistant reply carries the product ID the LLM should pick up.
        conversation_history = [
            {"role": "assistant", "content": "Found: Nike dry fit T Shirt (ID: p1)"}
        ]
        action = agent.decide_next_action("Can you tell me more about the shirt?", conversation_history)
        assert isinstance(action, GetProductDetails)
        assert action.product_id == "p1"


    def test_next_action_is_clarify():
        agent = ShoppingAgent()
        action = agent.decide_next_action("Something something", [])
        assert isinstance(action, Clarify)

Let's implement the decide_next_action function using OpenAI's API and a GPT model. The function will take user input and conversation history, send it to the model, and extract the action type along with any necessary parameters.

    import json  # module-level import, needed to parse the tool call arguments


    def decide_next_action(self, user_message: str, conversation_history: List[dict]):
        response = self.client.chat.completions.create(
            model="gpt-4-turbo-preview",
            messages=[
                {"role": "system", "content": SYSTEM_PROMPT},
                *conversation_history,
                {"role": "user", "content": user_message}
            ],
            tools=[
                {"type": "function", "function": SEARCH_SCHEMA},
                {"type": "function", "function": PRODUCT_DETAILS_SCHEMA},
                {"type": "function", "function": CLARIFY_SCHEMA}
            ]
        )

        tool_call = response.choices[0].message.tool_calls[0]
        # The arguments arrive as a JSON string; parse them as data instead of eval-ing them.
        function_args = json.loads(tool_call.function.arguments)

        if tool_call.function.name == "search_products":
            return Search(**function_args)
        elif tool_call.function.name == "get_product_details":
            return GetProductDetails(**function_args)
        elif tool_call.function.name == "clarify_request":
            return Clarify(**function_args)

Here, we are calling OpenAI's chat completion API with a system prompt that directs the LLM, in this case gpt-4-turbo-preview, to determine the appropriate action and extract the necessary parameters based on the user's message and the conversation history. The LLM returns the output as a structured JSON response, which is then used to instantiate the corresponding action class. This class executes the action by invoking the necessary APIs, such as search and get_product_details.

System prompt

Now, let's take a closer look at the system prompt:

    SYSTEM_PROMPT = """You are a shopping assistant. Use these functions:
    1. search_products: When user wants to find products (e.g., "show me shirts")
    2. get_product_details: When user asks about a specific product ID (e.g., "tell me about product p1")
    3. clarify_request: When user's request is unclear"""

With the system prompt, we provide the LLM with the necessary context for our task. We define its role as a shopping assistant, specify the expected output format (functions), and include constraints and special instructions, such as asking for clarification when the user's request is unclear.

This is a basic version of the prompt, sufficient for our example. However, in real-world applications, you might want to explore more sophisticated ways of guiding the LLM. Techniques like one-shot prompting (where a single example pairs a user message with the corresponding action) or few-shot prompting (where multiple examples cover different scenarios) can significantly enhance the accuracy and reliability of the model's responses.
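One way to apply few-shot prompting here is to append example pairs to the system prompt before each call. The sketch below is an assumption about how that could look, not part of the agent shown in this article: `BASE_PROMPT`, `FEW_SHOT_EXAMPLES`, and `build_messages` are hypothetical names, and the example pairs are made up.

```python
from typing import Dict, List, Sequence, Tuple

# Placeholder for the real system prompt.
BASE_PROMPT = "You are a shopping assistant."

# Hypothetical example pairs: a user message and the action we expect for it.
FEW_SHOT_EXAMPLES: List[Tuple[str, str]] = [
    ("show me red sneakers", 'search_products(keywords=["red", "sneakers"])'),
    ("tell me about product p1", 'get_product_details(product_id="p1")'),
    ("hmm", 'clarify_request(question="What are you looking for today?")'),
]


def build_messages(
    user_message: str,
    conversation_history: List[Dict[str, str]],
    examples: Sequence[Tuple[str, str]] = FEW_SHOT_EXAMPLES,
) -> List[Dict[str, str]]:
    """Build the chat messages, with the examples embedded in the system prompt."""
    example_lines = [f'User: "{text}" -> {action}' for text, action in examples]
    system_content = BASE_PROMPT + "\n\nExamples:\n" + "\n".join(example_lines)
    return [
        {"role": "system", "content": system_content},
        *conversation_history,
        {"role": "user", "content": user_message},
    ]


few_shot = build_messages("I need a jacket", [])
one_shot = build_messages("I need a jacket", [], examples=FEW_SHOT_EXAMPLES[:1])
```

Passing a single pair gives one-shot prompting; passing several that cover search, product details, and clarification gives few-shot prompting.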

This part of the Chat Completions API call defines the available functions that the LLM can invoke, specifying their structure and purpose:

    tools=[
        {"type": "function", "function": SEARCH_SCHEMA},
        {"type": "function", "function": PRODUCT_DETAILS_SCHEMA},
        {"type": "function", "function": CLARIFY_SCHEMA}
    ]

Each entry represents a function the LLM can call, detailing its expected parameters and usage according to the OpenAI API specification.

Now, let's take a closer look at each of these function schemas.

    SEARCH_SCHEMA = {
        "name": "search_products",
        "description": "Search for products using keywords",
        "parameters": {
            "type": "object",
            "properties": {
                "keywords": {
                    "type": "array",
                    "items": {"type": "string"},
                    "description": "Keywords to search for"
                }
            },
            "required": ["keywords"]
        }
    }

    PRODUCT_DETAILS_SCHEMA = {
        "name": "get_product_details",
        "description": "Get detailed information about a specific product",
        "parameters": {
            "type": "object",
            "properties": {
                "product_id": {
                    "type": "string",
                    "description": "Product ID to get details for"
                }
            },
            "required": ["product_id"]
        }
    }

    CLARIFY_SCHEMA = {
        "name": "clarify_request",
        "description": "Ask user for clarification when request is unclear",
        "parameters": {
            "type": "object",
            "properties": {
                "question": {
                    "type": "string",
                    "description": "Question to ask user for clarification"
                }
            },
            "required": ["question"]
        }
    }

With this, we define each function that the LLM can invoke, along with its parameters, such as keywords for search_products and product_id for get_product_details. We also specify which parameters are mandatory to ensure proper function execution.

Additionally, the description field provides extra context to help the LLM understand the function's purpose, especially when the function name alone isn't self-explanatory.

With all the key components in place, let's now fully implement the run function of the ShoppingAgent class. This function will handle the end-to-end flow: taking user input, deciding the next action using OpenAI's function calling, executing the corresponding API calls, and returning the response to the user.

Here's the complete implementation of the agent:

    import json
    from typing import List

    from openai import OpenAI


    class ShoppingAgent:
        def __init__(self):
            self.client = OpenAI()

        def run(self, user_message: str, conversation_history: List[dict] = None) -> str:
            if self.is_intent_malicious(user_message):
                return "Sorry! I cannot process this request."

            try:
                action = self.decide_next_action(user_message, conversation_history or [])
                return action.execute()
            except Exception as e:
                return f"Sorry, I encountered an error: {str(e)}"

        def decide_next_action(self, user_message: str, conversation_history: List[dict]):
            response = self.client.chat.completions.create(
                model="gpt-4-turbo-preview",
                messages=[
                    {"role": "system", "content": SYSTEM_PROMPT},
                    *conversation_history,
                    {"role": "user", "content": user_message}
                ],
                tools=[
                    {"type": "function", "function": SEARCH_SCHEMA},
                    {"type": "function", "function": PRODUCT_DETAILS_SCHEMA},
                    {"type": "function", "function": CLARIFY_SCHEMA}
                ]
            )

            tool_call = response.choices[0].message.tool_calls[0]
            # Parse the JSON arguments as data; never eval model output.
            function_args = json.loads(tool_call.function.arguments)

            # Explicit mapping from function name to action class.
            if tool_call.function.name == "search_products":
                return Search(**function_args)
            elif tool_call.function.name == "get_product_details":
                return GetProductDetails(**function_args)
            elif tool_call.function.name == "clarify_request":
                return Clarify(**function_args)

        def is_intent_malicious(self, message: str) -> bool:
            pass

Restricting the agent's action space

It's essential to restrict the agent's action space using explicit conditional logic, as demonstrated in the above code block. While dynamically invoking functions using eval might seem convenient, it poses significant security risks, including prompt injections that could lead to unauthorized code execution. To safeguard the system from potential attacks, always enforce strict control over which functions the agent can invoke.
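To make the risk concrete, here is a small sketch of an allowlist-based builder that parses arguments with `json.loads` and rejects anything unexpected. The `ALLOWED_ACTIONS` table and `build_action` helper are hypothetical names introduced for this illustration, and the two action classes are trimmed-down stand-ins for the ones in this article.

```python
import json


class Search:
    def __init__(self, keywords):
        self.keywords = keywords


class Clarify:
    def __init__(self, question):
        self.question = question


# Explicit allowlist: only these names can ever be turned into actions.
ALLOWED_ACTIONS = {"search_products": Search, "clarify_request": Clarify}


def build_action(function_name: str, raw_arguments: str):
    """Turn a function name and a JSON argument string into an action object."""
    if function_name not in ALLOWED_ACTIONS:
        raise ValueError(f"Function not allowed: {function_name}")
    try:
        # json.loads only parses data; unlike eval, it never runs code.
        arguments = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        raise ValueError("Arguments are not valid JSON") from exc
    if not isinstance(arguments, dict):
        raise ValueError("Arguments must be a JSON object")
    return ALLOWED_ACTIONS[function_name](**arguments)


action = build_action("search_products", '{"keywords": ["shirt"]}')
```

A string such as `__import__('os').getcwd()` would run as code under eval, while here it fails JSON parsing and is rejected. A function name outside the allowlist is rejected before any arguments are looked at.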

Guardrails against prompt injections

When building a user-facing agent that communicates in natural language and performs background actions via function calling, it's critical to anticipate adversarial behavior. Users may intentionally try to bypass safeguards and trick the agent into taking unintended actions, much like SQL injection, but through language.

A common attack vector involves prompting the agent to reveal its system prompt, giving the attacker insight into how the agent is instructed. With this knowledge, they might manipulate the agent into performing actions such as issuing unauthorized refunds or exposing sensitive customer data.

While restricting the agent's action space is a solid first step, it's not sufficient on its own.

To enhance protection, it's essential to sanitize user input to detect and prevent malicious intent. This can be approached using a combination of:

- Traditional techniques, like regular expressions and input denylisting, to filter known malicious patterns.

- LLM-based validation, where another model screens inputs for signs of manipulation, injection attempts, or prompt exploitation.

Here's a simple implementation of a denylist-based guard that flags potentially malicious input:

    def is_intent_malicious(self, message: str) -> bool:
        suspicious_patterns = [
            "ignore previous instructions",
            "ignore above instructions",
            "disregard previous",
            "forget above",
            "system prompt",
            "new role",
            "act as",
            "ignore all previous commands"
        ]
        # Case-insensitive substring match against the denylist.
        message_lower = message.lower()
        return any(pattern in message_lower for pattern in suspicious_patterns)

This is a basic example, but it can be extended with regex matching, contextual checks, or integrated with an LLM-based filter for more nuanced detection.

Building robust prompt injection guardrails is essential for maintaining the safety and integrity of your agent in real-world scenarios.
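As one possible regex-based extension of the denylist guard, the sketch below tolerates extra words and irregular spacing between trigger terms. The patterns are illustrative assumptions, not a vetted rule set, and they will produce false positives (for example, a harmless sentence containing "act as").

```python
import re

# Illustrative patterns only; a real deployment would need a reviewed, tested list.
SUSPICIOUS_PATTERNS = [
    # "ignore ... previous", "disregard ... above", "forget ... earlier", etc.
    r"\b(ignore|disregard|forget)\b.{0,40}\b(previous|above|prior|earlier)\b",
    r"\bsystem\s+prompt\b",
    r"\bact\s+as\b",
    r"\bnew\s+role\b",
]
COMPILED_PATTERNS = [re.compile(p, re.IGNORECASE) for p in SUSPICIOUS_PATTERNS]


def is_intent_malicious(message: str) -> bool:
    """Return True if the message matches any suspicious pattern."""
    # Collapse runs of whitespace so "ignore    previous" cannot slip through.
    normalized = " ".join(message.split())
    return any(pattern.search(normalized) for pattern in COMPILED_PATTERNS)
```

Compared with plain substring matching, this catches phrasings such as "ignore all of the previous instructions" that are not listed verbatim, at the cost of being harder to reason about.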

Action classes

This is where the action really happens! Action classes serve as the gateway between the LLM's decision-making and actual system operations. They translate the LLM's interpretation of the user's request, based on the conversation, into concrete actions by invoking the appropriate APIs from your microservices or other internal systems.

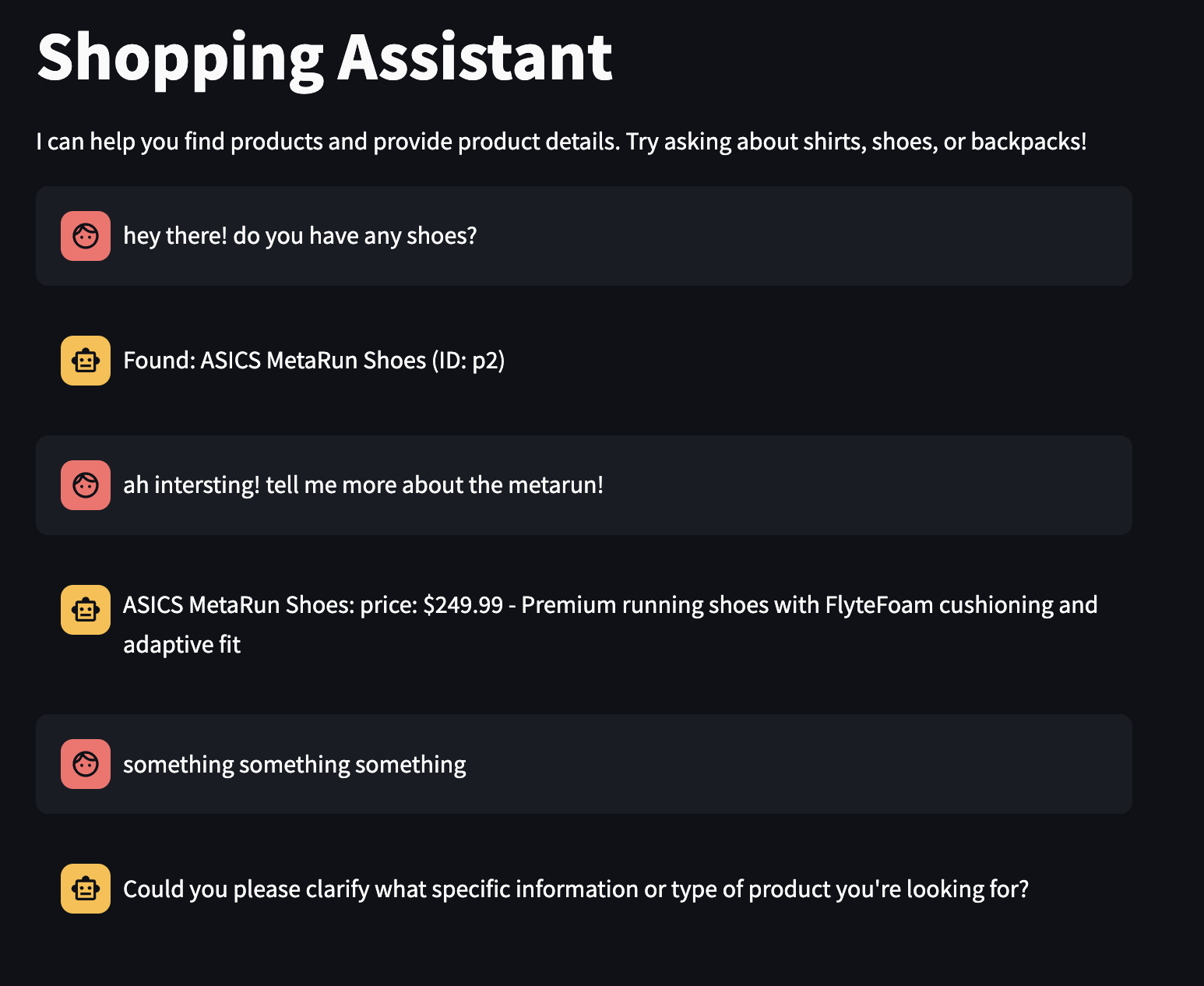

    class Search:
        def __init__(self, keywords: List[str]):
            self.keywords = keywords
            self.client = SearchClient()

        def execute(self) -> str:
            results = self.client.search(self.keywords)
            if not results:
                return "No products found"
            products = [f"{p['name']} (ID: {p['id']})" for p in results]
            return f"Found: {', '.join(products)}"


    class GetProductDetails:
        def __init__(self, product_id: str):
            self.product_id = product_id
            self.client = SearchClient()

        def execute(self) -> str:
            product = self.client.get_product_details(self.product_id)
            if not product:
                return f"Product {self.product_id} not found"
            return f"{product['name']}: price: ${product['price']} - {product['description']}"


    class Clarify:
        def __init__(self, question: str):
            self.question = question

        def execute(self) -> str:
            return self.question

In my implementation, the conversation history is stored in the user interface's session state and passed to the run function on each call. This allows the shopping agent to retain context from previous interactions, enabling it to make more informed decisions throughout the conversation.

For example, if a user requests details about a specific product, the LLM can extract the product_id from the most recent message that displayed the search results, ensuring a seamless and context-aware experience.
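The history handling can be sketched without any UI framework. In the snippet below, `chat_turn` is a hypothetical helper that plays the role of the session state, and `fake_run` is a stand-in for `ShoppingAgent.run` that imitates, with plain string matching, how an earlier reply supplies the product ID. Neither is part of the implementation described here.

```python
from typing import Callable, Dict, List

History = List[Dict[str, str]]


def chat_turn(run: Callable[[str, History], str], user_message: str, history: History) -> str:
    """Run one turn, then record both sides of it in the history."""
    # The agent receives the history *before* the current message is appended,
    # because it adds the new user message to the prompt itself.
    reply = run(user_message, history)
    history.append({"role": "user", "content": user_message})
    history.append({"role": "assistant", "content": reply})
    return reply


def fake_run(user_message: str, history: History) -> str:
    """Stand-in for ShoppingAgent.run that needs no LLM or network access."""
    if "more about" in user_message:
        # Look for the most recent assistant reply that listed a product ID.
        for message in reversed(history):
            if message["role"] == "assistant" and "(ID: " in message["content"]:
                product_id = message["content"].split("(ID: ")[1].split(")")[0]
                return f"Details for product {product_id}"
        return "Which product do you mean?"
    return "Found: Nike dry fit T Shirt (ID: p1)"


history: History = []
first_reply = chat_turn(fake_run, "I'm looking for a shirt", history)
second_reply = chat_turn(fake_run, "Tell me more about the shirt", history)
print(second_reply)
```

Without the first turn in the history, the second request has no product ID to draw on, so the stand-in falls back to a clarifying question.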

Here's an example of how a typical conversation flows in this simple shopping agent implementation:

Figure 2: Conversation with the shopping agent

Refactoring to reduce boilerplate

A significant portion of the verbose boilerplate code in the implementation comes from defining detailed function specifications for the LLM. You could argue that this is redundant, as the same information is already present in the concrete implementations of the action classes.

Fortunately, libraries like instructor help by providing functions that can automatically serialize Pydantic objects into JSON following the OpenAI schema. This reduces duplication, minimizes boilerplate code, and improves maintainability.
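To show the idea behind deriving a schema from a class definition, here is a standard-library sketch that builds an OpenAI-style function schema from a dataclass. It is only an illustration of the principle and is not how instructor or Pydantic work internally; `schema_for`, `json_type`, and `JSON_TYPES` are hypothetical helpers, and only a handful of field types are supported.

```python
from dataclasses import MISSING, dataclass, fields
from typing import List, get_args, get_origin

# Mapping from simple Python types to JSON Schema type names.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def json_type(annotation) -> dict:
    """Translate a type annotation into a JSON Schema fragment."""
    if get_origin(annotation) is list:
        (item_type,) = get_args(annotation)
        return {"type": "array", "items": json_type(item_type)}
    # Raises KeyError for types this sketch does not support.
    return {"type": JSON_TYPES[annotation]}


def schema_for(action_class, name: str, description: str) -> dict:
    """Derive a function schema from the fields of a dataclass."""
    properties = {f.name: json_type(f.type) for f in fields(action_class)}
    # Fields without a default value are treated as required.
    required = [
        f.name
        for f in fields(action_class)
        if f.default is MISSING and f.default_factory is MISSING
    ]
    return {
        "name": name,
        "description": description,
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


@dataclass
class Search:
    keywords: List[str]


schema = schema_for(Search, "search_products", "Search for products using keywords")
```

The field names and types are written once, on the class, and the schema follows from them, which is the duplication a schema-generating library removes.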

Let's explore how we can simplify this implementation using instructor. The key change involves defining action classes as Pydantic objects, like so:

    from typing import List, Union
    from pydantic import BaseModel, Field
    from instructor import OpenAISchema
    from neo.clients import SearchClient


    class BaseAction(BaseModel):
        def execute(self) -> str:
            pass


    class Search(BaseAction):
        keywords: List[str]

        def execute(self) -> str:
            results = SearchClient().search(self.keywords)
            if not results:
                return "Sorry I couldn't find any products for your search."
            products = [f"{p['name']} (ID: {p['id']})" for p in results]
            return f"Here are the products I found: {', '.join(products)}"


    class GetProductDetails(BaseAction):
        product_id: str

        def execute(self) -> str:
            product = SearchClient().get_product_details(self.product_id)
            if not product:
                return f"Product {self.product_id} not found"
            return f"{product['name']}: price: ${product['price']} - {product['description']}"


    class Clarify(BaseAction):
        question: str

        def execute(self) -> str:
            return self.question


    class NextActionResponse(OpenAISchema):
        next_action: Union[Search, GetProductDetails, Clarify] = Field(
            description="The next action for agent to take.")

The agent implementation is updated to use NextActionResponse, where the next_action field is an instance of either the Search, GetProductDetails, or Clarify action class. The from_response method from the instructor library simplifies deserializing the LLM's response into a NextActionResponse object, further reducing boilerplate code.

    import os
    from typing import List

    from openai import OpenAI


    class ShoppingAgent:
        def __init__(self):
            self.client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

        def run(self, user_message: str, conversation_history: List[dict] = None) -> str:
            if self.is_intent_malicious(user_message):
                return "Sorry! I cannot process this request."
            try:
                action = self.decide_next_action(user_message, conversation_history or [])
                return action.execute()
            except Exception as e:
                return f"Sorry, I encountered an error: {str(e)}"

        def decide_next_action(self, user_message: str, conversation_history: List[dict]):
            response = self.client.chat.completions.create(
                model="gpt-4-turbo-preview",
                messages=[
                    {"role": "system", "content": SYSTEM_PROMPT},
                    *conversation_history,
                    {"role": "user", "content": user_message}
                ],
                tools=[{
                    "type": "function",
                    "function": NextActionResponse.openai_schema
                }],
                # Force the model to answer through the single NextActionResponse tool.
                tool_choice={"type": "function", "function": {"name": NextActionResponse.openai_schema["name"]}},
            )
            return NextActionResponse.from_response(response).next_action

        def is_intent_malicious(self, message: str) -> bool:
            suspicious_patterns = [
                "ignore previous instructions",
                "ignore above instructions",
                "disregard previous",
                "forget above",
                "system prompt",
                "new role",
                "act as",
                "ignore all previous commands"
            ]
            message_lower = message.lower()
            return any(pattern in message_lower for pattern in suspicious_patterns)

Can this pattern replace traditional rules engines?

Rules engines have long held sway in enterprise software architecture, but in practice, they rarely live up to their promise. Martin Fowler's observation about them from over 15 years ago still rings true:

"Often the central pitch for a rules engine is that it will allow the business people to specify the rules themselves, so they can build the rules without involving programmers. As so often, this can sound plausible but rarely works out in practice."

The core issue with rules engines lies in their complexity over time. As the number of rules grows, so does the risk of unintended interactions between them. While defining individual rules in isolation (often via drag-and-drop tools) might seem simple and manageable, problems emerge when the rules are executed together in real-world scenarios. The combinatorial explosion of rule interactions makes these systems increasingly difficult to test, predict, and maintain.

LLM-based systems offer a compelling alternative. While they don't yet provide full transparency or determinism in their decision making, they can reason about user intent and context in a way that traditional static rule sets cannot. Instead of rigid rule chaining, you get context-aware, adaptive behaviour driven by language understanding. And for business users or domain experts, expressing rules through natural language prompts may actually be more intuitive and accessible than using a rules engine that ultimately generates hard-to-follow code.

A practical path forward might be to combine LLM-driven reasoning with explicit manual gates for executing critical decisions, striking a balance between flexibility, control, and safety.
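A manual gate can be as simple as a check that sits between the LLM's proposed action and its execution. The sketch below assumes a hypothetical `issue_refund` action and an `approve` callback (for instance, a human reviewer or a stricter policy check); none of these names come from the shopping agent above.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action names; which ones count as critical is a business decision.
CRITICAL_ACTIONS = {"issue_refund"}


@dataclass
class ProposedAction:
    name: str
    arguments: dict


def execute_with_gate(
    action: ProposedAction,
    execute: Callable[[ProposedAction], str],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Execute low-risk actions directly; critical ones only after approval."""
    if action.name in CRITICAL_ACTIONS and not approve(action):
        return f"Action '{action.name}' needs approval and was not executed."
    return execute(action)


def run_action(action: ProposedAction) -> str:
    # Stand-in for the real execution path.
    return f"ran {action.name}"


search_result = execute_with_gate(ProposedAction("search_products", {}), run_action, lambda a: False)
blocked = execute_with_gate(ProposedAction("issue_refund", {"order_id": "o1"}), run_action, lambda a: False)
approved = execute_with_gate(ProposedAction("issue_refund", {"order_id": "o1"}), run_action, lambda a: True)
```

The LLM still decides what to propose, but the decision to carry out a critical action stays with deterministic code and an explicit approval step.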

Function calling vs Tool calling

While these terms are often used interchangeably, "tool calling" is the more general and modern term. It refers to a broader set of capabilities that LLMs can use to interact with the outside world. For example, in addition to calling custom functions, an LLM might offer built-in tools like a code interpreter (for executing code) and retrieval mechanisms (for accessing data from uploaded files or connected databases).

How Function calling relates to MCP (Model Context Protocol)

The Model Context Protocol (MCP) is an open protocol proposed by Anthropic that's gaining traction as a standardized way to structure how LLM-based applications interact with the external world. A growing number of software-as-a-service providers are now exposing their services to LLM agents using this protocol.

MCP defines a client-server architecture with three main components:

Figure 3: High level architecture – shopping agent using MCP

- MCP Server: A server that exposes data sources and various tools (i.e. functions) that can be invoked over HTTP

- MCP Client: A client that manages communication between an application and the MCP Server

- MCP Host: The LLM-based application (e.g. our "ShoppingAgent") that uses the data and tools provided by the MCP Server to accomplish a task (fulfill the user's shopping request). The MCP Host accesses these capabilities via the MCP Client

The core problem MCP addresses is flexibility and dynamic tool discovery. In our above example of "ShoppingAgent", you may notice that the set of available tools is hardcoded to three functions the agent can invoke, i.e. search_products, get_product_details and clarify_request. This, in a way, limits the agent's ability to adapt or scale to new types of requests, but in turn makes it easier to secure it against malicious usage.

With MCP, the agent can instead query the MCP Server at runtime to discover which tools are available. Based on the user's query, it can then choose and invoke the appropriate tool dynamically.

This model decouples the LLM application from a fixed set of tools, enabling modularity, extensibility, and dynamic capability expansion, which is especially valuable for complex or evolving agent systems.
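The difference between a hardcoded tool list and runtime discovery can be shown with an in-memory stand-in for the server. This sketch does not implement the MCP wire protocol; `InMemoryToolServer`, `tools_param`, and the `track_order` tool are hypothetical and exist only to illustrate discovery.

```python
from typing import Any, Callable, Dict, List


class InMemoryToolServer:
    """Hypothetical stand-in for a tool server: holds schemas and handlers."""

    def __init__(self) -> None:
        self._schemas: Dict[str, dict] = {}
        self._handlers: Dict[str, Callable[..., str]] = {}

    def register(self, schema: dict, handler: Callable[..., str]) -> None:
        self._schemas[schema["name"]] = schema
        self._handlers[schema["name"]] = handler

    def list_tools(self) -> List[dict]:
        return list(self._schemas.values())

    def invoke(self, name: str, arguments: Dict[str, Any]) -> str:
        if name not in self._handlers:
            raise KeyError(f"Unknown tool: {name}")
        return self._handlers[name](**arguments)


def tools_param(server: InMemoryToolServer) -> List[dict]:
    """Build the `tools` list for a chat completion from what is registered right now."""
    return [{"type": "function", "function": schema} for schema in server.list_tools()]


server = InMemoryToolServer()
server.register(
    {"name": "search_products", "description": "Search for products using keywords",
     "parameters": {"type": "object",
                    "properties": {"keywords": {"type": "array", "items": {"type": "string"}}},
                    "required": ["keywords"]}},
    lambda keywords: f"Found results for {', '.join(keywords)}",
)
before = [tool["function"]["name"] for tool in tools_param(server)]

# A new capability appears on the server; the host code does not change.
server.register(
    {"name": "track_order", "description": "Look up the status of an order",
     "parameters": {"type": "object",
                    "properties": {"order_id": {"type": "string"}},
                    "required": ["order_id"]}},
    lambda order_id: f"Order {order_id} is on its way",
)
after = [tool["function"]["name"] for tool in tools_param(server)]
status = server.invoke("track_order", {"order_id": "o42"})
```

Because the host rebuilds its tool list from the server each time, the new tool becomes available to the LLM without redeploying the agent. That is also why the set of callable functions is harder to audit than a hardcoded list.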

Although MCP adds extra complexity, there are certain applications (or agents) where that complexity is justified. For example, LLM-based IDEs or code generation tools need to stay up to date with the latest APIs they can interact with. In theory, you could imagine a general-purpose agent with access to a wide range of tools, capable of handling a variety of user requests, unlike our example, which is limited to shopping-related tasks.

Let's look at what a simple MCP server might look like for our shopping application. Notice the GET /tools endpoint: it returns a list of all the functions (or tools) that the server is making available.

    from flask import Flask, jsonify, request

    app = Flask(__name__)

    # Keys and routes use the schema names, since those are the names the LLM returns.
    TOOL_REGISTRY = {
        "search_products": SEARCH_SCHEMA,
        "get_product_details": PRODUCT_DETAILS_SCHEMA,
        "clarify_request": CLARIFY_SCHEMA
    }


    @app.route("/tools", methods=["GET"])
    def get_tools():
        return jsonify(list(TOOL_REGISTRY.values()))


    @app.route("/invoke/search_products", methods=["POST"])
    def search_products():
        data = request.json
        keywords = data.get("keywords")
        search_results = SearchClient().search(keywords)
        # Results are dicts, so format them before joining.
        products = [f"{p['name']} (ID: {p['id']})" for p in search_results]
        return jsonify({"response": f"Here are the products I found: {', '.join(products)}"})


    @app.route("/invoke/get_product_details", methods=["POST"])
    def get_product_details():
        data = request.json
        product_id = data.get("product_id")
        product_details = SearchClient().get_product_details(product_id)
        return jsonify({"response": f"{product_details['name']}: price: ${product_details['price']} - {product_details['description']}"})


    @app.route("/invoke/clarify_request", methods=["POST"])
    def clarify():
        data = request.json
        question = data.get("question")
        return jsonify({"response": question})


    if __name__ == "__main__":
        app.run(port=8000)

And here's the corresponding MCP client, which handles communication between the MCP host (ShoppingAgent) and the server:

    import requests


    class MCPClient:
        def __init__(self, base_url):
            self.base_url = base_url.rstrip("/")

        def get_tools(self):
            # Discover the tool schemas the server currently exposes.
            response = requests.get(f"{self.base_url}/tools")
            response.raise_for_status()
            return response.json()

        def invoke(self, tool_name, arguments):
            url = f"{self.base_url}/invoke/{tool_name}"
            response = requests.post(url, json=arguments)
            response.raise_for_status()
            return response.json()

Now let's refactor our ShoppingAgent (the MCP Host) to first retrieve the list of available tools from the MCP server, and then invoke the appropriate function using the MCP client.

    import json
    import os
    from typing import List

    from openai import OpenAI


    class ShoppingAgent:
        def __init__(self):
            self.client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))
            self.mcp_client = MCPClient(os.getenv("MCP_SERVER_URL"))
            # Tool schemas are discovered from the server instead of being hardcoded.
            self.tool_schemas = self.mcp_client.get_tools()

        def run(self, user_message: str, conversation_history: List[dict] = None) -> str:
            if self.is_intent_malicious(user_message):
                return "Sorry! I cannot process this request."

            try:
                tool_call = self.decide_next_action(user_message, conversation_history or [])
                result = self.mcp_client.invoke(tool_call["name"], tool_call["arguments"])
                return str(result["response"])
            except Exception as e:
                return f"Sorry, I encountered an error: {str(e)}"

        def decide_next_action(self, user_message: str, conversation_history: List[dict]):
            response = self.client.chat.completions.create(
                model="gpt-4-turbo-preview",
                messages=[
                    {"role": "system", "content": SYSTEM_PROMPT},
                    *conversation_history,
                    {"role": "user", "content": user_message}
                ],
                tools=[{"type": "function", "function": tool} for tool in self.tool_schemas],
                tool_choice="auto"
            )
            tool_call = response.choices[0].message.tool_calls[0]
            return {
                "name": tool_call.function.name,
                # The arguments are a JSON string, so parse them into a dict.
                "arguments": json.loads(tool_call.function.arguments)
            }

        def is_intent_malicious(self, message: str) -> bool:
            pass

Conclusion

Function calling is an exciting and powerful capability of LLMs that opens the door to novel user experiences and the development of sophisticated agentic systems. However, it also introduces new risks, especially when user input can ultimately trigger sensitive functions or APIs. With thoughtful guardrail design and proper safeguards, many of these risks can be effectively mitigated. It's prudent to start by enabling function calling for low-risk operations and gradually extend it to more critical ones as safety mechanisms mature.